LunarLab by Frontier Development Lab (FDL) applies AI technologies to the lunar environment to push the frontiers of research and develop new tools to help solve some of the biggest challenges that lunar exploration may face.

LunarLab is a partnership between the Luxembourg Space Agency (LSA), the European Space Resources Innovation Centre (ESRIC), and Trillium Technologies. It was developed with compute and technical support from Scan, NVIDIA and Google Cloud, and with specialist geology skills from Datarock.

LunarLab develops AI-driven tools for lunar science and exploration. Users engage with LunarLab in different ways, from scientists validating models to developers integrating data into new tools. By mapping their goals, needs and measures of success, LunarLab ensures its output is practical, valuable and inspiring for all.

Project Background

As international space agencies plan for a sustained human presence on the Moon, the ability to locate and effectively utilise in-situ resources becomes critical. Key lunar resources such as ice and oxygen are bound within compounds of titanium, iron and aluminium oxides. However, these are unevenly distributed across the lunar surface and are mostly inferred from disparate orbital sensor data. Furthermore, because lunar observation is carried out by a large number and wide range of instruments, several challenges arise when attempting to gain a comprehensive view.

The majority of recorded data is fragmented, as its sources are so numerous. A single lunar satellite may have various instruments including cameras, altimeters, spectrometers and radars — and there are many individual satellites that complicate the picture. The data ranges from optical and spectroscopic imagery to geophysical measurements such as gravity, and from thermal emissions to topography and reflectivity measurements. These datasets are typically stored in separate repositories in incompatible formats due to the differing data types and spatial resolutions.

The current best practice has resulted in the United States Geological Survey (USGS) Unified Geologic Map of the Moon, a product of time-consuming interpretation of datasets that is limited by human bias and cognitive restrictions. Some machine learning and deep learning approaches have yielded better models, but each in only a single modality. The LunarLab team wanted to improve on these efforts by designing a multi-modal foundation model that combines the wide range of disparate data sources.

Project Approach

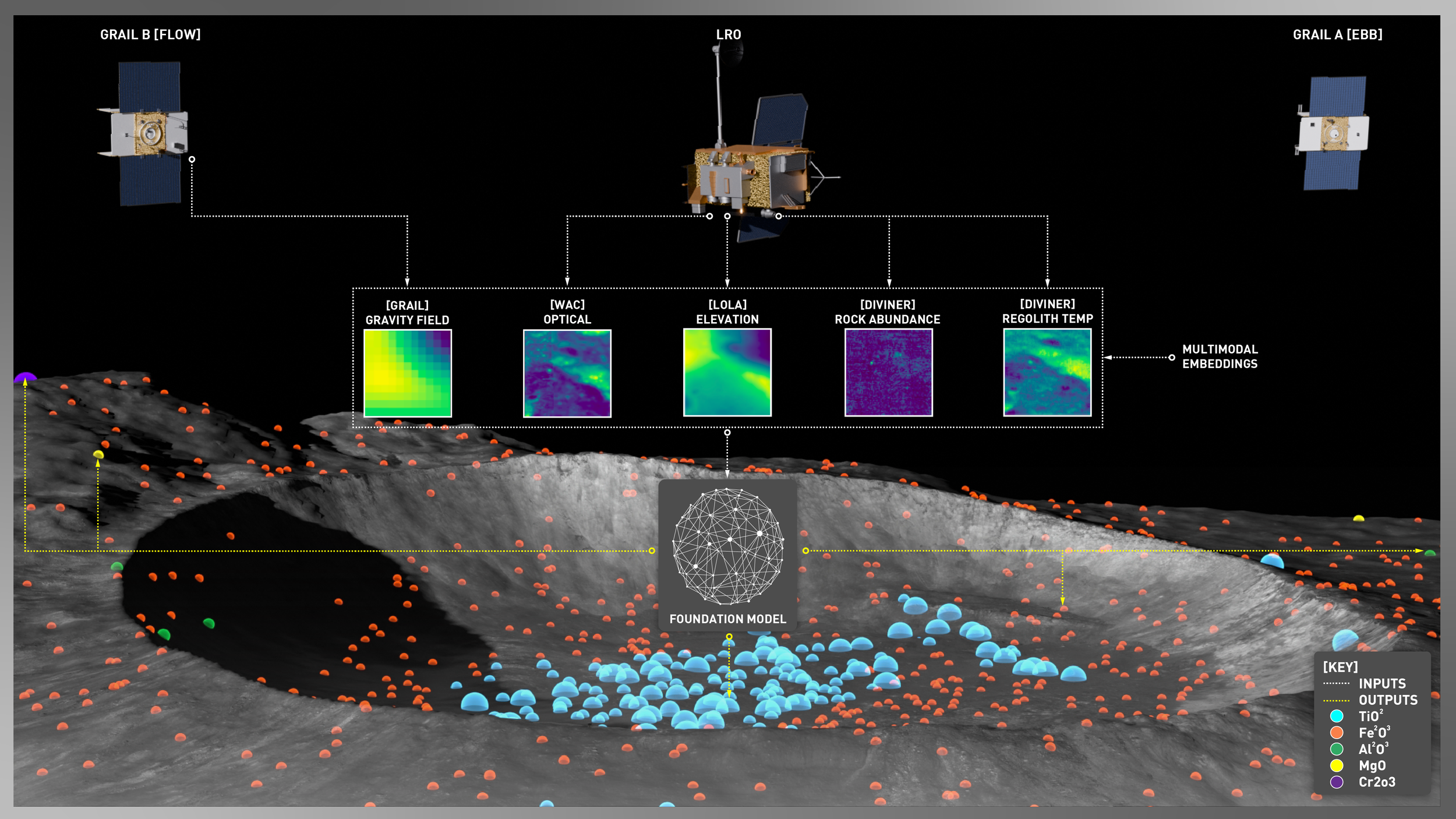

To begin developing the foundation model, the LunarLab team turned to numerous static lunar maps collected from the Lunar Reconnaissance Orbiter (LRO), the Gravity Recovery and Interior Laboratory (GRAIL) mission and the Clementine Mineralogy Abundance Map. These datasets provided complementary views of the lunar surface and subsurface spanning 18 individual data channels, as listed in the table below.

| Mission | Instrument | Modalities | Channels | Description |
|---|---|---|---|---|
| LRO | Lunar Reconnaissance Orbiter Camera (LROC) | Multispectral Optical Imagery | 7 | UV to visible bands (321–689 nm at ~400 m/px) |
| LRO | Lunar Orbiter Laser Altimeter (LOLA) | Topographic Elevation | 1 | Digital Elevation Model (DEM) at ~118 m/px |
| LRO | Diviner Lunar Radiometer Experiment (DLRE) | Thermal Emission | 3 | Regolith temperature, rock abundance and bolometric temperature (at ~200–500 m/px) |
| LRO | Mini-RF | Radar | 2 | Circular Polarisation Ratio (CPR) at S-band (12.6 cm) and X-band (4.2 cm) at ~100–300 m/px |
| GRAIL | Lunar Gravity Ranging System | Gravitational Anomaly | 4 | Bouguer gravity anomaly (10–100 km), crustal thickness, Water Equivalent Hydrogen (WEH) |
| Clementine | Ultraviolet-Visible Spectroscopy (UVVIS) | Reflectance | 1 | Spectral reflectance (at 750 nm, ~118 m/px) |

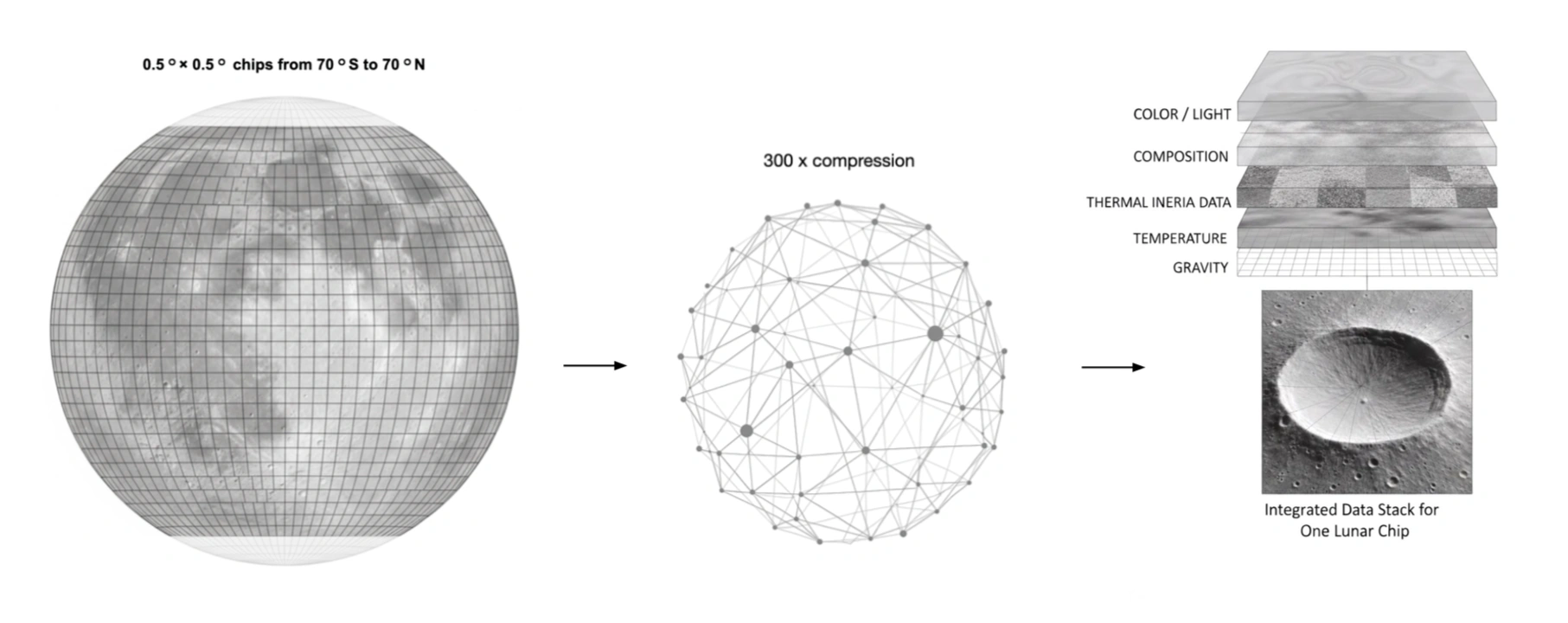

Each of these datasets was pre-processed to give them similar value ranges and then combined into a common cylindrical projection on a 0.5° grid covering the lunar surface between 70°S and 70°N latitude. The lunar poles were excluded due to incomplete coverage in some datasets. This surface was then cut into 201,600 chips covering approximately 32 x 32km each at the equator. Of these chips, the team reserved 70% for model training, 20% for validation and the remaining 10% for testing.
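The chip count quoted above can be checked with simple arithmetic. The sketch below assumes one chip per 0.5° grid cell, which the text does not state explicitly, and only reproduces the counts for the 70/20/10 split; how chips were actually assigned to each subset is not described. All function and variable names are illustrative.

```python
# Grid parameters taken from the text; names are illustrative only.
GRID_DEG = 0.5                  # grid spacing in degrees
LAT_MIN, LAT_MAX = -70.0, 70.0  # latitude band covered (poles excluded)
LON_SPAN = 360.0                # full longitude coverage


def count_chips(grid_deg=GRID_DEG, lat_min=LAT_MIN, lat_max=LAT_MAX, lon_span=LON_SPAN):
    """Return (rows, cols, total), assuming one chip per grid cell."""
    cols = round(lon_span / grid_deg)
    rows = round((lat_max - lat_min) / grid_deg)
    return rows, cols, rows * cols


def split_counts(total, percentages=(70, 20, 10)):
    """Return (train, validation, test) sizes for integer percentage splits."""
    train = total * percentages[0] // 100
    val = total * percentages[1] // 100
    test = total - train - val  # remainder goes to the test set
    return train, val, test


rows, cols, total = count_chips()
print(rows, cols, total)    # 280 720 201600
print(split_counts(total))  # (141120, 40320, 20160)
```

Under this assumption the grid yields 280 rows by 720 columns, which matches the 201,600 chips reported.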

The team's approach was to stack all the data channels into a single large image and apply a multi-modal masked autoencoder (MultiMAE) that treated each modality independently within a shared architecture. Each modality had its input tile divided into 8x8 pixel patches, resulting in a set of tokens that acted as the input for a vision transformer (ViT) model with a vector size of 768. The final model ran to over 113 million parameters and was trained for 500,000 iterations using self-supervised deep learning techniques on three cloud-based NVIDIA H100 GPUs.
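To illustrate the patching step, here is a minimal sketch of how a single-channel tile can be cut into 8x8 pixel patches. The 32x32 tile size is a hypothetical value chosen for the example, since the text does not give the real tile dimensions, and `patchify` is an illustrative helper rather than the team's code. In a ViT, each flattened patch is then typically projected by a learned linear layer to the model width (768 here); that projection is omitted.

```python
def patchify(tile, patch=8):
    """Split a 2-D tile (list of equal-length rows) into flattened patch x patch blocks."""
    height, width = len(tile), len(tile[0])
    if height % patch or width % patch:
        raise ValueError("tile dimensions must be multiples of the patch size")
    tokens = []
    # Walk the tile in row-major order, one patch at a time.
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            block = [
                tile[r][c]
                for r in range(top, top + patch)
                for c in range(left, left + patch)
            ]
            tokens.append(block)
    return tokens


# Hypothetical 32x32-pixel tile for one modality (assumed size, synthetic values).
TILE = 32
tile = [[r * TILE + c for c in range(TILE)] for r in range(TILE)]

tokens = patchify(tile)
print(len(tokens), len(tokens[0]))  # 16 64
```

With these assumed dimensions each modality contributes 16 tokens of 64 pixel values; a masked autoencoder would hide a subset of such tokens and train the model to reconstruct them.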

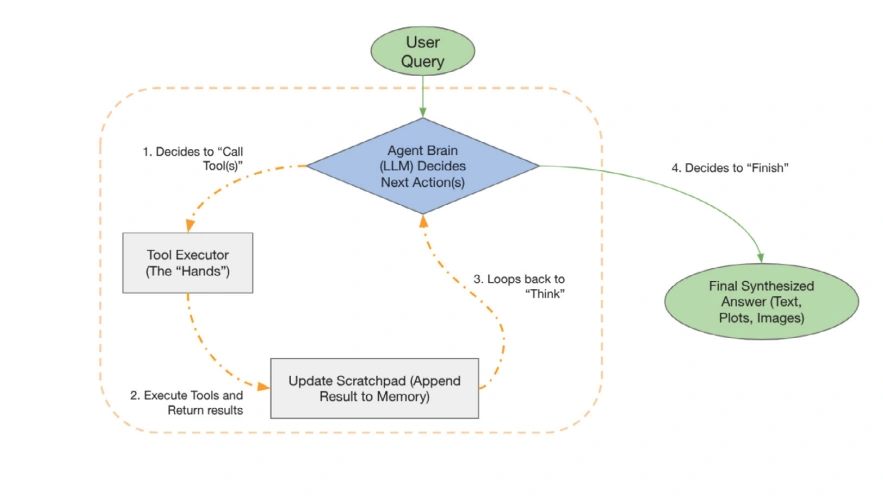

A further core objective of the foundation model was to make its outputs accessible for intuitive exploration. To achieve this, the team developed a sophisticated AI agent that acts as a natural language interface, enabling users to ask the Moon questions without needing to write code or understand the underlying datasets. A stateful, multi-step reasoning system was built using the LangGraph framework, defining a clear and robust workflow allowing the agent to dynamically plan and execute actions to fulfil a user's request.

The final objective was to make the model small enough that no advanced computing infrastructure was required for users.

Project Results

The resulting foundation model, named Lunar-FM, is a significant step forward in planetary science. It proved to be a scalable, self-supervised method for integrating 18 heterogeneous data layers across five modalities (optical, topography, thermal, radar, gravity) into a cohesive model for the first time. Lunar-FM produces a unified, information-dense 768-dimensional latent embedding space that represents global lunar properties at a 0.5° × 0.5° resolution. This standardises data representation and achieves a 300x data compression while retaining the rich semantic information required for diverse downstream tasks, including terrain classification, anomaly detection, rare feature discovery and global mapping based on sparse samples.

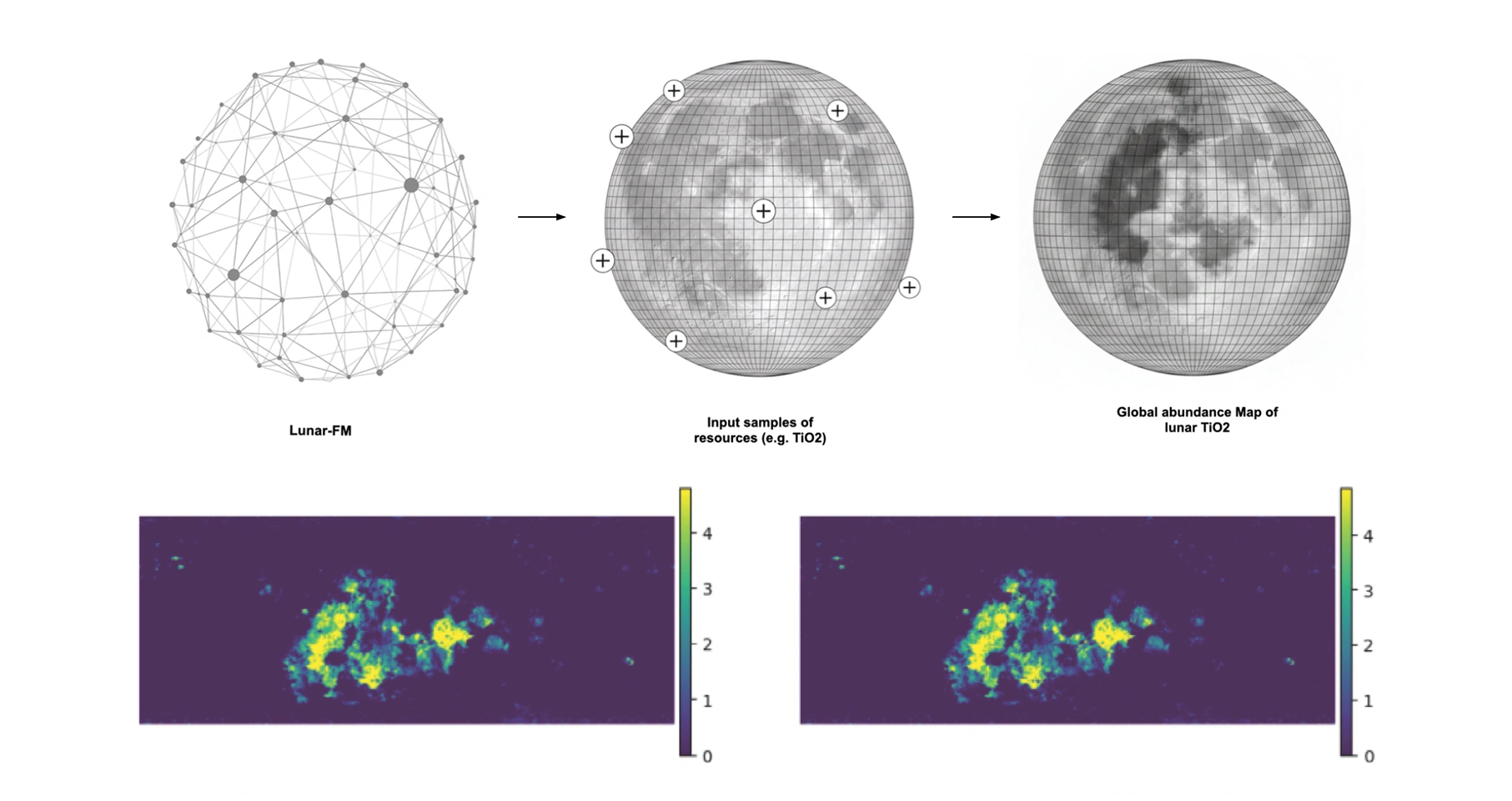

A foundation model is generalisable: a single model can be adapted to many different downstream tasks. The team demonstrated this by using Lunar-FM to generate a global map of titanium dioxide (TiO₂) using only eight validated samples.
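The text does not say how the sparse samples were turned into a global map. One common way to use a pretrained embedding space with very few labels is similarity-weighted nearest-neighbour regression, sketched below as an assumption rather than the team's method. The 2-D vectors stand in for the 768-dimensional embeddings, and the label values are synthetic.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def predict(embedding, samples, k=3):
    """Similarity-weighted mean of the k labelled samples closest to `embedding`.

    `samples` is a list of (embedding, value) pairs.
    """
    ranked = sorted(samples, key=lambda s: cosine(embedding, s[0]), reverse=True)[:k]
    weights = [max(cosine(embedding, emb), 0.0) for emb, _ in ranked]
    total = sum(weights)
    if total == 0:
        # No positively similar neighbour: fall back to a plain mean.
        return sum(value for _, value in ranked) / len(ranked)
    return sum(w * value for w, (_, value) in zip(weights, ranked)) / total


# Synthetic labelled samples: (toy embedding, made-up abundance value).
samples = [([1.0, 0.0], 10.0), ([0.0, 1.0], 2.0)]

print(predict([1.0, 0.0], samples, k=1))  # 10.0
print(predict([0.0, 1.0], samples, k=1))  # 2.0
```

Applying such a predictor to the embedding of every grid cell would produce a global map from a handful of labelled locations, which is the kind of sparse-sample mapping described above.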

The agentic AI element, Lunar Analyst Copilot, bridges the gap between complex multidimensional lunar data and natural language queries by scientists, geologists and mission planners. The system utilises a Large Language Model (LLM) as its front-end, allowing users to interact with the vast lunar dataset using queries such as "where are the best places to land near the South Pole to search for water ice?". The LLM acts as an interpreter, translating the high-level human query into a specific sequence of executable data science tasks, understanding which underlying data modalities and models are required to synthesise an answer.

The capabilities of Lunar-FM support a range of critical applications for science, resource utilisation and mission planning, including:

- Identification of high-potential resource sites such as ilmenite-rich basalts, FeO-rich regolith or KREEP terrains rich in potassium, rare earth elements and phosphorus.

- Locating and characterising polar deposits, including mapping likely water-ice stability zones using thermal data.

- Selecting optimal regions of interest by combining topographical and physical constraints: slope, surface roughness, rock abundance and illumination conditions.

- Modelling thermal environments for lander, rover and habitat design, considering diurnal cycles, cold traps in permanently shadowed regions and overall thermal stress on hardware.

- Assessing regolith mechanical properties such as grain size and compaction to support excavation and in-situ resource utilisation (ISRU) operations.

- Detecting subsurface geological structures such as mass concentrations linked to mineral enrichment, primarily using gravity anomaly data.

- Estimating near-surface hydrogen abundance — a proxy for water content — for water extraction feasibility studies.

- Achieving a 20% performance boost in global titanium dioxide mapping using only ten expert-validated samples, illustrating how foundation models unlock planetary-scale insights with minimal overhead.

The potential to interact with the data via natural language democratises advanced analysis, providing easily operable tools for geologists and prospectors without requiring extensive coding expertise.

Conclusions

Lunar-FM acts as a translational bridge between pure scientific understanding of lunar processes and the operational requirements of missions. It converts theoretical knowledge into science-informed insights and resource-focused targets. As part of Lunar-FM's evaluation, geologists have been able to improve lunar mapping. The continuous inclusion of new observational data from future missions such as Artemis presents an exciting possibility, as foundation models should not be static or standalone solutions. The agentic capabilities of Lunar-FM unlock a future where multiple models can work together to support researchers and surface operations.

You can learn more about LunarLab 2025 research and the Lunar-FM project by reading the LunarLab 2025 Results Booklet, where a summary, poster and full technical memorandum can be viewed and downloaded.

The Scan Partnership

Scan is a major supporter of LunarLab 2025 and FDL Europe, building on its participation in the previous five years' Earth Systems Lab events. As an NVIDIA Elite Solution Provider, Scan contributes multiple DGX supercomputers via Scan Cloud to facilitate much of the machine learning and deep learning development and training required during the research sprint.

Project wins

- Successful development of Lunar-FM to address the fragmentation and heterogeneity of remote sensing data essential for lunar resource prospecting and scientific analysis

- Successful overlay of the Lunar Analyst Copilot to enable easier interrogation of multiple data sources and models via a natural language interface

- Fundamental enabler of data fusion, foundation model tokenisation and training, and LLM integration using NVIDIA DGX systems via Scan Cloud

James Parr

Founder, FDL / CEO, Trillium Technologies

"FDL has established an impressive success rate for applied AI research output at an exceptional pace. Research outcomes are regularly accepted to respected journals, presented at scientific conferences and have been deployed on NASA and ESA initiatives — and in space."

Glyn Merga

Head of Cloud Architecture, Scan

"We are proud to be continuing our work with FDL and NVIDIA to support the new LunarLab 2025 event. It is a huge privilege to be associated with such ground-breaking research efforts in light of the renewed focus on lunar missions in the near future."

