Earth Systems Lab (ESL) by FDL applies AI technologies to space science, pushing the frontiers of research and developing new tools to help solve some of the biggest challenges that humanity faces. These include understanding the effects of climate change, predicting space weather, improving disaster response and identifying meteorites that could hold the key to the history of the universe.

FDL is a public-private partnership with the European Space Agency (ESA) and Trillium Technologies. It works with commercial partners such as Scan, NVIDIA, Google Cloud, IBM and Airbus, amongst others, to provide the expertise and computing resources necessary for rapid experimentation and iteration in data-intensive areas.

Project Background

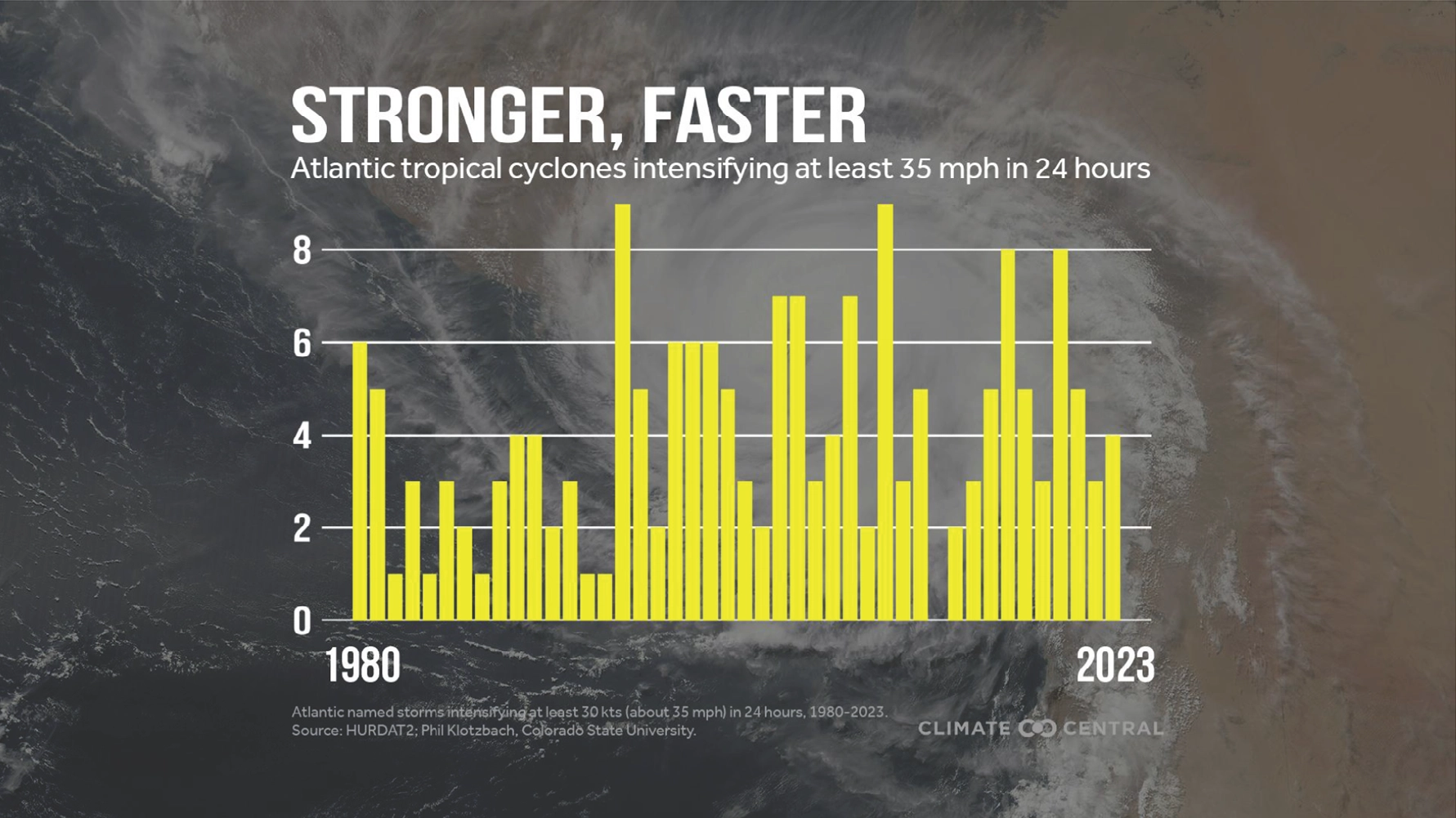

Predicting the intensity and path of tropical cyclones is a major challenge in meteorology. The devastating impacts of storms such as Hurricane Dorian in 2019, Hurricane Milton in 2024 and Hurricane Melissa in 2025, which rapidly intensified from Category 1 to Category 5 superstorms, highlight the critical need for more accurate forecasting. One key reason for this difficulty is that the crucial microphysical properties of cyclones, such as ice and water content, are inadequately represented in forecasting systems. Most satellite data provides only a two-dimensional view of cloud tops, and this lack of detailed information makes it difficult to understand the complex processes that drive cyclone intensification and to provide timely warnings to affected populations. In particular, challenges remain in forecasting rapid intensification, where winds increase by more than 35mph (56kph) within a 24-hour period. As the graphic below demonstrates, such events are becoming increasingly common as climate change advances.

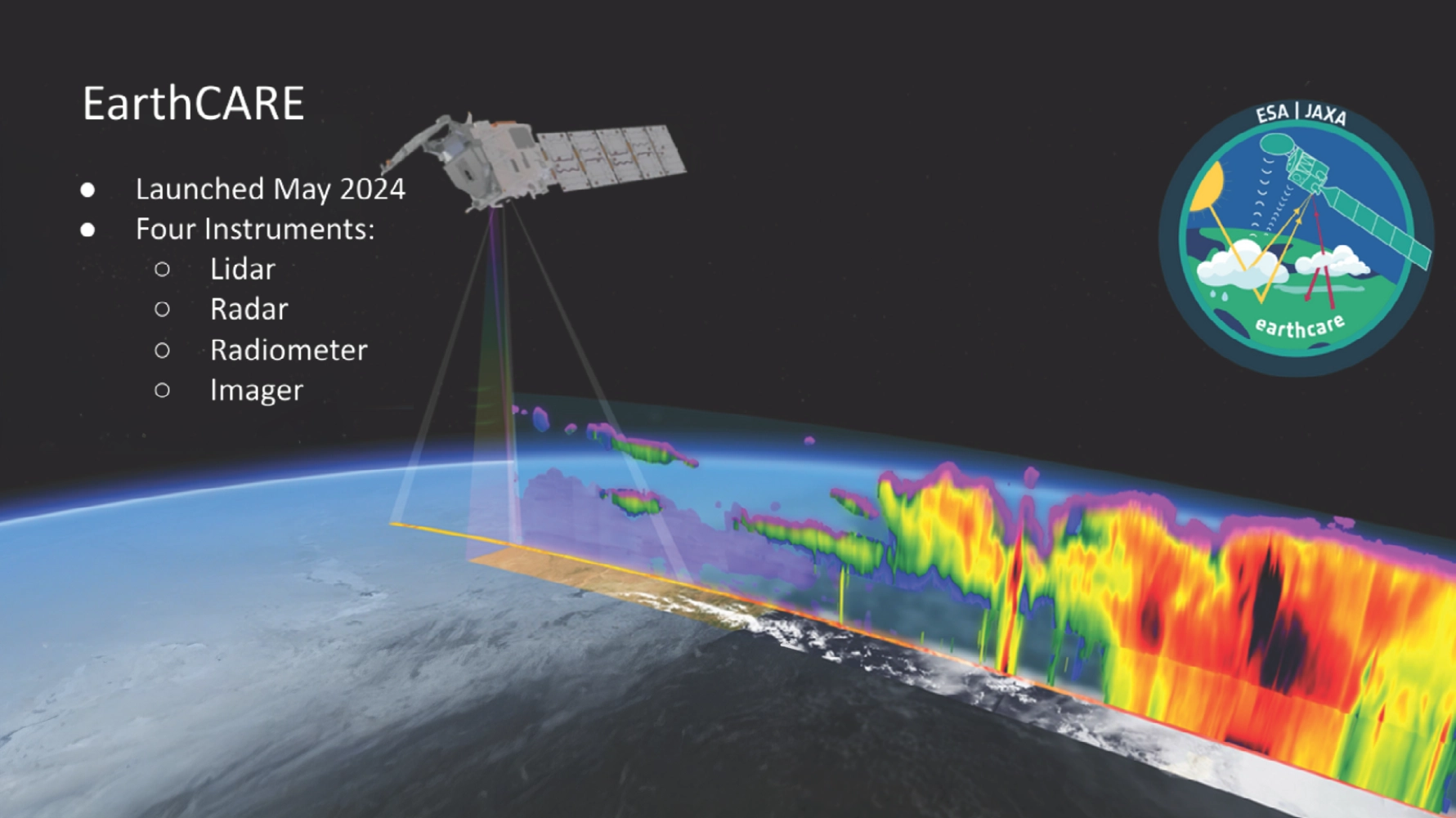
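The rapid intensification criterion described above (a wind increase of more than 35mph within 24 hours) can be illustrated with a minimal sketch. The function name, the input layout and the sample wind values are hypothetical and chosen only for illustration; they are not taken from the ESL 2025 project.

```python
from typing import List, Tuple

# Threshold and window quoted in the text above.
RI_THRESHOLD_MPH = 35.0
RI_WINDOW_HOURS = 24.0


def find_rapid_intensification(
    times_h: List[float],
    winds_mph: List[float],
    threshold_mph: float = RI_THRESHOLD_MPH,
    window_h: float = RI_WINDOW_HOURS,
) -> List[Tuple[int, int]]:
    """Return (start, end) index pairs where the wind rises by more than
    threshold_mph within window_h hours. times_h must be sorted ascending."""
    events = []
    for i in range(len(times_h)):
        for j in range(i + 1, len(times_h)):
            if times_h[j] - times_h[i] > window_h:
                break  # later observations fall outside the window
            if winds_mph[j] - winds_mph[i] > threshold_mph:
                events.append((i, j))
    return events


# Hypothetical six-hourly track: hours since first fix, sustained wind in mph.
sample_times = [0, 6, 12, 18, 24, 30]
sample_winds = [75, 80, 90, 100, 115, 120]
ri_events = find_rapid_intensification(sample_times, sample_winds)
```

For this made-up track, two 24-hour windows exceed the threshold: hours 0 to 24 and hours 6 to 30, each with a 40mph rise.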

Building on Earth observation systems such as CloudSat, launched in 2006, recent years have seen great advancements in the study of climate change and weather forecasting, notably with the launch of EarthCARE (Earth Cloud Aerosol and Radiation Explorer) in 2024. This latest satellite is equipped with active and passive sensors that provide previously unavailable data on clouds and their processes across the globe. EarthCARE provides a global operational cloud product every 16 days, derived by merging its multi-spectral imagery with observations from its cloud profiling radar.

Following the EarthCARE launch, there is increased interest in combining active sensor data with imaging to extend the value of these measurements and gain scientific insight. The team sought to build on last year's ESL 2024 3D CLOUDS USING MULTI-SENSORS study to showcase how machine learning and AI can be used to derive 3D cloud products from 2D imaging observations. They would create continuous, near-global 3D cloud maps as a novel dataset for research, encompassing geometric structure and microphysical properties in three dimensions. The ultimate aim was to compare their results with EarthCARE's output to assess the project's accuracy.

Project Approach

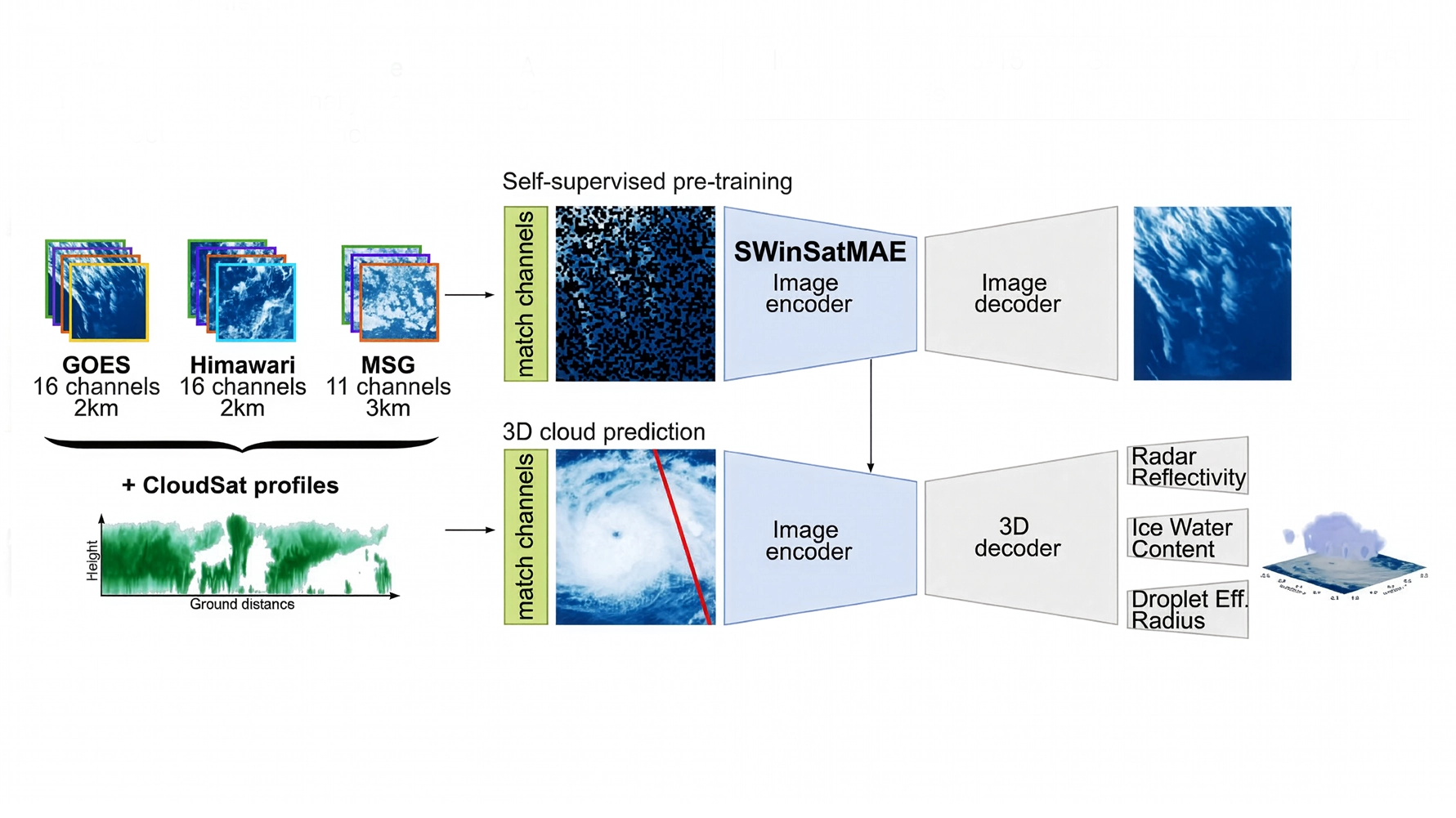

The team used input data from multiple satellite sources, covering both passive geostationary data and active radar data, in order to combine legacy and modern data. The geostationary data included ESA's MSG (MeteoSat Second Generation) Spinning Enhanced Visible and Infrared Imager (SEVIRI), the NASA (National Aeronautics and Space Administration) GOES (Geostationary Operational Environmental Satellite) Advanced Baseline Imager (ABI) and the Japan Meteorological Agency's Advanced Himawari Imager (AHI). SEVIRI provided a long-term data record from 2004 to the present day, whilst ABI and AHI are more modern, providing data from 2015 and 2018 respectively.

The active data was obtained using vertical cloud profiles from NASA's CloudSat, available from 2006 to 2020. These sources were then combined with historic hurricane track data, so the new models could be created across a typical geospatial storm lifecycle.

The SEVIRI, ABI and AHI instruments provided reflectance and brightness temperature every 10-15 minutes, across numerous spectral channels and resolutions, across a view of 45°. The CloudSat data included radar reflectivity, ice water content and droplet radius. The team then prepared three AI-ready datasets from this combined satellite data. The first was a pre-training dataset of 150,000 randomly sampled patches of 1,024 x 1,024 pixels, giving large-scale unlabelled data for self-supervised learning. The second was a cloud dataset that paired 80,000 geostationary images of 256 x 256 pixels with spatially and temporally aligned CloudSat overpasses, creating matched image-profile data for supervised training. The third dataset was specific to tropical cyclones (including Dorian), matching their imagery with CloudSat overpass data so the team had a way to evaluate model performance.
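To illustrate the temporal half of the image-profile pairing described above, the following is a minimal sketch of matching a CloudSat overpass time to the nearest geostationary frame. It is not the team's pipeline: the function name, the timestamp representation (seconds) and the five-minute tolerance are assumptions made for illustration, and spatial alignment is not covered.

```python
from bisect import bisect_left
from typing import List, Optional


def nearest_frame(
    frame_times_s: List[float],
    overpass_time_s: float,
    max_gap_s: float,
) -> Optional[int]:
    """Return the index of the geostationary frame closest in time to the
    overpass, or None if the closest frame is more than max_gap_s away.
    frame_times_s must be sorted in ascending order."""
    if not frame_times_s:
        return None
    pos = bisect_left(frame_times_s, overpass_time_s)
    # Only the neighbours either side of the insertion point can be closest.
    candidates = [i for i in (pos - 1, pos) if 0 <= i < len(frame_times_s)]
    best = min(candidates, key=lambda i: abs(frame_times_s[i] - overpass_time_s))
    if abs(frame_times_s[best] - overpass_time_s) > max_gap_s:
        return None
    return best


# Hypothetical frames every 10 minutes (600 s) and an assumed 5-minute tolerance.
frames = [0.0, 600.0, 1200.0, 1800.0]
matched = nearest_frame(frames, overpass_time_s=1000.0, max_gap_s=300.0)
unmatched = nearest_frame(frames, overpass_time_s=5000.0, max_gap_s=300.0)
```

Here the overpass at 1,000 s pairs with the frame at 1,200 s, while the overpass at 5,000 s has no frame within tolerance and is dropped.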

For self-supervised pre-training the team compared a standard vision transformer (ViT) masked autoencoder (SatMAE) with a Swin transformer-based masked autoencoder (SWinSatMAE). The Swin transformer backbone should offer two main advantages over a ViT, hierarchical feature extraction and computational efficiency; however, it can add unwanted artefacts due to its smaller attention window. Both models included CloudSat spatial and temporal metadata, and success was evaluated by masking 50-75% of the input imagery and tasking the model with reconstructing the missing image information. Each model was run for 50 and 100 epochs and all were compared to a baseline model (U-Net) resulting from a previous study carried out in 2020.
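The masking step used in masked autoencoder pre-training can be sketched as follows. This is a generic illustration of random patch masking, not the SatMAE or SWinSatMAE code: the function name and the 16-pixel patch size are assumptions, and only the 1,024-pixel image size and the 75% ratio come from the text above.

```python
import random
from typing import List, Tuple


def random_patch_mask(
    num_patches: int, mask_ratio: float, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Split patch indices into (visible, masked) lists at random.
    The encoder would see only the visible patches; the decoder would be
    trained to reconstruct the masked ones."""
    if not 0.0 <= mask_ratio <= 1.0:
        raise ValueError("mask_ratio must be between 0 and 1")
    rng = random.Random(seed)
    order = list(range(num_patches))
    rng.shuffle(order)
    num_masked = int(round(num_patches * mask_ratio))
    masked = sorted(order[:num_masked])
    visible = sorted(order[num_masked:])
    return visible, masked


# A 1,024 x 1,024 image cut into 16 x 16 pixel patches (patch size assumed).
IMAGE_SIZE = 1024
PATCH_SIZE = 16
patches_per_side = IMAGE_SIZE // PATCH_SIZE
total_patches = patches_per_side * patches_per_side

visible_idx, masked_idx = random_patch_mask(total_patches, mask_ratio=0.75)
```

Under these assumptions the image yields 4,096 patches, of which 3,072 are hidden and 1,024 remain visible to the encoder.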

AI model development and training were carried out on a Scan Cloud GPU-accelerated server and Google Cloud Platform GPU instances. Experiment tracking, parameter logging and visualisation were conducted using the Weights & Biases (W&B) software platform.

Project Results

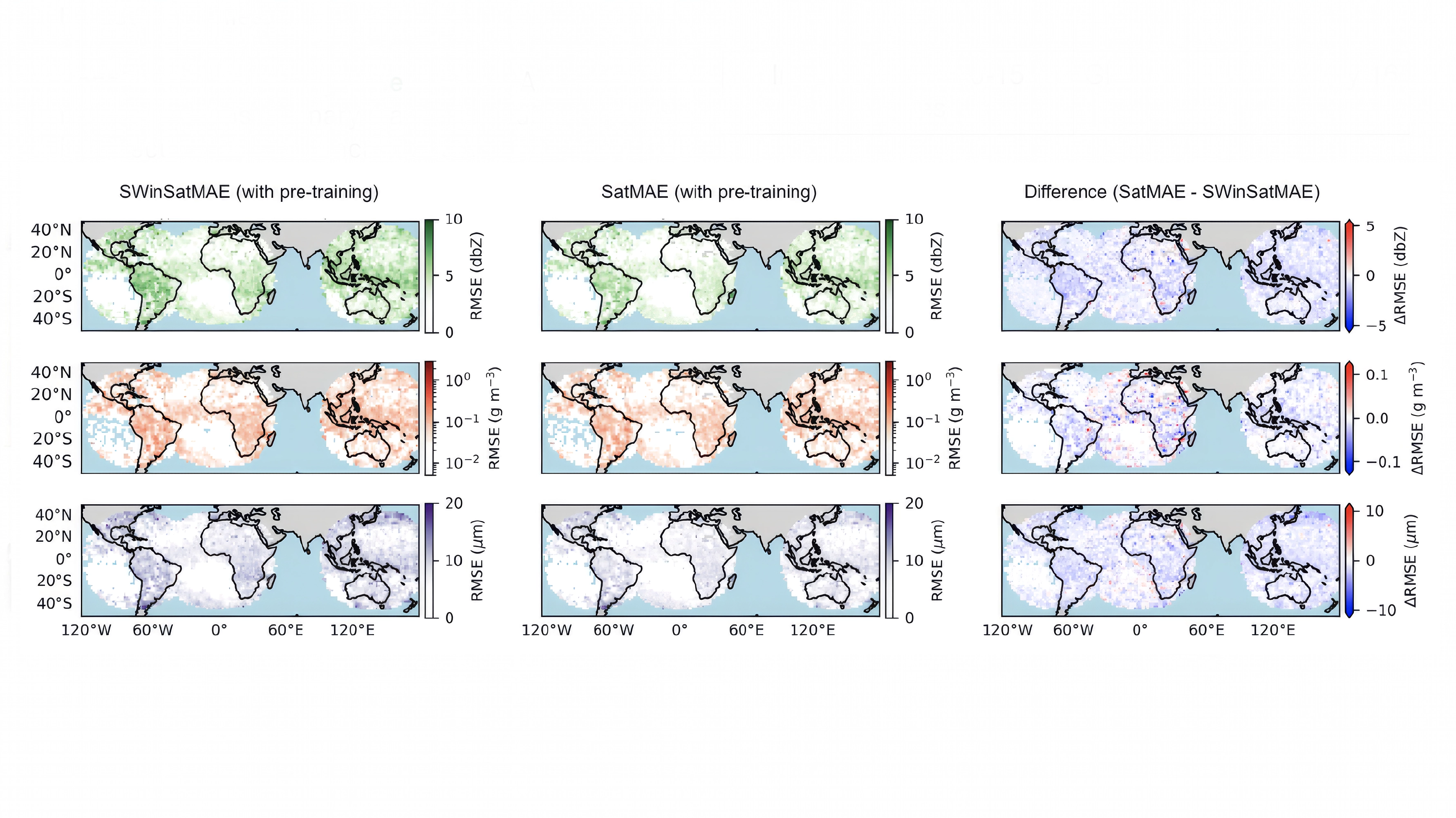

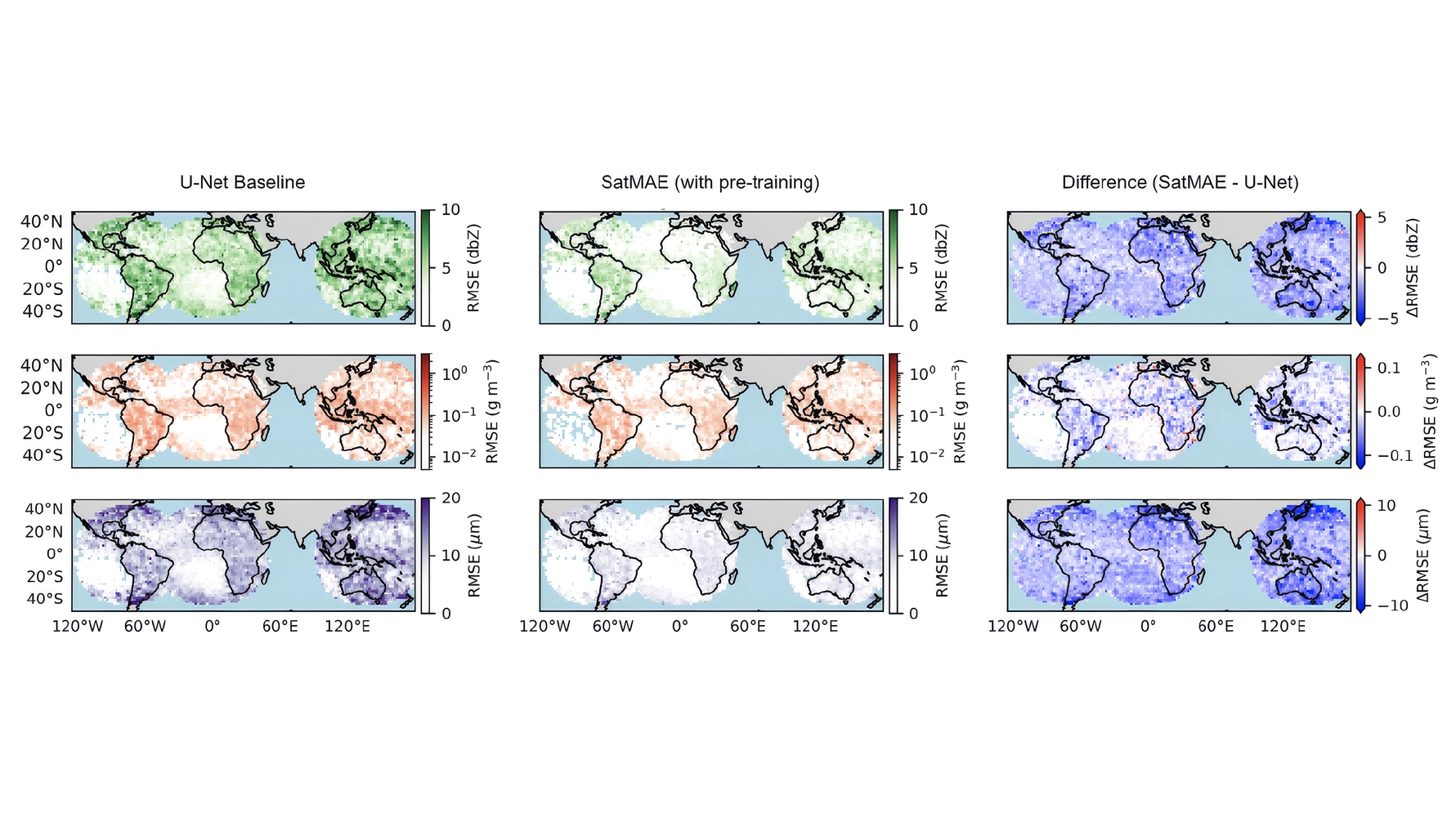

The team found that the SatMAE model without metadata performed better than the SWinSatMAE model, especially after fine-tuning for 100 epochs, and hypothesised that the smaller attention window of the Swin transformer was not sufficient to capture long-range correlations. The increased accuracy of the ViT-based SatMAE model proved worthwhile even considering the longer training times required (50 epochs: SWinSatMAE 7 hours, SatMAE 1 day; 100 epochs: SWinSatMAE 4 days, SatMAE 10 days).

The diagram below shows the spatial distributions of radar reflectivity (top), ice water content (middle) and droplet radius (bottom) predictions for the SWinSatMAE model (left) and the SatMAE model (middle), with the difference between them (right).

The best performing model, SatMAE (middle), is then shown against the baseline U-Net model (left) in the diagram below, indicating far superior performance.

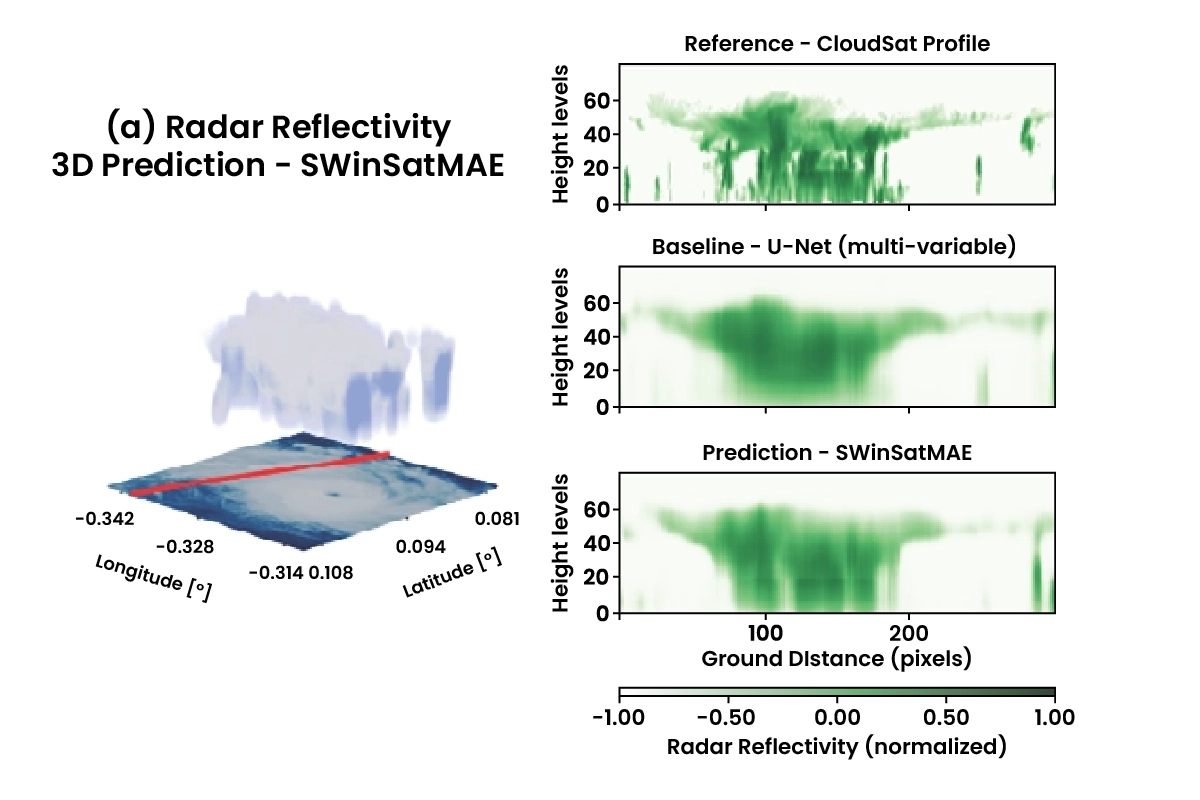

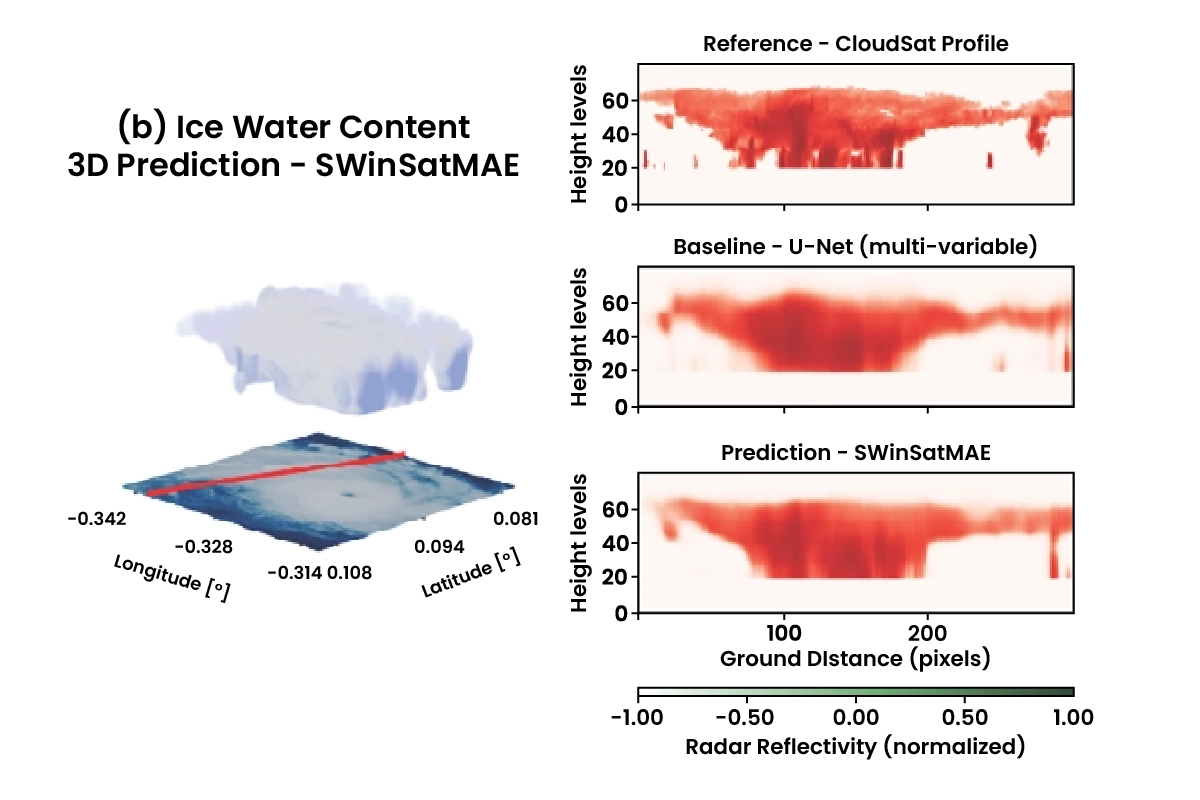

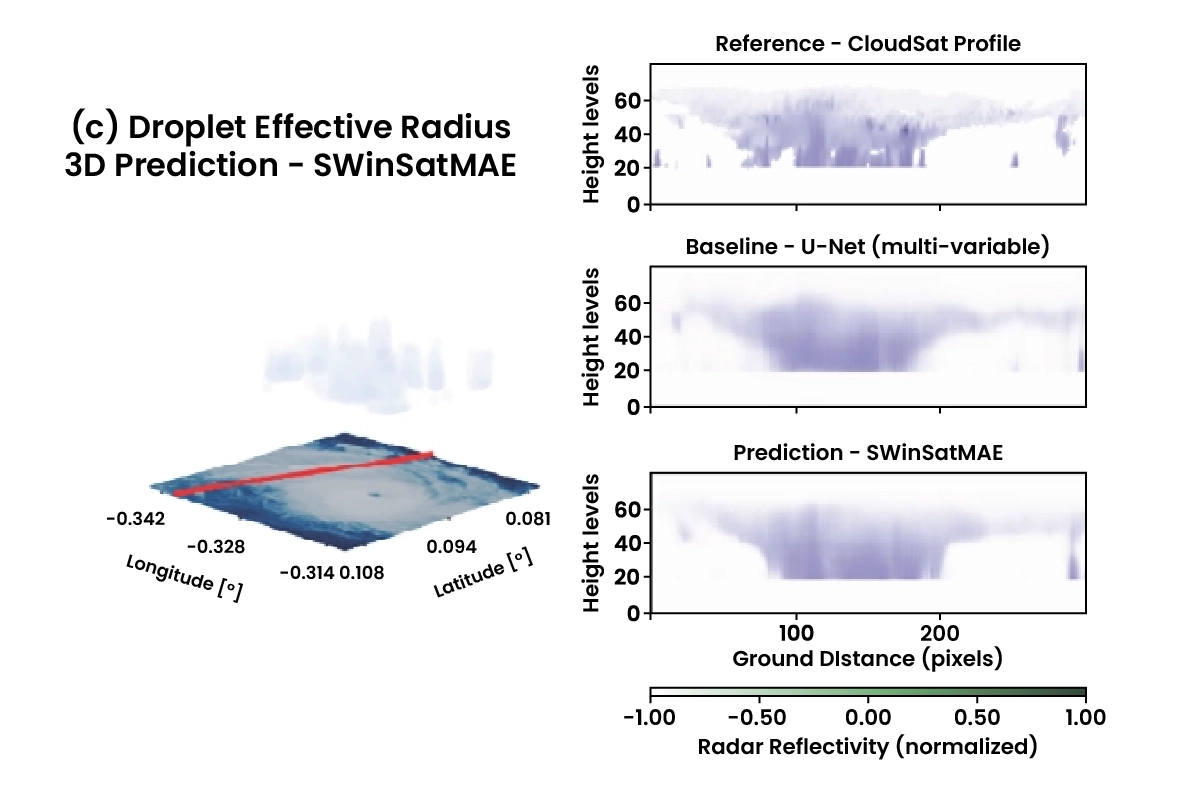

Using these results, the team considered the case of Hurricane Dorian. It formed in the North Atlantic in August 2019 and was forecast to remain weak as it tracked towards the Caribbean; however, it underwent rapid intensification over a few days, making landfall in the Bahamas as a Category 5 storm. It caused an estimated $4.3 billion in damage and resulted in 67 fatalities. There were only two CloudSat overpasses of Dorian before it intensified, leading forecasters to underestimate the development of the storm. The team's model showed that continuous monitoring using geostationary satellite data could cover the gaps in CloudSat's coverage. The diagram below shows 2D and 3D reconstructions of Hurricane Dorian's (a) radar reflectivity, (b) ice water content and (c) effective radius by the SWinSatMAE model. The geostationary image from GOES channel 7 is shown under each generated 3D render, with the location of the CloudSat track marked in red.

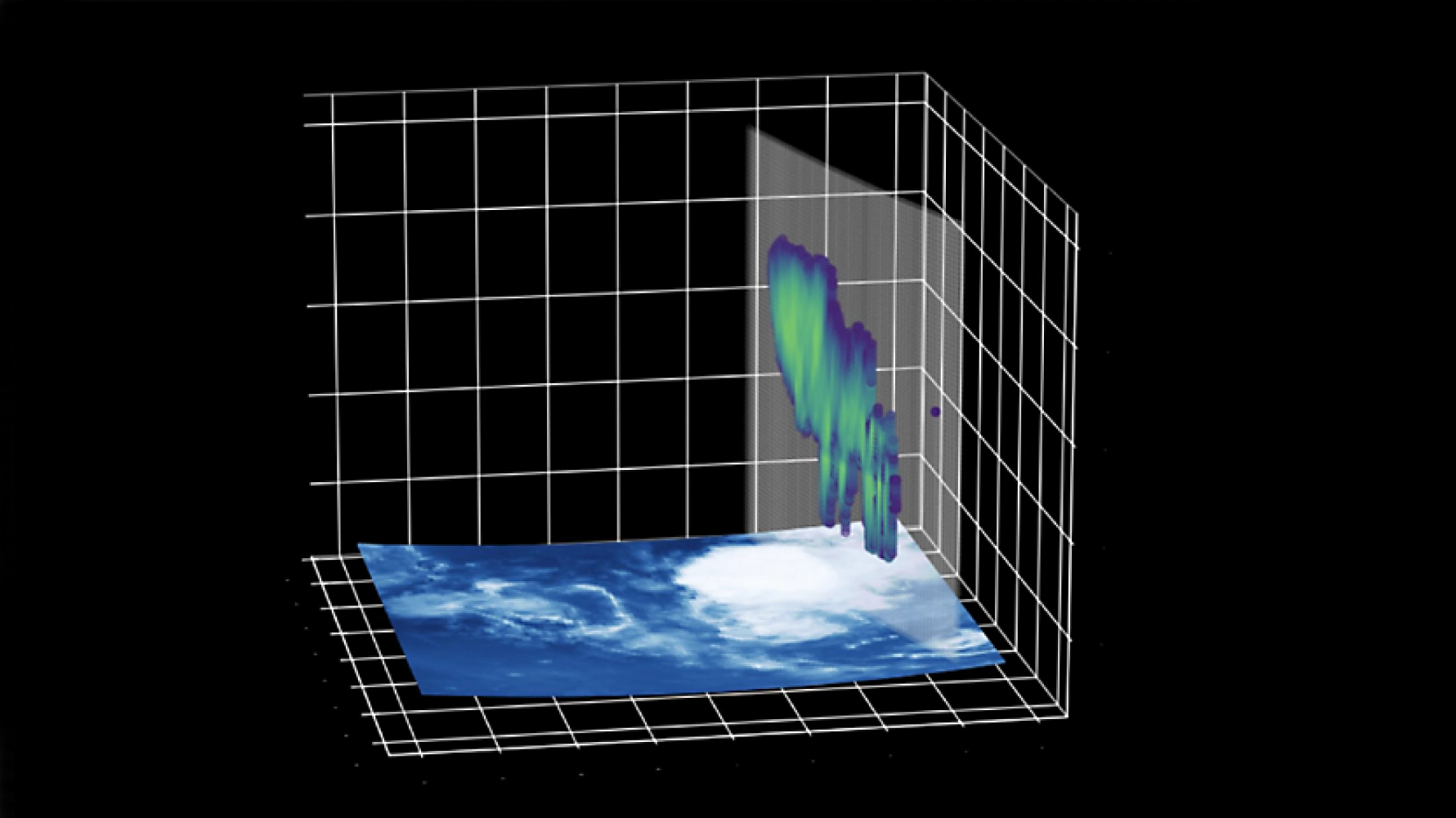

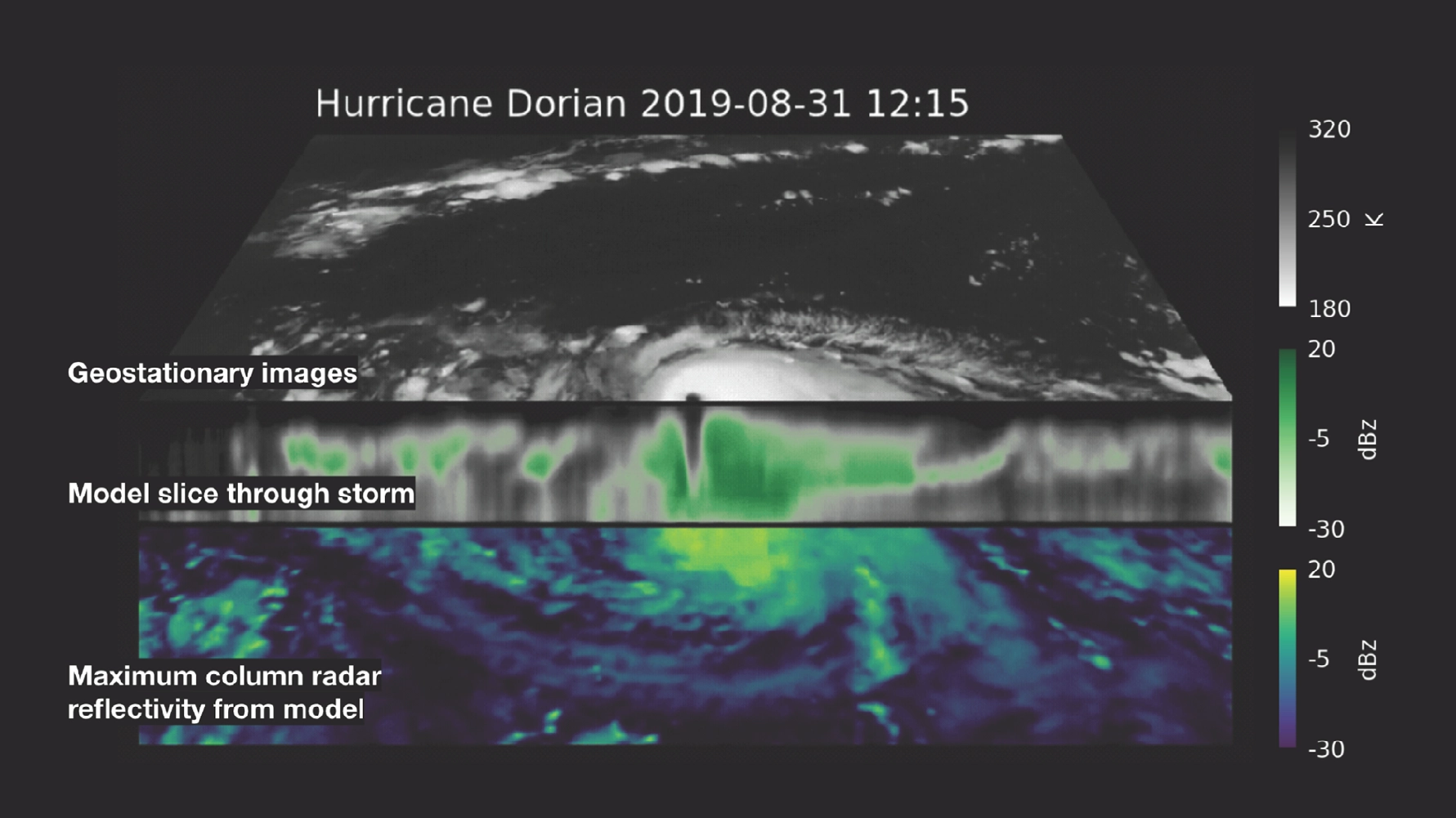

Reconstructions from this data were then used to make a 3D prediction of the hurricane at its peak intensity, shown below as a slice through the storm centre.

All the reconstruction data compared favourably with the CloudSat and U-Net retrievals from the time.

Conclusion and Next Steps

The ESL 2025 team showed the potential of providing continuous 3D modelling of cloud patterns in extreme climate events. They showed that data from multiple geostationary satellites can be combined to improve predictions, with a high degree of generalisation that could be employed for new satellites and their data. During the research cycle, in support of response efforts, the pipeline was triggered to create a near-real-time 3D reconstruction of Hurricane Melissa.

In the future, the resultant 3D models can be enhanced with data from the MTG (MeteoSat Third Generation) Flexible Combined Imager (FCI) and EarthCARE, where data starts from 2024 and 2025 respectively. The development of a fully sensor-agnostic pipeline will not only allow for the inclusion of satellites unseen during training, which would expand the coverage of the model to fully global cloud monitoring, but also ensure the model is futureproof.

You can learn more about Earth Systems Lab 2025 research and this 3D Clouds for Climate Extremes project by reading the ESL 2025 RESULTS BOOKLET.

The Scan Partnership

Scan is a major supporter of ESL 2025 and FDL, building on its participation in the previous five years' events. As an NVIDIA Elite Solution Provider, Scan contributes multiple DGX supercomputers via Scan Cloud to facilitate much of the machine learning and deep learning development and training required during the research sprint period.

Project Wins

Successful demonstration of improved hurricane modelling using data from multiple geostationary satellites with high generalisation

Generation of three new AI-ready datasets that can be used in future areas of research

Access to dedicated DGX compute allowed for near-real-time 3D reconstruction of Hurricane Melissa on demand

James Parr

Founder, FDL / CEO, Trillium Technologies

"FDL has established an impressive success rate for applied AI research output at an exceptional pace. Research outcomes are regularly accepted to respected journals, presented at scientific conferences and have been deployed on NASA and ESA initiatives - and in space."

Glyn Merga

Head of Cloud Architecture, Scan

"We are proud to be continuing our work with FDL and NVIDIA to support the ESL 2025 event for the sixth year running. It is a huge privilege to be associated with such ground-breaking research efforts in light of the challenges we all face when it comes to life-changing events like climate change and extreme weather."

Speak to an expert

You've seen how Scan continues to help the Earth Systems Lab and FDL further their research into climate change and space. Contact our expert AI team to discuss your project requirements.

Phone: 01204 474210

Email: [email protected]