Earth Systems Lab (ESL) by FDL applies AI technologies to space science, pushing the frontiers of research and developing new tools to help solve some of the biggest challenges that humanity faces. These include understanding the effects of climate change, predicting space weather, improving disaster response and identifying meteorites that could hold the key to the history of the universe.

FDL is a public-private partnership with the European Space Agency (ESA) and Trillium Technologies. It works with commercial partners such as Scan, NVIDIA, Google Cloud, IBM and Airbus, amongst others, to provide the expertise and computing resources necessary for rapid experimentation and iteration in data-intensive areas.

Project Background

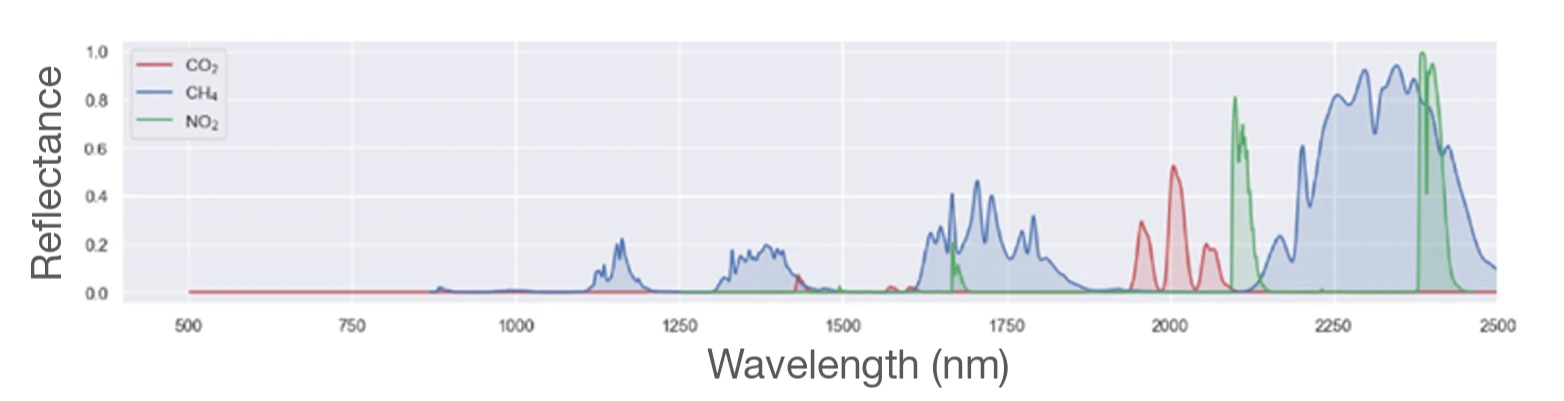

The accelerating rate of global warming, largely driven by greenhouse gases and other pollutants, demands a faster and more efficient way to detect and track these emissions. Methane, in particular, has a warming potential 84 times that of carbon dioxide over a 20-year period, and its short atmospheric lifetime makes rapid leak detection and remedial action critical, especially in the case of super emitters such as those in the oil and gas industry. Satellites can help identify methane plumes by observing the light reflected by the Earth's surface after it has passed through the atmosphere.

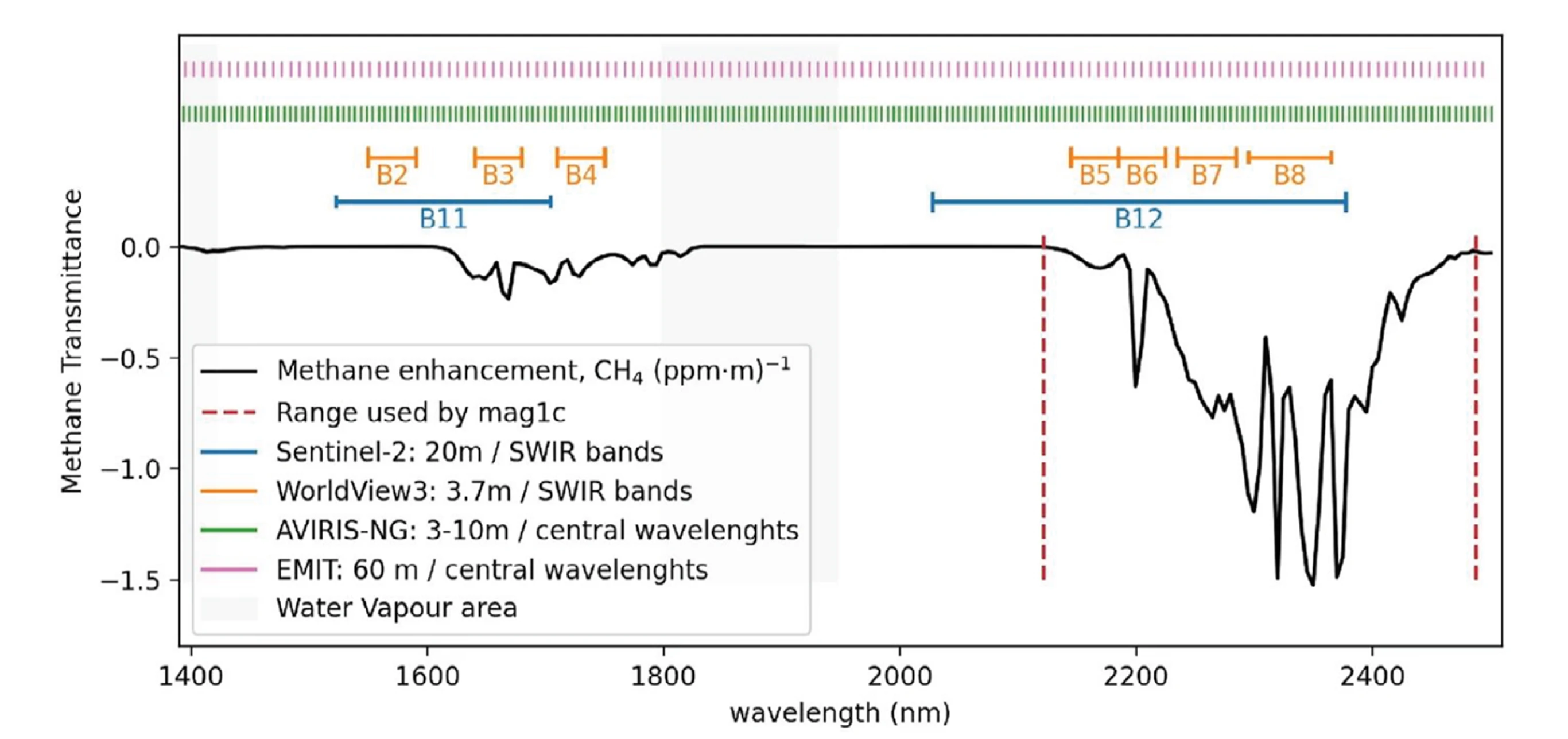

Hyperspectral (beyond the visible light spectrum) data is currently available from a number of sources: the ESA Sentinel-2 satellite, which captures 13 spectral bands primarily for forestry and land observation; the commercial WorldView-3 satellite, which combines an 8-band shortwave infrared sensor with a panchromatic camera collecting images at 31cm resolution across a 13km ground swath; and the NASA EMIT (Earth Surface Mineral Dust Source Investigation) sensor on the International Space Station, which captures 285 spectral bands to identify surface minerals and emissions. These are complemented by the NASA AVIRIS-NG (Airborne Visible InfraRed Imaging Spectrometer - Next Generation) project, which delivers sensitive 5nm spectroscopic imaging from a number of aircraft-based platforms.

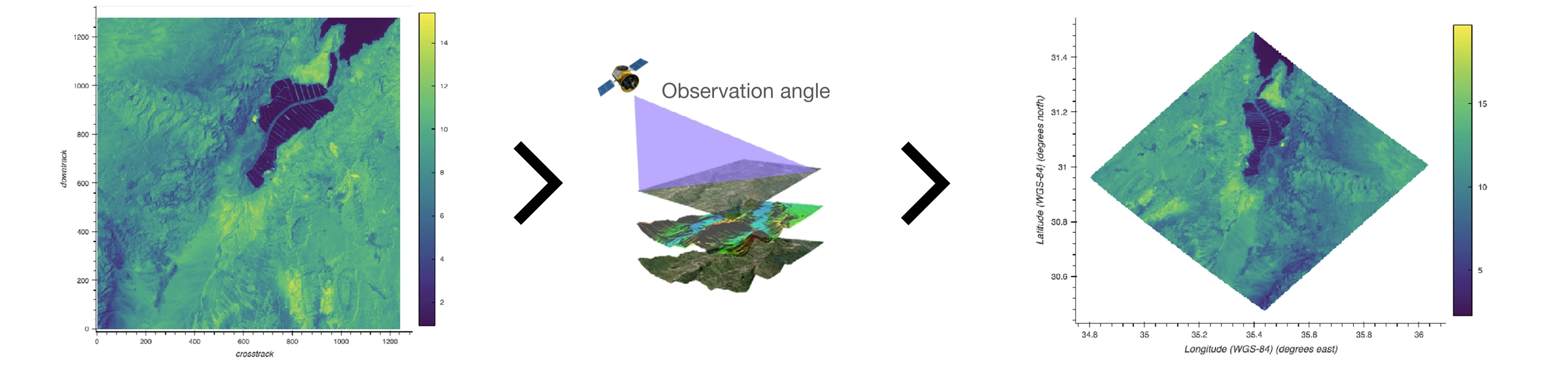

However, current methods involve transmitting large images back to ground stations for processing. The delay is compounded by the need for image processing techniques such as orthorectification, which corrects geometric distortions caused by sensor viewing angle, terrain variation and the Earth’s curvature.

Examples of EMIT images - unorthorectified (left) and orthorectified (right)

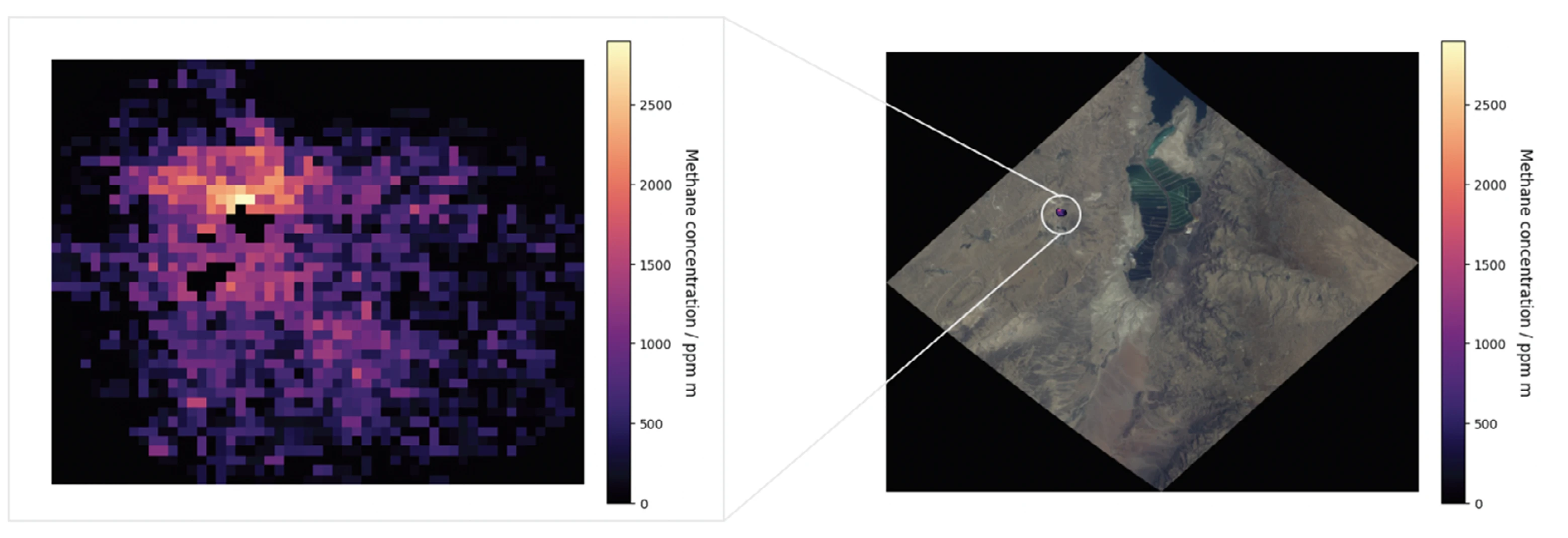

Once a spectral filter has been applied to these images to flag likely methane plumes, the resulting candidates are individually identified and annotated by scientists, who take into account factors including cloud and water contamination, background noise and ground topology. These two steps take approximately a week, and subsequent review by three peers adds further delay before the data is published.

Identified plume (left) and projection onto orthorectified image (right)

To effectively mitigate methane emissions, a solution is needed that bypasses these lengthy delays and provides near real-time data.

Project Approach

Although onboard machine learning (ML) techniques have been applied to satellites previously, they have relied on the previous best-in-class identification methods: the mag1c algorithm and the HyperSTARCOP model. These are useful for methane plume identification, but they only perform well on synthetic datasets and remain limited when applied to unseen real-world data.

To overcome the limitations of these methods, the team selected data from Sentinel-2, WorldView-3 and EMIT, alongside the AVIRIS-NG project. Spectral data from 86 bands in the ranges 1,572 – 1,699nm and 2,004 – 2,478nm was chosen to capture the methane absorption spectrum and the bands alongside it, together with visible RGB light. Including bands that are both sensitive and insensitive to methane makes the gas easier to distinguish from the background.
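As a rough illustration of the band selection described above, the sketch below picks out the bands whose centre wavelength falls inside the two quoted windows. The function name `select_band_indices` and the evenly spaced 285-band wavelength grid are assumptions made for the example, not the team's code or any sensor's real wavelength table, so the number of bands it selects will not necessarily match the 86 quoted.

```python
# Wavelength windows (nm) quoted in the text above.
METHANE_WINDOWS_NM = [(1572.0, 1699.0), (2004.0, 2478.0)]


def select_band_indices(wavelengths_nm, windows=METHANE_WINDOWS_NM):
    """Return indices of bands whose centre wavelength lies in any window."""
    return [
        i for i, w in enumerate(wavelengths_nm)
        if any(low <= w <= high for low, high in windows)
    ]


# Hypothetical band centres: 285 evenly spaced values from 380 to 2500 nm.
# A real sensor publishes its own wavelength table; this grid is illustrative.
wavelengths = [380.0 + i * (2500.0 - 380.0) / 284 for i in range(285)]
selected = select_band_indices(wavelengths)

# Keep only the selected bands of one pixel spectrum (dummy values here).
pixel_spectrum = [0.0] * len(wavelengths)
pixel_subset = [pixel_spectrum[i] for i in selected]
```

In practice the same index list would be applied along the band axis of every image tile, with the RGB bands appended separately.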

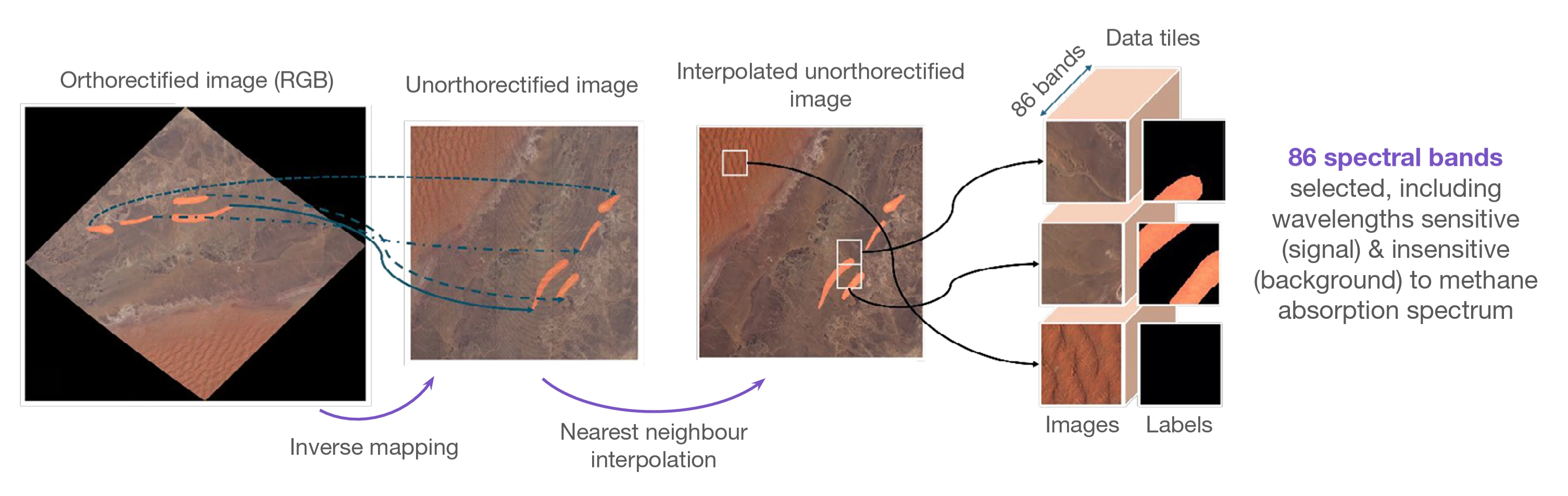

Traditionally, orthorectified data, for which data labels are available, would be used. However, the team also opted to generate novel unorthorectified data using an inverse mapping and interpolation method, which avoids unnecessary padding of images. In total, the orthorectified datasets contained 127,271 images, of which 3,265 contained methane plumes. The unorthorectified datasets contained 82,960 images, of which 2,595 contained methane plumes.
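The image counts above imply that plumes are rare in both datasets. The short calculation below works out the plume fraction from the quoted figures. The negative-to-positive ratio is a common heuristic for weighting the rare class during training and is shown only as an assumption; the article does not say how the team handled class imbalance.

```python
def plume_fraction(n_plume, n_total):
    """Fraction of images that contain a methane plume."""
    return n_plume / n_total


def negative_to_positive_ratio(n_plume, n_total):
    """Plume-free images per plume image (a common class-weight heuristic)."""
    return (n_total - n_plume) / n_plume


# Counts quoted in the text above.
ortho_fraction = plume_fraction(3265, 127271)      # roughly 2.6% of images
unortho_fraction = plume_fraction(2595, 82960)     # roughly 3.1% of images

ortho_ratio = negative_to_positive_ratio(3265, 127271)
unortho_ratio = negative_to_positive_ratio(2595, 82960)

print(f"orthorectified:   {ortho_fraction:.2%} plume images")
print(f"unorthorectified: {unortho_fraction:.2%} plume images")
```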

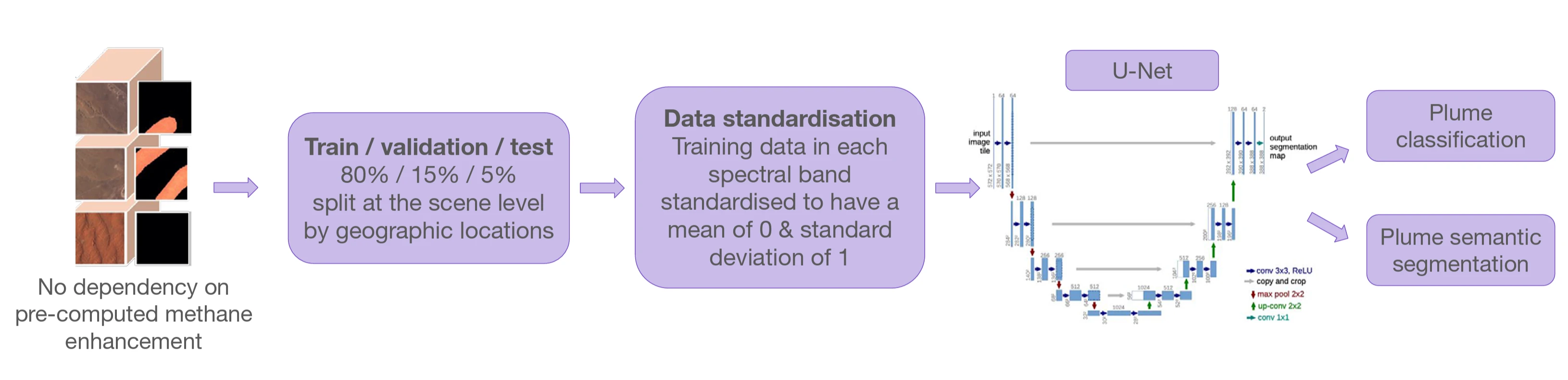

To assess performance on these two ML datasets, 80% of the image data from each satellite was allocated to a training set, with the remaining 20% divided into validation (15%) and testing (5%) subsets. The splitting was performed at scene level, so that all images from a given scene were assigned exclusively to one subset, avoiding data leakage. Additionally, the splitting was geographically stratified to guarantee spatial coverage in both sets, addressing emissions in different regions and on differing dates. A U-Net model was particularly suited to such semantic segmentation tasks due to its encoder-decoder architecture, which enables precise pixel-level localisation with high accuracy, whilst preserving contextual information.
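A scene-level split of the kind described above can be sketched as follows. This is a minimal example under stated assumptions: the function `split_by_scene`, the tile and scene identifiers, and the fixed random seed are all hypothetical, the fractions are applied to the number of scenes rather than the number of images, and the geographic stratification mentioned in the text is omitted for brevity.

```python
import random


def split_by_scene(tile_to_scene, fractions=(0.80, 0.15, 0.05), seed=0):
    """Assign whole scenes to train/val/test so no scene spans two subsets."""
    scenes = sorted(set(tile_to_scene.values()))
    random.Random(seed).shuffle(scenes)

    n_train = round(fractions[0] * len(scenes))
    n_val = round(fractions[1] * len(scenes))

    # Map every scene to exactly one subset.
    scene_subset = {}
    for i, scene in enumerate(scenes):
        if i < n_train:
            scene_subset[scene] = "train"
        elif i < n_train + n_val:
            scene_subset[scene] = "val"
        else:
            scene_subset[scene] = "test"

    # Tiles inherit the subset of the scene they were cut from.
    splits = {"train": [], "val": [], "test": []}
    for tile, scene in tile_to_scene.items():
        splits[scene_subset[scene]].append(tile)
    return splits


# Hypothetical data: 100 scenes, each cut into 4 tiles.
tile_to_scene = {
    f"scene{s:03d}_tile{t}": f"scene{s:03d}"
    for s in range(100) for t in range(4)
}
splits = split_by_scene(tile_to_scene)
```

Splitting on scene identifiers rather than on individual tiles is what prevents near-identical neighbouring tiles from appearing in both the training and evaluation subsets.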

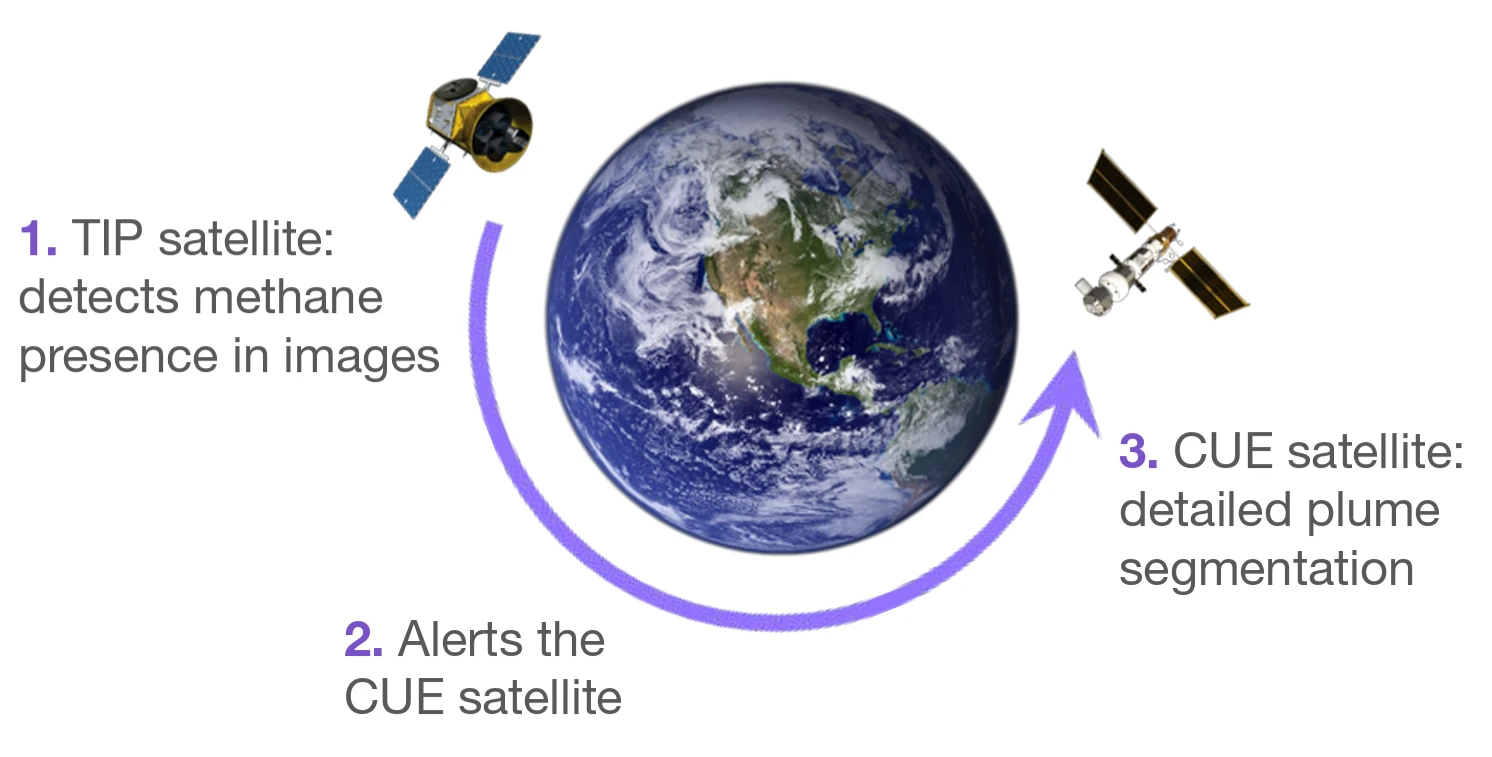

The team then proposed a novel dual-satellite system in which both satellites share the same orbit and the same spatial resolution. The Tip satellite, equipped with a vision transformer (ViT) model, is designed for fast and accurate plume classification, scanning broad areas and identifying specific image tiles that contain potential methane plumes. Upon detection, it sends an alert to the Cue satellite, featuring a more sophisticated U-Net architecture, which steers its camera to the target location to perform a detailed plume segmentation and predict the concentration of the methane.
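The tip-and-cue hand-off described above can be pictured as a simple control flow. The sketch below is illustrative only: the `Tile` and `Alert` types, the 0.5 alert threshold, the coordinates and the toy classifier, camera and segmenter are all hypothetical stand-ins for the ViT model, the Cue satellite's imager and the U-Net model, none of which are reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass
class Tile:
    tile_id: str
    lat: float
    lon: float
    pixels: List[float]  # flattened stand-in for a hyperspectral tile


@dataclass
class Alert:
    tile_id: str
    lat: float
    lon: float
    score: float


def tip_scan(tiles: Iterable[Tile],
             classify: Callable[[Tile], float],
             threshold: float = 0.5) -> List[Alert]:
    """Tip side: score every tile and raise an alert for likely plumes."""
    alerts = []
    for tile in tiles:
        score = classify(tile)
        if score >= threshold:
            alerts.append(Alert(tile.tile_id, tile.lat, tile.lon, score))
    return alerts


def cue_follow_up(alerts: Iterable[Alert],
                  acquire: Callable[[float, float], Tile],
                  segment: Callable[[Tile], List[bool]]) -> Dict[str, List[bool]]:
    """Cue side: re-image each alerted location and segment the plume."""
    return {a.tile_id: segment(acquire(a.lat, a.lon)) for a in alerts}


# Toy stand-ins for the real models and camera, for illustration only.
def toy_classifier(tile: Tile) -> float:
    return max(tile.pixels)


def toy_acquire(lat: float, lon: float) -> Tile:
    return Tile("cue", lat, lon, [0.1, 0.9, 0.7, 0.2])


def toy_segmenter(tile: Tile) -> List[bool]:
    return [p > 0.5 for p in tile.pixels]


tiles = [Tile("t0", 31.9, -102.1, [0.1, 0.2]),
         Tile("t1", 32.0, -102.0, [0.3, 0.8])]
alerts = tip_scan(tiles, toy_classifier)
masks = cue_follow_up(alerts, toy_acquire, toy_segmenter)
```

Only the small alert records cross between the two satellites, which is what keeps the heavy segmentation work confined to the tiles that are likely to contain a plume.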

This dual-satellite approach would eliminate the need to transmit large image files back to a ground station for post-processing and peer review, significantly reducing the time from detection to data delivery.

AI model development and training were carried out on a Scan Cloud GPU-accelerated server and Google Cloud Platform GPU instances. Experiments simulating onboard analytics were run on an AMD PYNQ-Z2 FPGA development board, as this is typical of the technology installed on active satellites, due to its radiation tolerance.

Project Results

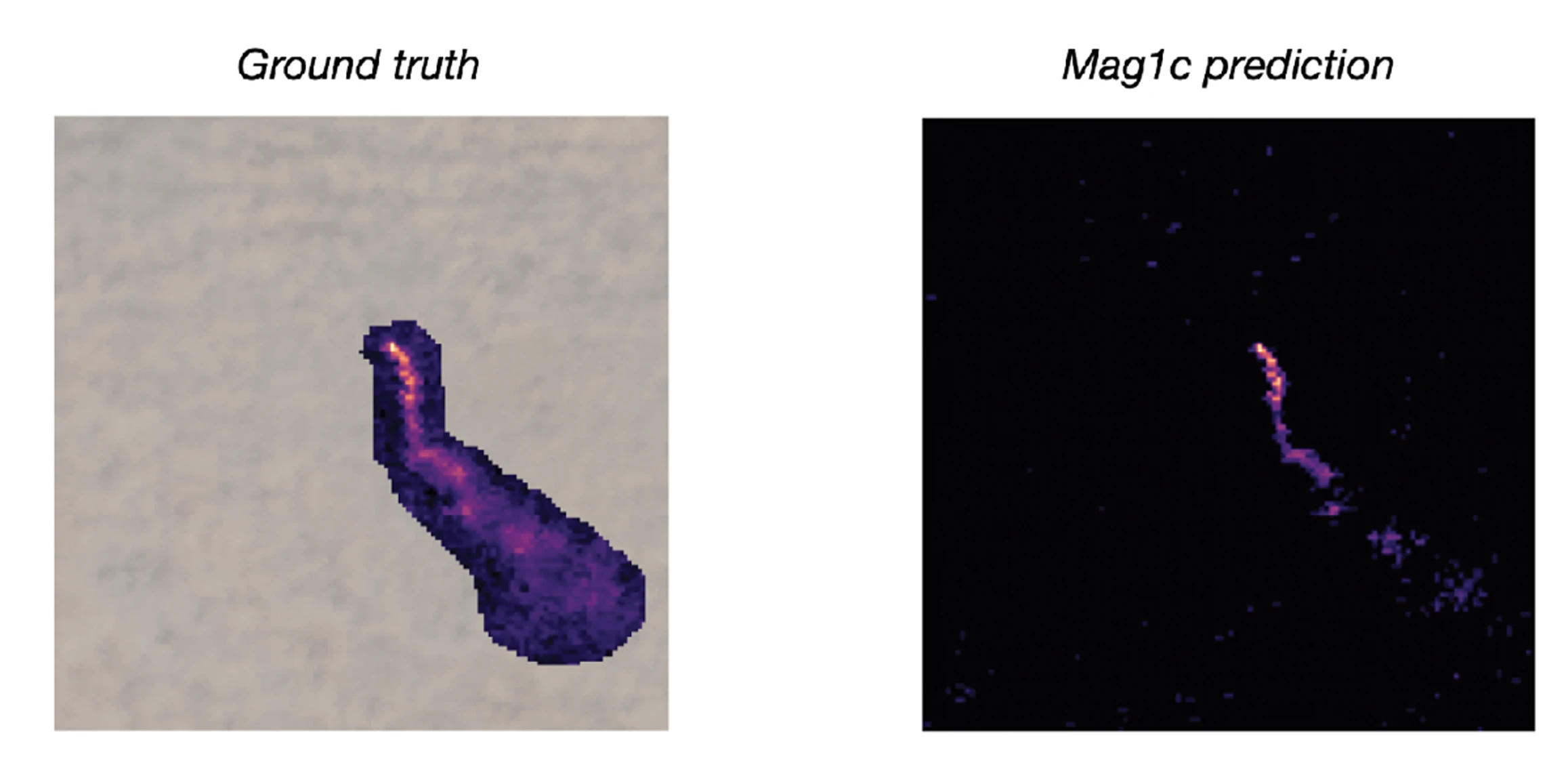

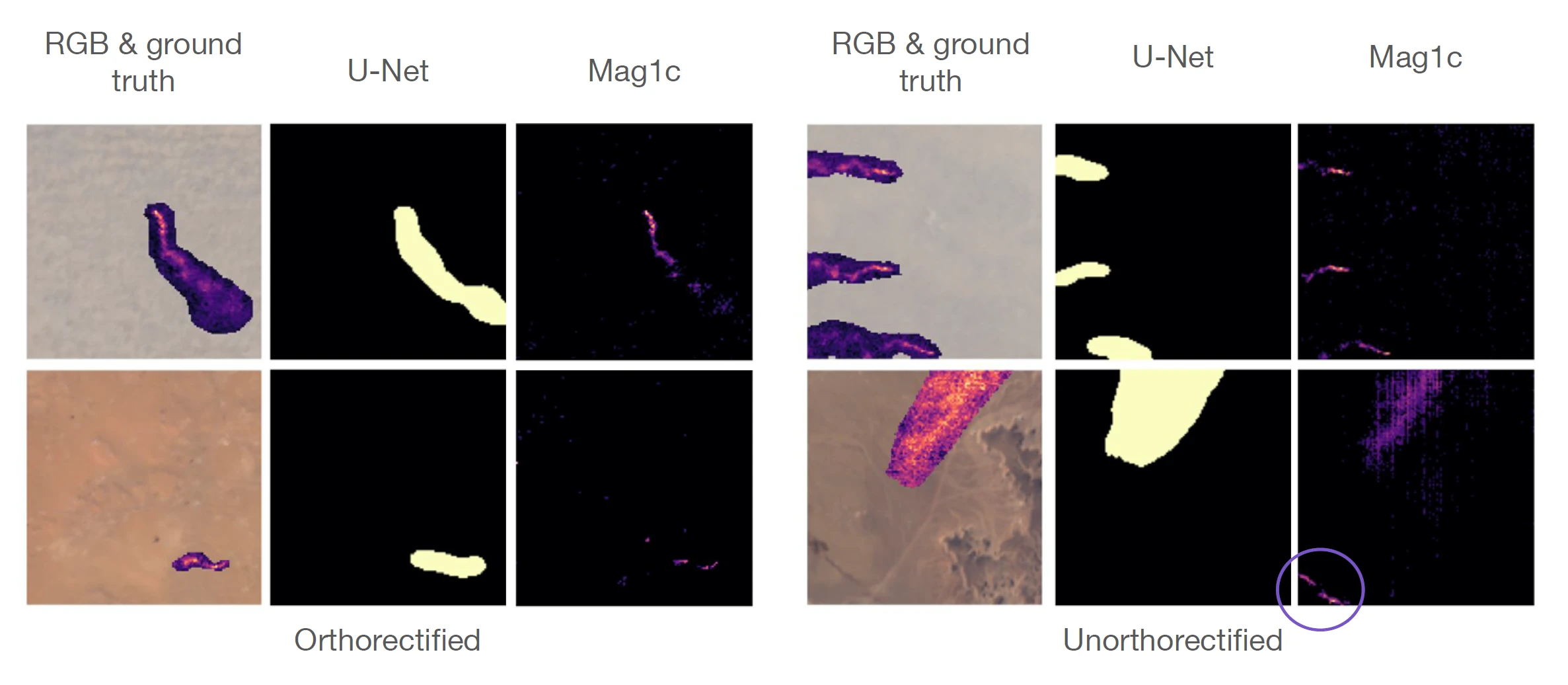

The models demonstrated promising results. The compressed ViT model onboard the Tip satellite was able to detect plumes in just 5-10 milliseconds, showing that the proposed system could quickly alert the Cue satellite to perform more detailed data collection. Additionally, the performance of the U-Net models trained on the orthorectified and unorthorectified datasets was consistent, although image classification was much better with the orthorectified dataset. This is potentially attributable to the different spatial alignment and image quality. Both significantly outperform the mag1c model baseline, as illustrated in the images above.

The U-Net models outperformed mag1c by 288% on all plumes and by 536% on strong plumes, defined as those with a maximum methane enhancement value of ≥ 900 ppm m (parts per million metre). Furthermore, mag1c frequently misclassifies reflective land features as methane plumes, leading to false positives (circled above).
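To make these figures concrete, the sketch below encodes the strong-plume threshold quoted above and converts the percentage gains into multipliers. It assumes that "outperformed by X%" means a relative gain over the mag1c baseline score; the article does not name the underlying metric, and the function names and sample enhancement values are hypothetical.

```python
STRONG_PLUME_PPM_M = 900.0  # threshold quoted in the text above


def is_strong_plume(enhancement_ppm_m):
    """True if the maximum methane enhancement reaches the threshold."""
    return max(enhancement_ppm_m) >= STRONG_PLUME_PPM_M


def improvement_factor(percent_gain):
    """Convert 'outperforms by X%' into a multiplier on the baseline score."""
    return 1.0 + percent_gain / 100.0


# Under the relative-gain assumption, the quoted gains correspond to
# roughly 3.88x (all plumes) and 6.36x (strong plumes) the baseline score.
all_plumes_factor = improvement_factor(288)
strong_plumes_factor = improvement_factor(536)

# Hypothetical per-pixel enhancement values for two detected plumes.
weak_example = is_strong_plume([120.0, 450.0, 899.9])
strong_example = is_strong_plume([120.0, 950.0, 300.0])
```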

Conclusions

This research provides a framework for deploying AI models directly on spacecraft, a task that requires careful consideration of limited computational, memory and energy resources. The team has developed and released two ML-ready datasets, one orthorectified and one unorthorectified, to accelerate future research in this area. The latter is particularly well suited to onboard processing, as it eliminates a time-consuming correction step.

The proposed system would be useful to end-users such as the UN International Methane Emissions Observatory. Furthermore, its accuracy could be enhanced with more Earth observation data, including that from the upcoming ESA CHIME (Copernicus Hyperspectral Imaging Mission for the Environment) satellite which, when launched in 2028, will carry a unique visible to shortwave infrared spectrometer.

You can learn more about Earth Systems Lab 2025 research and the STARCOP 2.0: Atmospheric Anomaly Detection Onboard project by reading the ESL 2025 Results Booklet, where a summary, poster and full technical memorandum can be viewed and downloaded.

The Scan Partnership

Scan is a major supporter of ESL 2025 and FDL, building on its participation in the previous five years' events. As an NVIDIA Elite Solution Provider, Scan contributes multiple DGX supercomputers via Scan Cloud to facilitate much of the machine learning and deep learning development and training required during the research sprint period.

Project Wins

Successful demonstration of improved methane plume detection using multiple satellite datasets

Generation of two new ML-ready datasets that can be used onboard in future work to reduce detection delays

Successful prototyping of onboard capability and edge-computing deployments

James Parr

Founder, FDL / CEO, Trillium Technologies

"FDL has established an impressive success rate for applied AI research output at an exceptional pace. Research outcomes are regularly accepted to respected journals, presented at scientific conferences and have been deployed on NASA and ESA initiatives - and in space."

Glyn Merga

Head of Cloud Architecture, Scan

"We are proud to be continuing our work with FDL and NVIDIA to support the ESL 2025 event for the sixth year running. It is a huge privilege to be associated with such ground-breaking research efforts in light of the challenges we all face when it comes to life-changing events like climate change and extreme weather."

Speak to an expert

You’ve seen how Scan continues to help the Earth Systems Lab and FDL further their research into climate change and space. Contact our expert AI team to discuss your project requirements.

Phone: 01204 474210

Email: [email protected]