NVIDIA HIGHLIGHTS FROM GTC 2026

NVIDIA kicked off this year's GTC in San Jose with a slew of new hardware and software announcements. Here are the essentials to bring you up to date with the latest from the leader in accelerated computing.

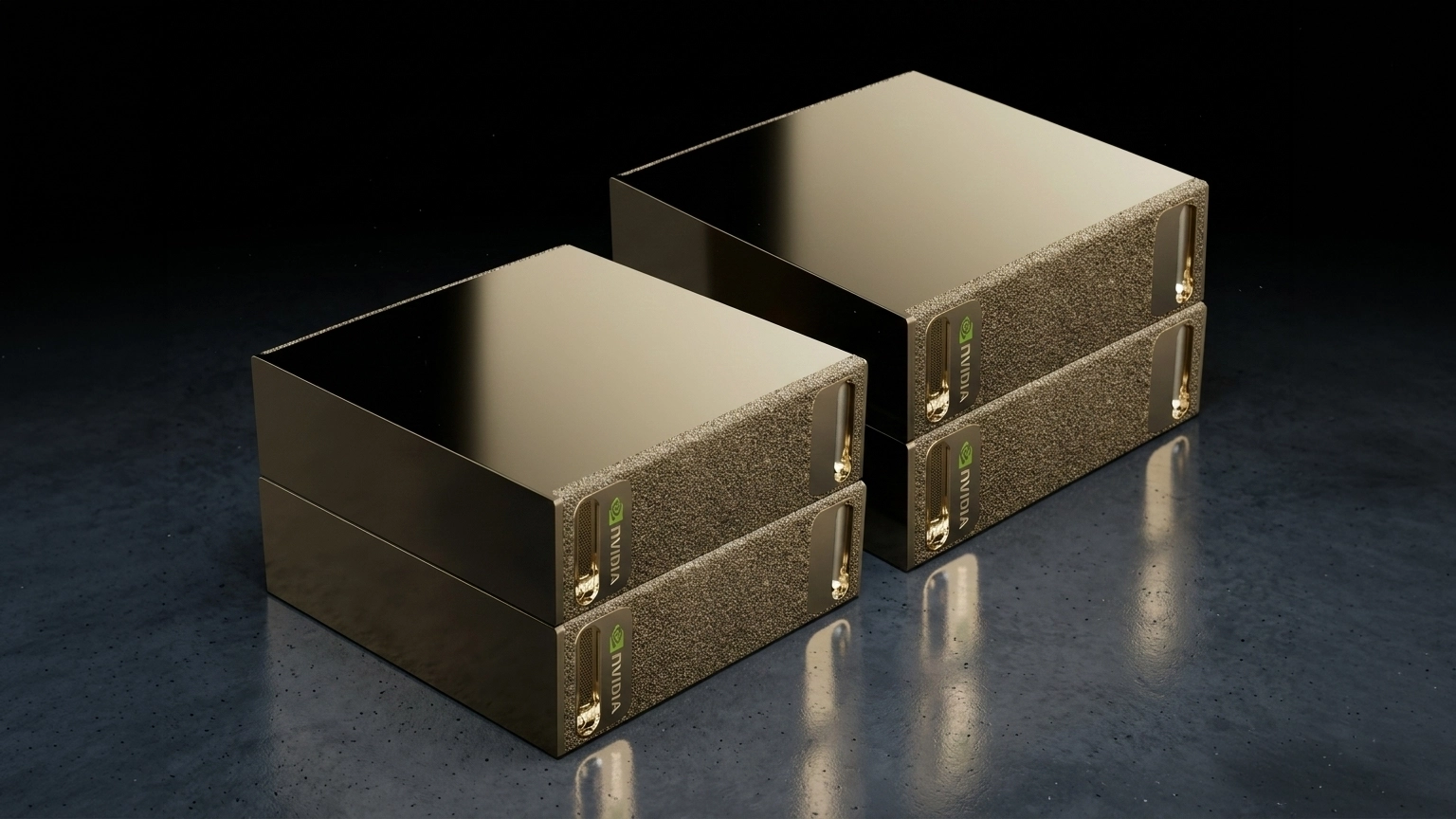

DGX Station GB300

First teased at GTC 2025, the new-generation DGX Station GB300 will ship in the next few months, NVIDIA announced. Designed for power users and teams of up to seven people, it handles even more demanding AI and data science projects than the DGX Spark, bridging the gap between the Spark and datacentre-scale DGX appliances.

Powered by an SoC that combines a Blackwell Ultra GPU and an Arm CPU, the DGX Station GB300 delivers an unprecedented 20 PetaFLOPS of AI performance and has 748GB of coherent memory for working with huge datasets.

Customisable OEM versions of the DGX Station GB300 from brands such as Asus will be available from Scan shortly.

DLSS 5 Game Rendering

NVIDIA also revealed a new generation of its AI-enhanced rendering technology for games. The fifth iteration of DLSS uses an AI model trained to understand complex elements such as faces, hair, fabric and skin, as well as environmental lighting conditions, to generate new frames based on the game's original art assets.

While DLSS 5 isn't due for release until autumn 2026, triple-A game publishers and studios have already announced support for the new tech in their titles, including Bethesda, CAPCOM, Hotta Studio, NetEase, NCSOFT, S-GAME, Tencent, Ubisoft and Warner Bros.

In the meantime, check out the current range of NVIDIA GeForce RTX 50 Series GPUs, which are best placed to run DLSS 5 when it arrives.

Four-Way DGX Spark

Since its launch last autumn, the diminutive DGX Spark has proven extremely popular, offering an affordable entry point for developing AI models thanks to its powerful GB10 SoC and 128GB of memory.

At GTC, NVIDIA announced that you can link up to four Sparks together in a cluster, effectively creating a single 512GB memory pool for AI models of over 800 billion parameters. It's another great reason to start your AI journey with the DGX Spark.
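As a rough sanity check on the cluster figures, the sketch below multiplies out the pooled memory and estimates the weight footprint of an 800-billion-parameter model. It assumes 4-bit (FP4) weights at 0.5 bytes per parameter and ignores KV cache, activations and runtime overhead; the announcement does not state which precision NVIDIA's figure assumes, and the helper names are purely illustrative.

```python
# Back-of-the-envelope check of the four-way DGX Spark memory pool.
# Assumption (not from NVIDIA): weights stored as 4-bit FP4, i.e. 0.5 bytes
# per parameter. KV cache, activations and overhead are ignored.

SPARK_MEMORY_GB = 128       # memory per DGX Spark, as stated in the article
NUM_SPARKS = 4              # maximum cluster size announced at GTC
PARAMS = 800e9              # 800 billion parameters
BYTES_PER_PARAM_FP4 = 0.5   # hypothetical precision choice


def pooled_memory_gb(per_node_gb: int, nodes: int) -> int:
    """Total memory across all nodes in the cluster."""
    return per_node_gb * nodes


def weight_footprint_gb(params: float, bytes_per_param: float) -> float:
    """Memory needed for the model weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


pool = pooled_memory_gb(SPARK_MEMORY_GB, NUM_SPARKS)
weights = weight_footprint_gb(PARAMS, BYTES_PER_PARAM_FP4)

print(f"Pooled memory: {pool} GB")
print(f"FP4 weights for 800B parameters: {weights:.0f} GB")
print(f"Headroom for KV cache and activations: {pool - weights:.0f} GB")
```

At 16-bit precision the same model would need roughly 1,600GB for its weights alone, which is why low-precision formats matter when fitting very large models into a memory pool of this size.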

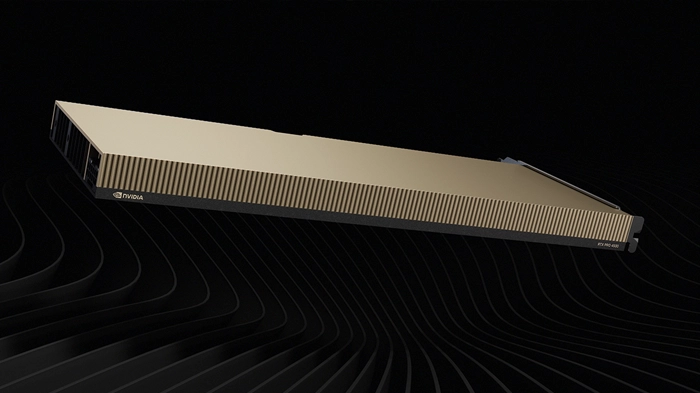

RTX PRO 4500 Blackwell Server Edition

This upcoming card expands NVIDIA's range of passively cooled server GPUs for AI and visualisation workloads.

The RTX PRO 4500 Blackwell packs in 10,496 CUDA cores, 328 fifth-generation Tensor Cores and 82 fourth-generation RT Cores, roughly half as many as the RTX PRO 6000 Blackwell, and is equipped with 32GB of ultra-reliable GDDR7 ECC memory. With a TDP of 165W and a single-slot design, the new card enables some very high-density configurations.

The new GPU will be available shortly in EGX, MGX and RTX PRO servers from Scan.

BlueField-4 STX Storage Architecture

STX is a new storage architecture that builds on the Inference Context Memory Storage Platform announced at CES earlier this year.

Designed specifically for the context-centric nature of agentic AI models, the new STX storage architecture boosts the token rate by as much as 5x and delivers 4x greater energy efficiency compared with CPU-powered storage systems.

STX-based storage systems from Scan partners such as DDN, Dell, NetApp, VAST and WEKA will be available in the second half of 2026.

Agentic AI Vera CPU

NVIDIA also revealed more details about its forthcoming Vera CPU. Specially designed for agentic AI workloads, Vera features 88 Arm-based Olympus cores and is the first CPU to support FP8 precision.

It also uses NVIDIA NVLink-C2C to connect to next-generation Rubin GPUs, offering seven times the bandwidth of PCIe 6.

Vera CPUs will be at the heart of many next-generation AI appliances built on several MGX system designs, available from Scan later this year.

Groq 3 LPX LPUs

NVIDIA also used GTC to reveal that it'll be adding LPUs to its product line-up for the first time.

LPUs (Language Processing Units) have been developed by Groq (not to be confused with Grok) since 2016, and differ from GPUs in being designed specifically for AI inference. NVIDIA has licensed the core technology from Groq.

Next-generation systems built on Vera CPUs and Rubin GPUs will feature dedicated Groq 3 LPX LPUs to accelerate agentic AI inference, and will be available from Scan later this year.

NemoClaw plugin for OpenClaw

NemoClaw is a new software plugin for the popular AI agent OpenClaw.

NemoClaw lets you develop and run agents more securely, using policy-based privacy and security guardrails that give you control over how agents behave and handle data.

NemoClaw isn't just for enterprise AI on DGX systems; it's also compatible with GeForce RTX PCs and can run locally or in the cloud.

Download and try a demo version of NemoClaw.