Turnkey Solution Providers

Edge inferencing solutions bring the power of AI directly to where data is generated - whether that’s a manufacturing line, a retail store, a smart camera, or an autonomous robot. These environments require low-latency, local network solutions.

Our AI inferencing solutions cover a complete spectrum: from turnkey edge appliances for vision and sensor analytics, to compact yet powerful Jetson-based boards for robotics and automation, all supported by our software integration services and managed edge infrastructure solutions.

Whether you’re prototyping a custom solution or rolling out hundreds of devices across the country for integration with core services, our team have everything you need to design, deploy, and integrate inferenced AI models at the edge.

Turnkey Appliances to Custom Hardware

SCAN can deploy turnkey edge appliances from our industry-leading partners EuroTech and Advantech, or build completely bespoke solutions through our own 3XS build team, integrating NVIDIA Jetson GPUs into customised chassis.

Whether it’s defect detection models in factories, crowd analytics in smart cities, environmental sensing in remote locations, or 3D-assisted surgery in hospitals, our edge AI solutions accelerate your journey from concept to business impact.

NVIDIA Jetson - What will you build with yours?

| | Jetson AGX Thor | Jetson AGX Orin | Jetson Orin NX | Jetson Orin Nano | Jetson AGX Xavier | Jetson Xavier NX | Jetson TX2 |
|---|---|---|---|---|---|---|---|
| GPU | Blackwell | Ampere | Ampere | Ampere | Volta | Volta | Pascal |
| CUDA CORES | 2,560 | 2,048 | 1,024 | 512 / 1,024 | 512 | 384 | 256 |
| TENSOR CORES | 96 | 64 | 32 | 16 / 32 | 64 | 48 | 0 |
| MEMORY | 64 / 128GB | 32 / 64GB | 8 / 16GB | 4 / 8GB | 32 / 64GB | 8 / 16GB | 4 / 8GB |
| PERFORMANCE | 2,070 TeraFLOPs | 275 TOPS | 100 TOPS | 40 TOPS | 32 TOPS | 21 TOPS | 1.33 TeraFLOPs |
| TDP | 40 - 130W | 15 - 60W | 10 - 25W | 5 - 15W | 10 - 40W | 10 - 20W | 7.5 - 20W |
| DIMENSIONS | 100 x 87mm | 100 x 87mm | 70 x 45mm | 70 x 45mm | 100 x 87mm | 70 x 45mm | 87 x 50mm |
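
When shortlisting a module for a power-constrained site, a rough performance-per-watt figure can be a useful first filter. The short Python sketch below works this out from the table above for the five modules rated in TOPS. It assumes the quoted performance is reached at the top of each TDP range, which is a simplification for illustration rather than a measured result, and it leaves out Jetson AGX Thor and Jetson TX2 because their figures are quoted in TeraFLOPs and are not directly comparable.

```python
# Figures copied from the table above: (performance in TOPS, upper TDP bound in watts).
# Assumption: the quoted TOPS figure is reached at the top of the TDP range.
MODULES = {
    "Jetson AGX Orin": (275, 60),
    "Jetson Orin NX": (100, 25),
    "Jetson Orin Nano": (40, 15),
    "Jetson AGX Xavier": (32, 40),
    "Jetson Xavier NX": (21, 20),
}


def tops_per_watt(tops, max_watts):
    """Return performance per watt at the upper TDP bound."""
    return tops / max_watts


def rank_by_efficiency(modules):
    """Return module names ordered from most to least TOPS per watt."""
    return sorted(
        modules,
        key=lambda name: tops_per_watt(*modules[name]),
        reverse=True,
    )


if __name__ == "__main__":
    for name in rank_by_efficiency(MODULES):
        print(f"{name}: {tops_per_watt(*MODULES[name]):.2f} TOPS/W")
```

Real-world efficiency depends on the model, precision and power mode in use, so treat the ranking as a starting point for a conversation rather than a benchmark.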

Managed SD-WAN Services

Our managed SD-WAN service provides intelligent, software-defined network routing across your networked devices, optimising traffic paths for AI workloads while ensuring predictable performance even across diverse or remote locations. By prioritising critical AI inferencing data and dynamically adjusting to changing network conditions, SD-WAN ensures real-time applications remain responsive and stable.

Whether you’re deploying video analytics across retail sites, robotics controls in manufacturing, or environmental monitoring in distributed assets, we ensure your edge AI solutions remain continuously connected and optimised to drive business outcomes.

System Integration Services

Deploying edge AI and IoT solutions is only part of the journey – integrating them into existing systems is where true business value is unlocked. We bridge that gap, ensuring that real-time data flows into your operational applications and data sets.

By building robust APIs, middleware, and data pipelines that connect edge inferencing results with software and cloud platforms, we create a unified digital ecosystem.
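
As an illustration of the kind of pipeline step described above, the Python sketch below packages a single edge inference result as JSON so that a downstream API or dashboard can consume it. The `InferenceResult` fields, the `to_payload` helper and the 0.5 confidence threshold are all hypothetical names and values chosen for the example, not part of any specific SCAN or NVIDIA interface.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class InferenceResult:
    """One detection produced by a model running on an edge device (hypothetical schema)."""
    device_id: str
    model: str
    label: str
    confidence: float
    captured_at: str  # ISO 8601 timestamp


def to_payload(result, min_confidence=0.5):
    """Serialise a result to JSON, or return None if it falls below the threshold.

    Filtering at the edge keeps low-confidence detections off the network.
    """
    if result.confidence < min_confidence:
        return None
    return json.dumps(asdict(result), sort_keys=True)


if __name__ == "__main__":
    result = InferenceResult(
        device_id="line-3-camera-1",
        model="defect-detector",
        label="scratch",
        confidence=0.93,
        captured_at=datetime.now(timezone.utc).isoformat(),
    )
    print(to_payload(result))
```

In a real deployment the payload would typically be sent on to a message broker or REST endpoint; that transport is left out here because it depends on the systems being integrated.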

Beyond integration, we build business intelligence tools and map data to your KPIs and workflows. We help you gain live visibility into operations, enabling faster decisions, proactive interventions, and data-driven strategic planning.

Whether it’s production line metrics, predictive maintenance alerts, or customer behavioural analytics, our edge development and integration services ensure your edge data is driving business decisions.

Edge Solutions for Every Sector

Wherever there is data, there is AI. Turn data into action across any industry application.

Insights from Dashboard Data

Utilise LLMs to query vast amounts of production data from large networks of Internet of Things (IoT) devices.

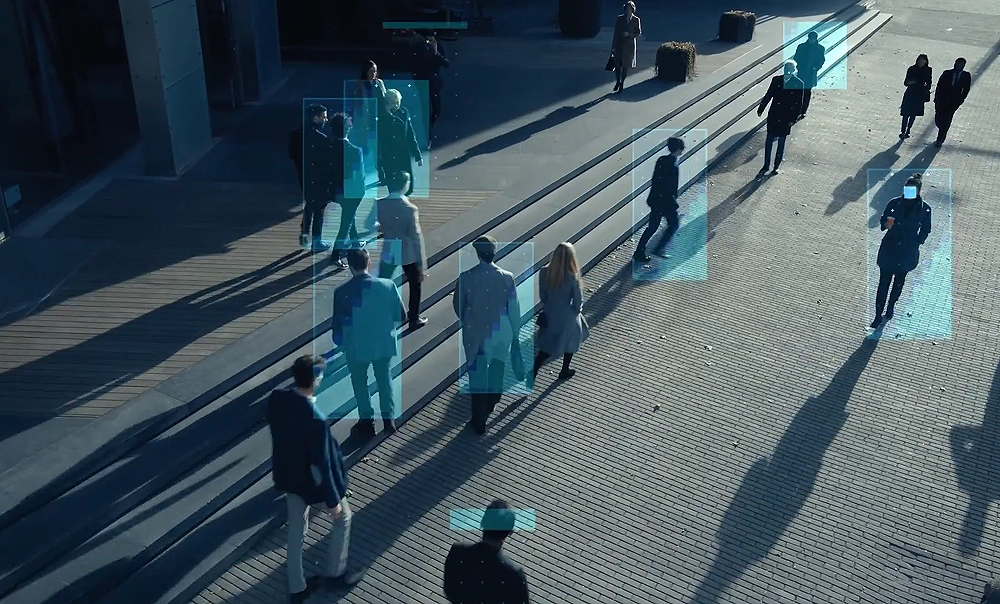

Stand out from the Crowd

Analyse object data to enhance visitor experiences, improve security, and help optimise revenue generation.

Safety First

Utilise safeguards to protect people, property, business communities and critical infrastructure.

Use Energy Smarter

Improve sustainability by utilising smart lighting solutions to meet green energy targets and tailor energy use to requirements.

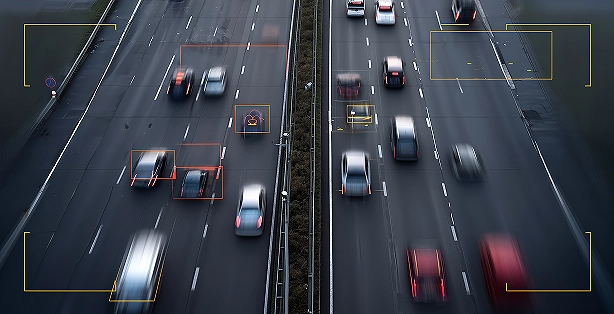

Beat the Traffic

Identify patterns in data pipelines to assess the impact on downstream operations based on trigger events and volumetric flow.

See what can’t be seen

Use cameras to build AR and VR solutions that aid decision making, improve training and assist in real-time scenarios.

What's your use case?

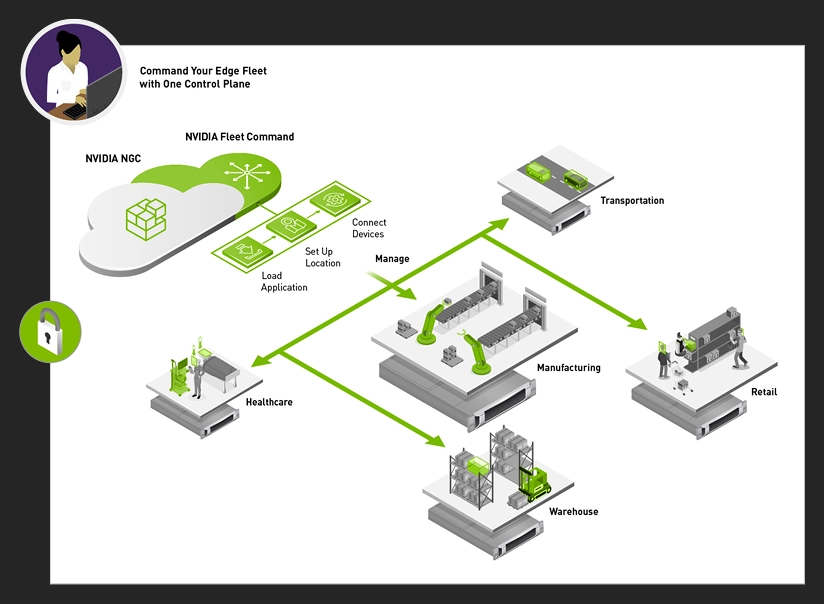

Managing Inferencing Deployments

NVIDIA Fleet Command is a cloud service that securely deploys, manages, and scales AI applications across distributed edge infrastructure. It centralises the management of Jetson-based edge AI devices and, once a system is installed, can manage its end-to-end lifecycle, making it easy to provision, update, and monitor AI at remote locations.

Inferencing Solutions Explained

Learn more about inferencing solutions and how NVIDIA Jetson is the world's leading platform for embedded applications, comprising small form-factor, high-performance GPU modules, the NVIDIA JetPack Software Development Kit (SDK) and an ecosystem of sensors, services and third-party products to speed up development.

Inferencing Solutions that Drive Action

Partnering with SCAN to design, deploy, integrate and manage your inferenced AI solutions means choosing an NVIDIA-certified Managed Service Provider and Elite NVIDIA Partner to be at the heart of your AI business solutions.

Our AI ecosystem has been built to walk customers through their journey of developing, training, and deploying AI models that accelerate business outcomes by capturing, processing, and transforming data into action.

The first step towards building autonomous agents that provide meaningful business value is deploying AI models that create actionable insights. See how we can help your research or business achieve those goals.