Scan is excited to be heading back to NVIDIA GTC 2026, joining the global community of innovators shaping the future of AI, accelerated computing, and advanced visualisation. Alongside showcasing our latest AI‑ready solutions, we’ll also be hosting a special Scan Springboard Social in San Jose - an evening of drinks, food, and relaxed networking with industry friends. Taking place on 19 March 2026 at EOS & NYX, 201 S. Second St. Ste. 120, San Jose, it’s the perfect opportunity to connect, share ideas, and unwind during GTC week, with more agenda details coming soon.

BOOK A MEETING AT GTC

NVIDIA GTC 2026 Keynote

Watch NVIDIA Founder and CEO Jensen Huang’s GTC keynote as he unveils the latest breakthroughs in AI and accelerated computing.

See how agentic AI, AI factories, and physical AI are powering the next generation of intelligent systems.

Scan at GTC 2026

Watch our highlight videos below for the latest announcements from NVIDIA

Join Scan at NVIDIA GTC 2026 — Plus Our Springboard Social Event!

NVIDIA GTC 2026 - Jensen Huang's Keynote Highlights

GTC 2026: DAY 1 - The Scan Team React to the Keynote

GTC 2026: DAY 2 - We Take you on a Tour of the Exhibition Hall

Why join the Scan Springboard UK community?

Scan Springboard UK is free to join. It is a selective, opt-in community for developers, entrepreneurs, and early-stage businesses across the UK working in AI. Backed by Scan, NVIDIA and Supermicro, our programme includes:

Peer-Driven Platform for Strategic Conversations

Connect with fellow founders to exchange insights, tackle challenges, and grow through shared experience.

Funding Connections

Facilitate access to venture capitalists, angels, and funders.

Annual Pitch Event

Showcase members at an exclusive Annual Pitch Event.

Cross-Industry Partnerships

Enable vertical collaboration across industries and technologies.

UK Events

Host quarterly in-person events across the UK to foster regional centres for innovation.

Go-to-Market Support

Help take solutions to market through our customers and commercial teams.

Be the First to Know

Discover the next-gen GPUs and AI appliances below; make sure to register your interest to be the first in the queue when more information is available.

DGX Station GB300

First teased at GTC 2025, the new-generation DGX Station GB300 will be shipping in the next few months, NVIDIA announced. The system is designed for power users and teams of up to seven people tackling AI and data science projects more demanding than the DGX Spark can handle, bridging the gap between it and the datacentre-scale DGX appliances.

Powered by an SoC equipped with a Blackwell Ultra GPU and Arm CPU, the DGX Station GB300 delivers an unprecedented 20 PetaFLOPS of performance and has 748GB of coherent memory for running huge datasets.

Customisable OEM versions of the DGX Station GB300 from brands such as Asus will be available from Scan shortly.

FIND OUT MORE

DLSS 5 Game Rendering

NVIDIA also revealed a new generation of its AI-enhanced rendering technology for games. The fifth iteration of DLSS uses an AI model that has been trained to understand complex characteristics such as faces, hair, fabric and skin, along with environmental lighting conditions, to generate new frames based on the game's original art assets.

While DLSS 5 isn't due for release until autumn 2026, triple-A game publishers have already announced support for the new tech in their titles, including Bethesda, CAPCOM, Hotta Studio, NetEase, NCSOFT, S-GAME, Tencent, Ubisoft and Warner Bros.

In the meantime, check out the existing range of NVIDIA GeForce 50-Series GPUs best placed to run DLSS 5 when it arrives.

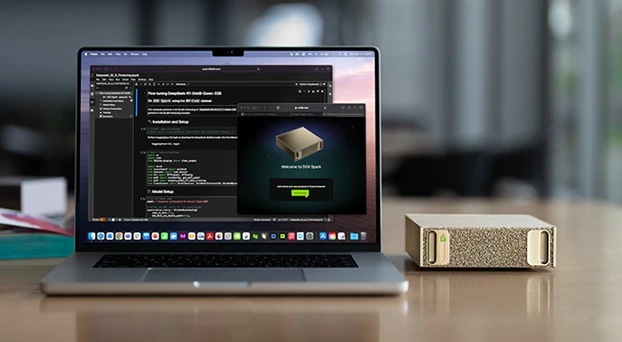

Four-Way DGX Spark

Since its launch last autumn, the diminutive DGX Spark has proven to be an extremely popular device, providing an affordable entry point for developing AI models thanks to its powerful GB10 SoC and 128GB of memory.

At GTC, NVIDIA announced that you can link up to four Sparks together in a cluster, effectively providing a single huge 512GB memory pool for AI models of over 800 billion parameters. Another great reason to start your AI journey with the DGX Spark.
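As a rough illustration of the numbers above, the sketch below works out the pooled memory of a four-Spark cluster and the space needed for the weights of an 800-billion-parameter model at different precisions. The function names are hypothetical, and the precisions shown (16-, 8- and 4-bit) are our own assumption rather than anything NVIDIA has stated; the estimate covers weights only and ignores the KV cache and runtime overhead.

```python
# Back-of-envelope sizing sketch (weights only; decimal gigabytes).
# Function names and the choice of precisions are illustrative assumptions.

BYTES_PER_GB = 10**9


def pooled_memory_gb(nodes: int, gb_per_node: int = 128) -> int:
    """Total memory across a cluster of identical nodes."""
    return nodes * gb_per_node


def weights_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory needed to hold the model weights alone."""
    total_bytes = params_billion * 10**9 * bits_per_param / 8
    return total_bytes / BYTES_PER_GB


pool = pooled_memory_gb(4)  # four DGX Sparks at 128GB each
for bits in (16, 8, 4):
    need = weights_gb(800, bits)
    print(f"{bits}-bit weights: {need:.0f}GB needed, fits in {pool}GB: {need <= pool}")
```

Under these assumptions, only the 4-bit case fits within the 512GB pool, which suggests that models of this size would need to be quantised to run on a four-Spark cluster.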

FIND OUT MORE

RTX PRO 4500 Blackwell Server Edition

This upcoming card expands the range of passively-cooled server GPUs for AI and visualisation workloads.

The RTX PRO 4500 Blackwell packs in 10,496 CUDA cores, 328 5th gen Tensor cores and 82 4th gen RT cores, roughly half that of the RTX PRO 6000 Blackwell, and is equipped with 32GB of ultra-reliable GDDR7 ECC memory. With a TDP of 165W and a single-slot design, the new card will allow some very high-density configurations.

The new GPU will be available shortly in EGX, MGX and RTX PRO servers from Scan.

FIND OUT MORE

BlueField-4 STX Storage Architecture

This is a new storage architecture, building on the Inference Context Memory Storage Platform announced at CES earlier this year.

Specially designed for the context-centric nature of agentic AI models, the new STX storage architecture boosts the token rate by as much as 5x, while delivering 4x greater energy efficiency versus CPU-powered storage systems.

STX-based storage systems from Scan partners such as DDN, Dell, NetApp, VAST and WEKA will be available in the second half of 2026.

Agentic AI Vera CPU

NVIDIA also revealed more details about its forthcoming Vera CPU. Specially designed for agentic AI workloads, Vera features 88 Arm-based Olympus cores and is the first CPU to support FP8 precision.

It also features NVIDIA NVLink-C2C to connect with next-generation Rubin GPUs, with seven times the bandwidth of PCIe 6.

Vera CPUs will be at the heart of many next-generation AI appliances based on multiple MGX systems available from Scan later this year.

Groq 3 LPX LPUs

NVIDIA also used GTC to reveal that it'll be adding LPUs to its product line-up for the first time.

LPUs (Language Processing Units) have been around since 2016 from Groq (not to be confused with Grok), and differ from GPUs in being specifically designed for inferencing AI models. NVIDIA has licensed the core technology from Groq.

Next-gen systems, built using Vera CPUs and Rubin GPUs, will feature dedicated Groq 3 LPX LPUs, accelerating agentic AI inferencing, and will be available later this year from Scan.

NemoClaw Plugin for OpenClaw

NemoClaw is a new software plugin for the popular AI agent OpenClaw.

NemoClaw enables you to develop and run agents more securely, with policy-based privacy and security guardrails, giving users control over how agents behave and handle data.

NemoClaw isn't just for enterprise AI on DGX systems; it's also compatible with GeForce RTX PCs, and can be run locally or in the cloud.

DOWNLOAD NEMOCLAW

NVIDIA GTC 2025 Keynote

Catch all the key highlights from NVIDIA CEO Jensen Huang's GTC 2025 keynote, where the latest innovations in AI, robotics, and computing took centre stage.

Key announcements include Blackwell RTX PRO, DGX Station GB300, DGX Spark and even innovations in physical AI and robotics.

Scan at GTC 2025

Watch our highlight videos below for the latest announcements from NVIDIA

Day 1 Highlights

Day 2 Highlights

Day 3 Highlights

Day 4 Highlights

Be the First to Know

Discover the next-gen GPUs and AI appliances below; make sure to register your interest to be the first in the queue when more information is available.

RTX PRO Blackwell Workstation GPUs

The latest generation of workstation GPUs for artists, architects, engineers and data scientists. Based on the Blackwell architecture, these new GPUs deliver unparalleled AI performance, up to 3x faster than Ada Lovelace GPUs, and add support for FP4. Blackwell GPUs are also incredible for ray tracing, rendering up to 2x faster than Ada Lovelace GPUs. Multiple models are available, including the RTX PRO 6000 with a massive 96GB of memory, plus the 5000, 4500 and 4000.

RTX PRO Blackwell GPUs are available in 2-, 3- and 4-way configurations in workstations from Scan 3XS Systems.

FIND OUT MORE

DGX Station GB300

The new DGX Station provides datacentre-level performance in a desktop device. This makes it the ultimate appliance for AI developers, researchers and data science teams. It is powered by the GB300 Superchip, which combines 72 powerful Arm CPU cores and a Blackwell Ultra GPU, served by a huge 784GB of coherent memory.

As an AI appliance, the DGX Station is preinstalled with DGX Base OS and the full NVIDIA AI software stack, speeding up AI model development.

FIND OUT MORE

DGX Spark

The growing size and complexity of generative AI models make development very challenging for traditional AI workstations. The DGX Spark solves this problem, providing up to 1,000 TOPS of AI performance via its Grace GB10 Superchip in a compact desktop device. This combines 20 powerful Arm CPU cores with a Blackwell GPU, served by 128GB of unified memory.

As an AI appliance, the DGX Spark is preinstalled with DGX Base OS and the full NVIDIA AI software stack, speeding up AI model development.

FIND OUT MORE

RTX PRO Blackwell Server GPU

The latest generation of server GPUs for visual and AI workloads. Based on the Blackwell architecture, this new GPU delivers unparalleled AI performance, up to 3x faster than Ada Lovelace GPUs, and adds support for FP4. Blackwell GPUs are also incredible for ray tracing, rendering up to 2x faster than Ada Lovelace GPUs.

The RTX PRO 6000 Blackwell Server Edition GPU with a massive 96GB of memory is available in multiple configurations in NVIDIA EGX servers from Scan 3XS Systems.

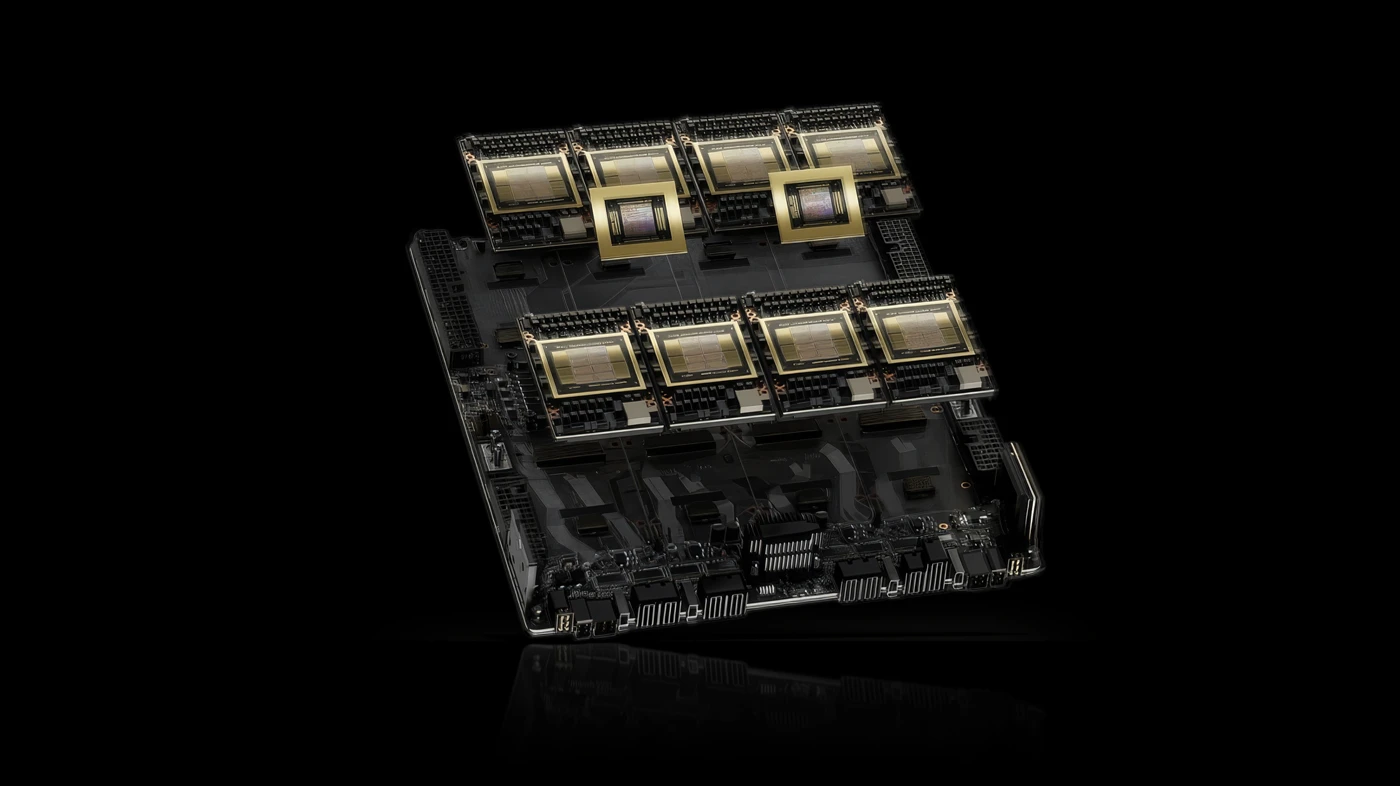

Blackwell coming to HGX servers

The next generation of NVIDIA HGX servers will also be upgraded to the Blackwell architecture.

The base configuration, the HGX B200, includes 8 Blackwell GPUs. There will also be a more powerful configuration, the HGX B300 NVL16, with 16 Blackwell Ultra GPUs.

Both configurations are now available from Scan.

NVIDIA GB300 NVL72 for AI factories

A turnkey AI factory, providing the ultimate in performance for agentic AI and generative AI projects.

The GB300 NVL72 combines 36 nodes, each with a pair of Blackwell Ultra GPUs and a Grace CPU, working together as a unified AI platform. GB300 NVL72 delivers 50x higher performance than previous-generation Hopper systems.

The GB300 NVL72 will be available from Scan from the second half of 2025.

Silicon Photonics Switches

AI factories powered by hundreds of GPUs require more efficient networking than traditional fibre connections. Silicon photonics removes the need for transceivers, slashing power usage by 3.5x while delivering 63x greater signal integrity and 10x better resiliency.

NVIDIA Quantum-X Photonics InfiniBand switches are expected to be available later this year from Scan, with NVIDIA Spectrum-X Photonics Ethernet switches coming in 2026.

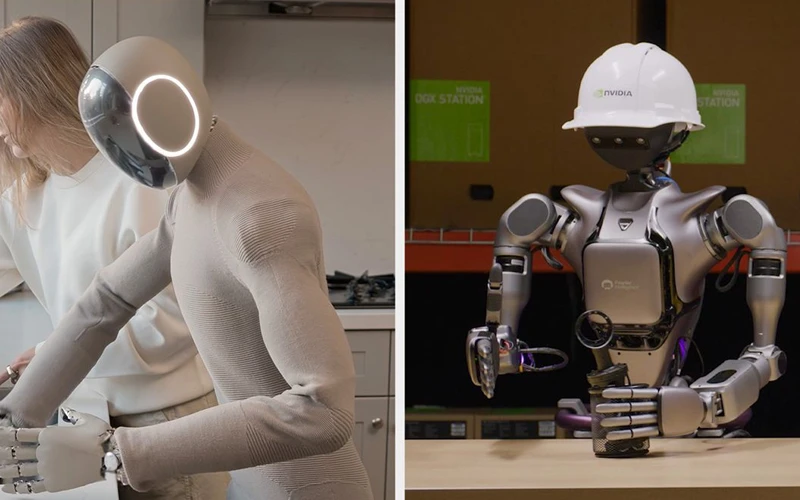

Isaac GR00T N1 & Newton Robotics

NVIDIA announced new software to improve robot development and simulation.

Isaac GR00T N1 is a foundational model for humanoid robots, with two modes: System 1 fast-thinking actions, mirroring human reflexes and intuition, and System 2 slow-thinking actions for methodical decision-making.

This is enhanced by Newton, a new physics engine developed in partnership with Disney and Google.

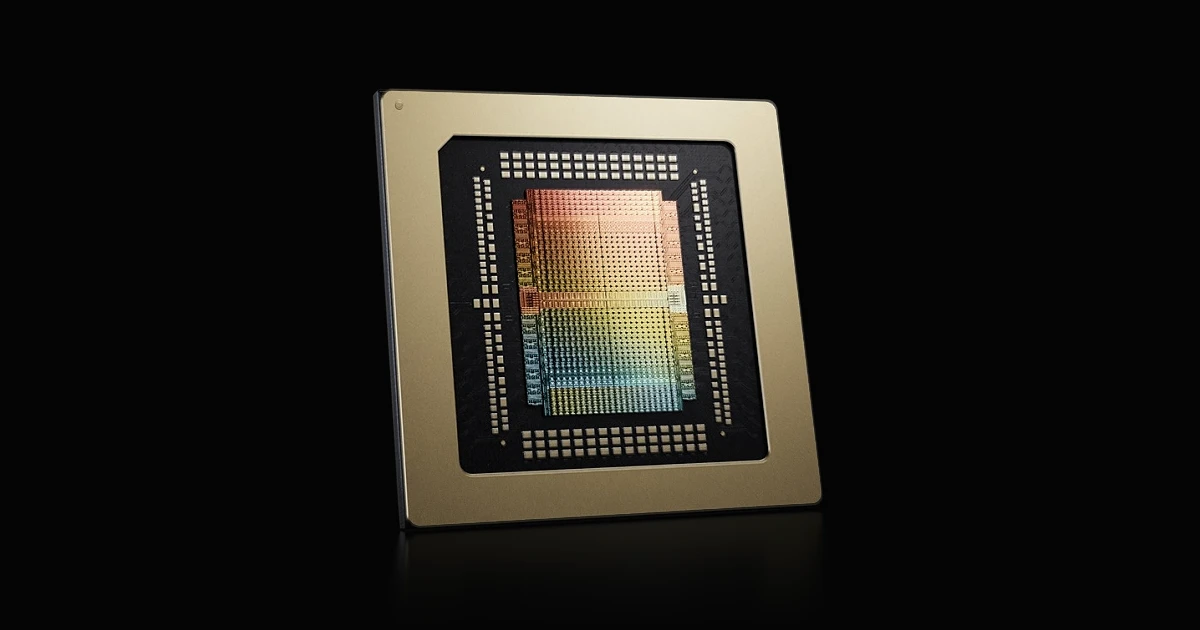

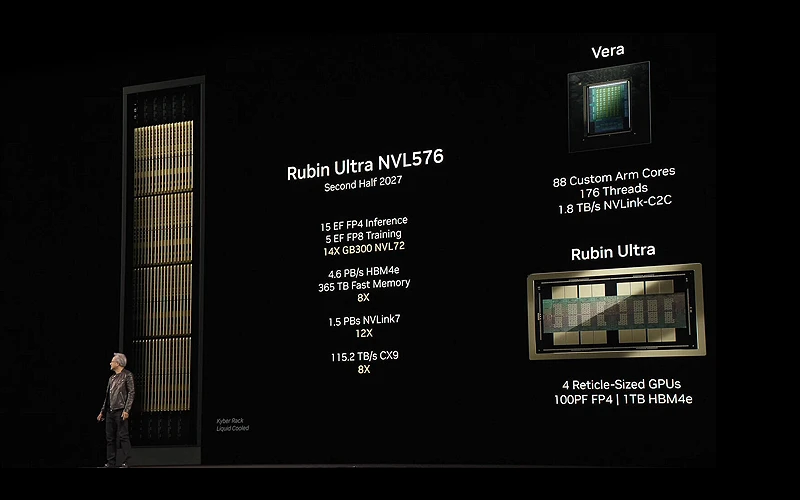

Next-Gen GPUs & CPUs Revealed

NVIDIA also teased the future generation GPU and CPU architectures coming down the line in the next few years.

The next GPU architecture, codenamed Rubin, will replace Blackwell and include multiple GPUs in a single package and up to 1TB of HBM4e memory. Delivering up to 100 petaFLOPS of FP4 performance, products based on Rubin are planned for 2027.

The next CPU, codenamed Vera, will replace Grace and include 88 custom Arm cores with SMT, for a total of 176 threads.

Following these, the next GPU, codenamed Feynman, is planned for 2028.

The Scan AI team were at GTC showcasing a virtual walkthrough of Lilith, an XR Dance Experience powered by AI technology over the Scan Cloud platform. We also showcased a Specsavers retail store design using NVIDIA Omniverse. See all the highlights in our videos below and learn more about the latest NVIDIA announcements from Jensen's keynote.

NVIDIA GTC 2024 Keynote

Did you miss Jensen Huang, CEO of NVIDIA, give his Keynote this week? Don't worry - we've got you covered! Today we have condensed all of the highlights into one video for you.

Scan at GTC 2024

Watch our highlight videos below for the latest announcements from NVIDIA

Day 1 Highlights

Day 2 Highlights

Day 3 Highlights

Day 4 Highlights

GTC Interview

Be the First to Know

Discover the next-gen GPUs and AI appliances below; make sure to register your interest to be the first in the queue when more information is available.

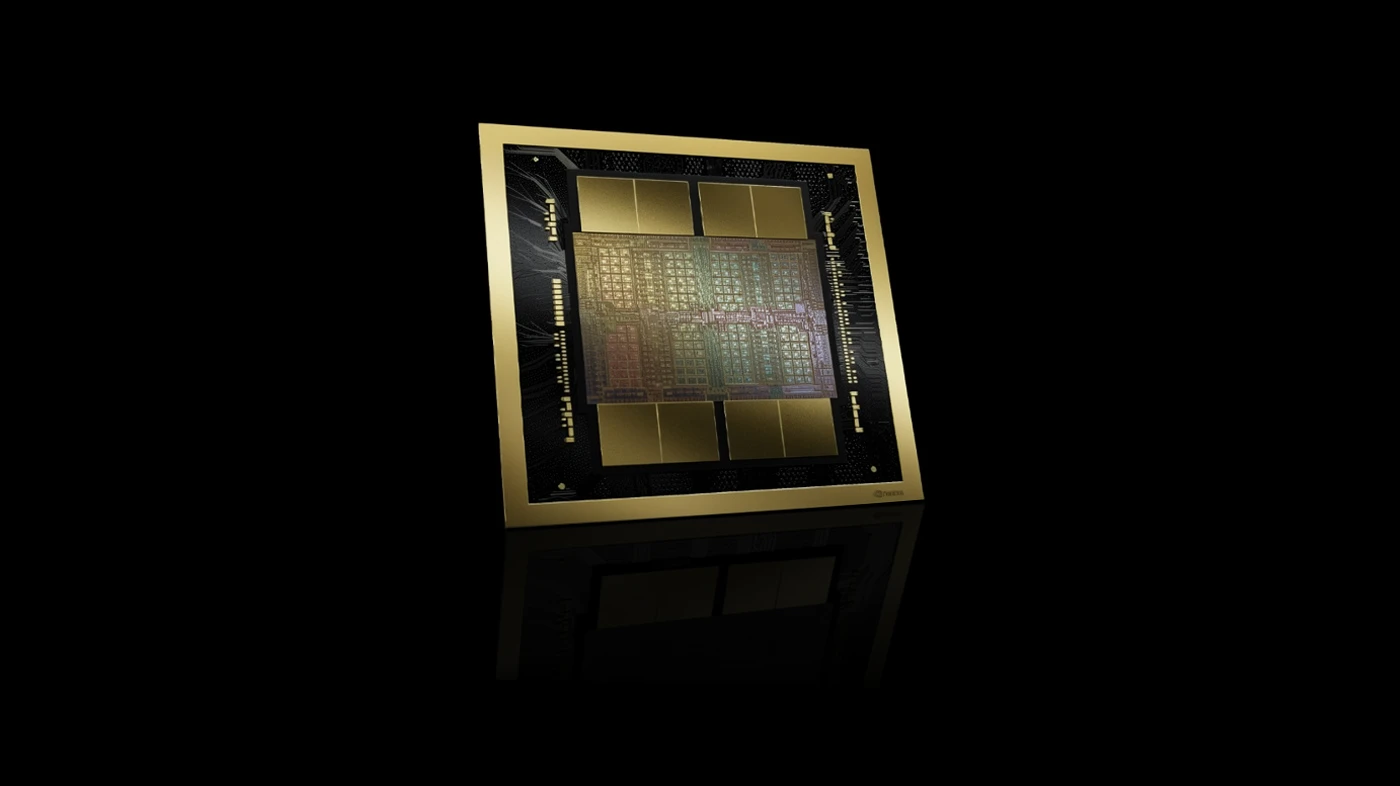

Blackwell GPU Architecture

The latest Blackwell GPU architecture has been designed for building and training generative AI models. Blackwell delivers up to 30x faster inferencing and 4x faster training, with 25x lower TCO than its predecessor, Hopper. Multiple Blackwell-based GPUs will be available from late 2024.

800Gb/s Networking

AI systems need rapid access to data so NVIDIA announced an ecosystem of 800Gb/s networking products, doubling the throughput of today’s fastest networks. Key products include Quantum-X800 InfiniBand and Spectrum-X800 Ethernet switches plus ConnectX-8 SmartNICs, planned for launch in late 2024.

DGX B200

The latest DGX AI appliance, the DGX B200, features eight B200 GPUs with a total of 1.44TB of GPU memory along with two Intel Xeon CPUs and 400Gb/s networking. The DGX B200 is expected to launch in late 2024.

HGX B200 & B100

B200 and B100 GPUs will also be available in custom-built GPU servers based on the HGX platform. Expect up to 15x faster inferencing than their predecessors using H100 GPUs. Keep an eye out for these systems in late 2024.

DGX SuperPOD GB200

Delivering the ultimate in performance for LLMs and generative AI projects, SuperPODs feature 36 GB200 Superchips per rack, connected via 5th gen NVLink. Planned availability is late 2024.

NIM Microservices

NIM is a new collection of pre-built containers that massively speed up deploying generative AI projects. NIM is available exclusively via NVIDIA AI Enterprise, bundled as standard with NVIDIA DGX appliances and select GPUs, and also available as a standalone licence.

cuOpt Microservice

The cuOpt microservice accelerates operations optimisation by enabling faster and better decisions in areas such as logistics and supply chains. cuOpt is available exclusively via NVIDIA AI Enterprise, bundled as standard with NVIDIA DGX appliances and select GPUs, and also available as a standalone licence.

Omniverse Cloud APIs

A new collection of five APIs that extend the capabilities of Omniverse for creating digital twins and simulating autonomous machines such as robots and self-driving vehicles. Expect to see new applications from ISVs such as Ansys, Cadence, Dassault Systèmes, Hexagon, Microsoft, Rockwell Automation, Siemens and Trimble.

Project GR00T

A new general-purpose foundational model designed to drive breakthroughs in robotics and embedded AI. Project GR00T will be accelerated by the new Jetson Thor SoC, based on the Blackwell architecture, and the Isaac framework.