Introduction

A wired network card is the means by which a desktop PC, workstation, server or storage appliance connects to a network switch and the wider network. In the home or home office environment, wireless connectivity is more common nowadays and you can learn more by reading our WIRELESS NETWORK CARD BUYERS GUIDE. However, in the business space, wired technology is usually the norm as wired networks offer better security, speeds and simplicity of management. This guide will look at the various types of wired network cards and where they are most commonly used.

Network Interface Card, or NIC, is a general catch-all term, but as we’ll see there are a few other common terms, including SmartNIC, SuperNIC, DPU and IPU. We’ll get to those shortly, but first we’ll look at the two standard network protocols.

Network Protocols

There are two main types of networking protocol in use today - Ethernet and InfiniBand. Both do the same job - transmitting data packets from one device on the network to another - however, they differ in how they do this and in the resources they use to do it. You may occasionally come across older technologies such as Fibre Channel or Intel OmniPath, but as the capabilities of Ethernet and InfiniBand have developed, these have largely fallen out of favour.

Ethernet

Ethernet is the most common network protocol, delivering dependable networking since the early 1980s. In corporate networks, 1GbE has long been the standard, with the faster standards listed below also available.

| Standard | Speed (Gb/s) | Speed (GB/s) |
| --- | --- | --- |
| 1GbE | 1 | 0.125 |
| 2.5GbE | 2.5 | 0.3125 |
| 5GbE | 5 | 0.625 |
| 10GbE | 10 | 1.25 |
| 25GbE | 25 | 3.125 |
| 40GbE | 40 | 5 |
| 50GbE | 50 | 6.25 |
| 100GbE | 100 | 12.5 |
| 200GbE | 200 | 25 |
| 400GbE | 400 | 50 |
| 800GbE | 800 | 100 |
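The gigabyte-per-second figures above follow from dividing the line rate in gigabits per second by eight. A minimal Python sketch of that arithmetic is shown here; the function name `gbps_to_gigabytes_per_sec` is purely illustrative, and the calculation ignores protocol overhead, so real-world throughput will be somewhat lower.

```python
def gbps_to_gigabytes_per_sec(gbps: float) -> float:
    """Convert a line rate in gigabits per second to gigabytes per second."""
    return gbps / 8  # 8 bits per byte; protocol overhead is ignored


if __name__ == "__main__":
    # Print the conversion for a handful of the Ethernet standards listed above.
    for rate in (1, 2.5, 10, 25, 100, 400):
        print(f"{rate}GbE = {gbps_to_gigabytes_per_sec(rate)} GB/s")
```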

InfiniBand

InfiniBand is an alternative protocol to Ethernet, usually found in AI and HPC applications where high bandwidth and low latency are key requirements. InfiniBand achieves improved throughput by handling data transmission on the network adapter rather than on the server CPU.

| Generation | Speed (Gb/s) | Speed (GB/s) |
| --- | --- | --- |
| SDR | 8 | 1 |
| DDR | 16 | 2 |
| QDR | 32 | 4 |
| FDR10 | 40 | 5 |
| FDR | 54 | 6.75 |
| EDR | 100 | 12.5 |
| HDR | 200 | 25 |
| NDR | 400 | 50 |
| XDR | 800 | 100 |
| GDR | 1,600 | 200 |

It is worth pointing out that Ethernet speeds above 50GbE and all InfiniBand speeds are predominantly concerned with GPU-accelerated workloads such as AI & HPC, powered by brands like NVIDIA. You can learn more by reading our dedicated NVIDIA NETWORKING BUYERS GUIDE.
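To put these speeds into perspective, it can help to estimate how long a dataset would take to cross a link. The sketch below is a best-case calculation only: the function name `transfer_time_seconds` and the 1,000 GB dataset are illustrative assumptions, and it ignores protocol overhead, latency and storage bottlenecks.

```python
def transfer_time_seconds(dataset_gigabytes: float, link_gbps: float) -> float:
    """Ideal time to move a dataset over a link, ignoring overhead and latency."""
    if link_gbps <= 0:
        raise ValueError("link speed must be positive")
    dataset_gigabits = dataset_gigabytes * 8  # 8 bits per byte
    return dataset_gigabits / link_gbps


if __name__ == "__main__":
    # Compare a hypothetical 1,000 GB dataset across three link speeds.
    for name, gbps in (("10GbE", 10), ("100GbE", 100), ("NDR InfiniBand", 400)):
        seconds = transfer_time_seconds(1000, gbps)
        print(f"1,000 GB over {name}: {seconds:.0f} s")
```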

Types of Network Card

As previously mentioned, NIC now usually refers to the standard Ethernet network card found in PCs, workstations and basic servers. These are usually 1GbE, or in some cases up to 10GbE, as this is sufficient for most regular office Microsoft Windows-based workloads. Where demanding workloads such as graphics or Linux-based scientific computing need faster network speeds and lower latencies, Smart NICs and Super NICs facilitate this. Data Processing Units (DPUs) - also sometimes called Infrastructure Processing Units (IPUs) - add enhanced levels of offloading and management to aid throughput and efficiency further. This is explored further in the video below.

NICs

Although only a small component in an overall system build, the NIC can contribute to a huge uplift in performance. Basic PC or workstation NICs start with throughput speeds of 1Gb/s through a single port, scaling to 40Gb/s at the top end for server use, featuring two or four ports. All processing of data is performed by the CPU(s) and GPU(s) installed in the server, which introduces latency as data is transferred around the server.
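If you want to check what speed an installed NIC has actually negotiated, Linux exposes it through sysfs. The following is a minimal sketch, assuming a Linux host where the kernel reports the link speed in Mb/s at `/sys/class/net/<interface>/speed`; the helper name `link_speed_mbps` and the interface name `eth0` are illustrative and will differ from system to system.

```python
from pathlib import Path
from typing import Optional


def link_speed_mbps(interface: str, sysfs_root: str = "/sys/class/net") -> Optional[int]:
    """Return the negotiated link speed in Mb/s, or None if it cannot be read."""
    speed_file = Path(sysfs_root) / interface / "speed"
    try:
        speed = int(speed_file.read_text().strip())
    except (OSError, ValueError):
        return None  # interface missing, link down, or speed not reported
    return speed if speed > 0 else None  # the kernel reports -1 when unknown


if __name__ == "__main__":
    # "eth0" is a placeholder; substitute the interface name on your system.
    print(link_speed_mbps("eth0"))
```

A 10GbE card that has negotiated correctly would be expected to report 10000; a lower figure can point to a cabling or switch port mismatch.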

Smart NICs

A Smart NIC performs all the tasks of a regular NIC, but in order to cope with higher throughput speeds it offloads some of the work, reducing pressure on other components in the server. This means the network card itself performs some of the processing tasks, removing the latency usually introduced by the CPU, system memory and operating system. This offloading is referred to as Remote Direct Memory Access (RDMA) for InfiniBand cards and RDMA over Converged Ethernet (RoCE) for Ethernet cards.
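To see whether a server has any RDMA-capable adapters installed, one option on Linux is to look in sysfs. This is a rough sketch rather than a definitive check: it assumes a Linux host with the RDMA drivers loaded, where both InfiniBand and RoCE devices are registered under `/sys/class/infiniband`. The helper name `list_rdma_devices` is illustrative.

```python
from pathlib import Path
from typing import List


def list_rdma_devices(sysfs_root: str = "/sys/class/infiniband") -> List[str]:
    """Return the names of RDMA devices registered with the kernel, if any."""
    root = Path(sysfs_root)
    if not root.is_dir():
        return []  # no RDMA drivers loaded, or not a Linux host
    return sorted(entry.name for entry in root.iterdir())


if __name__ == "__main__":
    devices = list_rdma_devices()
    print(devices if devices else "No RDMA-capable devices found")
```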

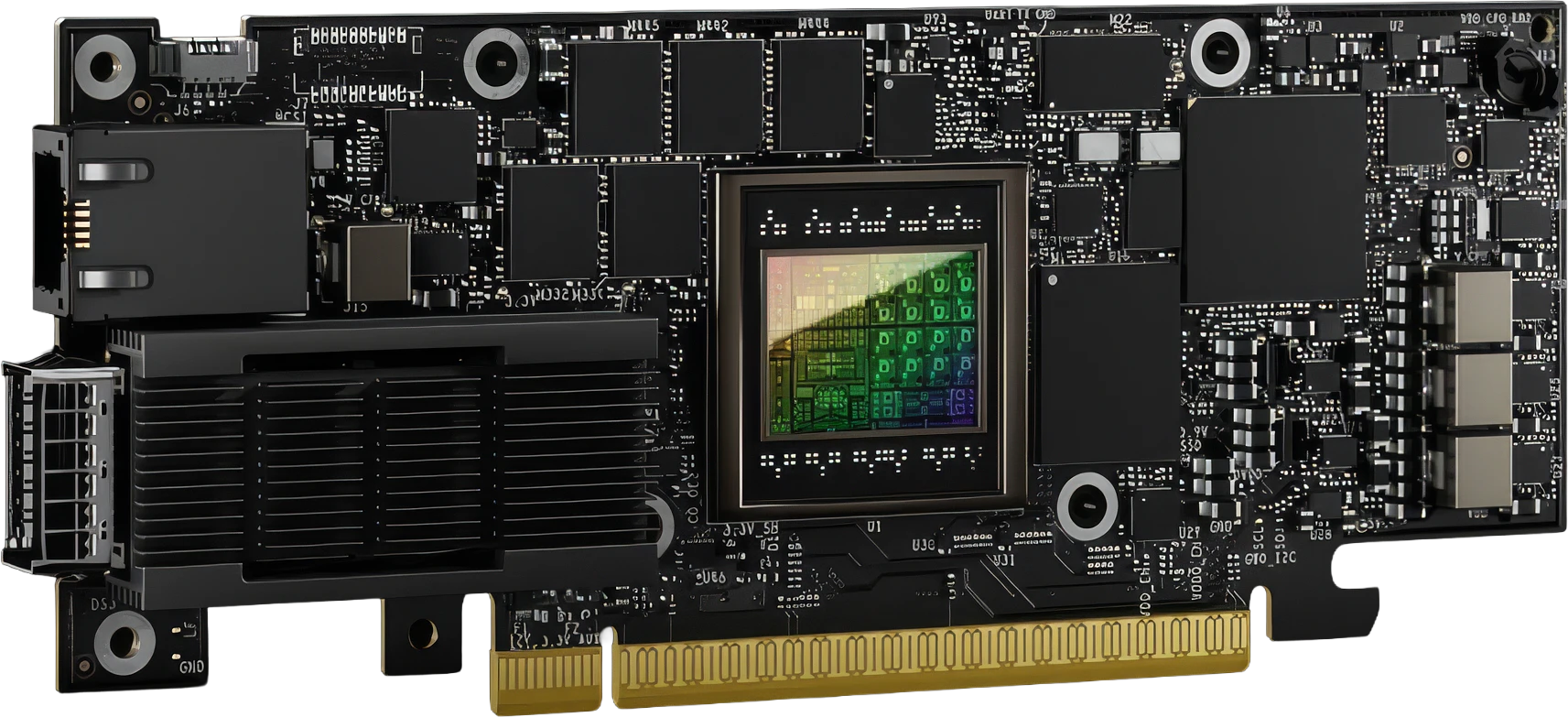

Super NICs

While a DPU and a SuperNIC share features and capabilities, SuperNICs are designed for network-intensive computing, providing RoCE network connectivity between GPU servers, optimising peak AI workload efficiency. As the sole purpose of the SuperNIC is to accelerate networking, it consumes less power than a DPU, which requires significant resources to offload applications from the CPU(s).

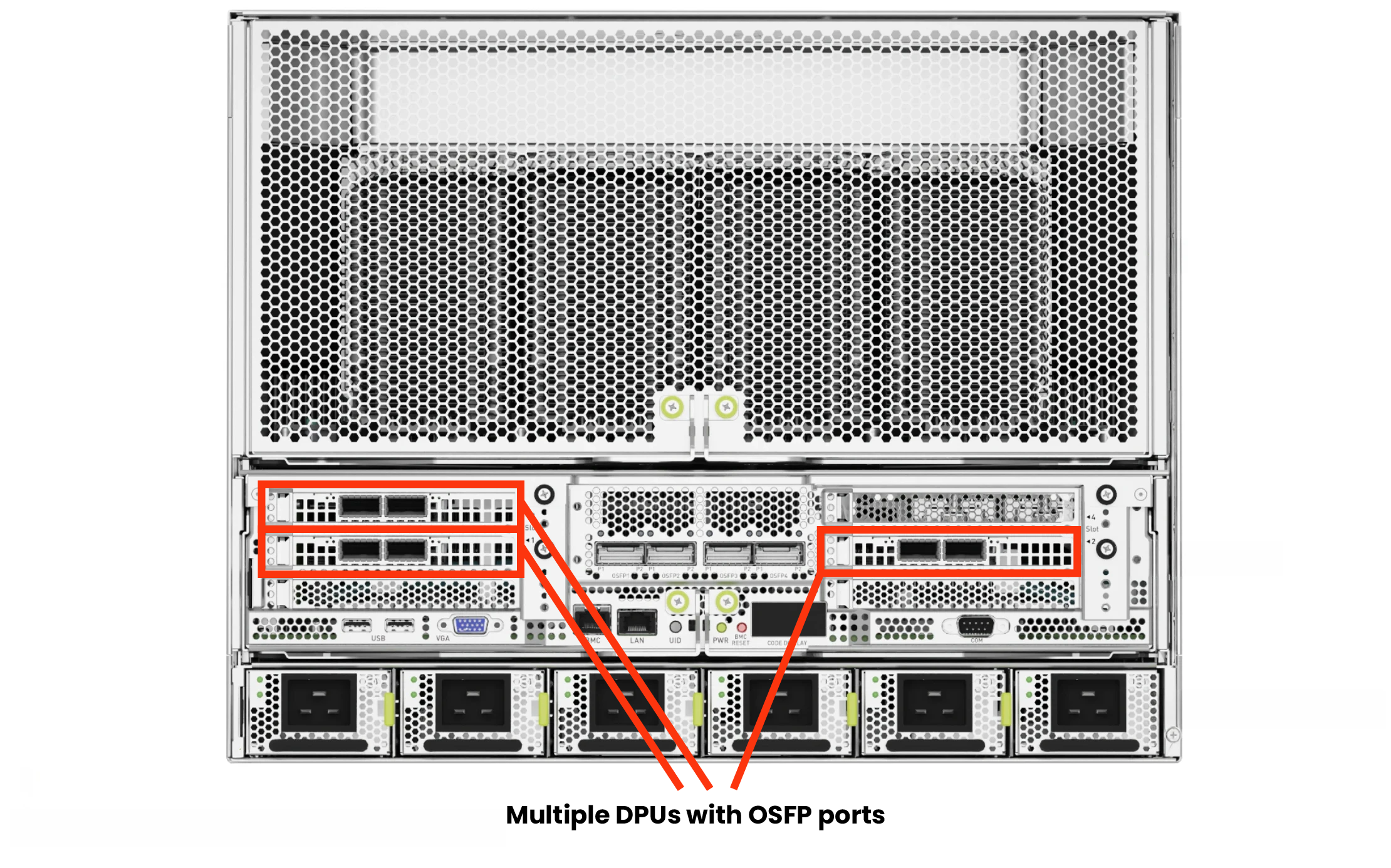

DPUs / IPUs

DPUs or IPUs offer an uplift over SmartNICs or SuperNICs by offloading, accelerating and isolating a broad range of advanced storage, networking and security services, including GPUDirect Storage, encryption, elastic storage, data integrity, decompression and deduplication. They provide a secure and accelerated infrastructure for HPC or AI workloads in largely containerised environments.

The table below summarises the features of the various types of NIC. It also covers power consumption and cost: Super NICs and DPUs have a higher power draw, so servers will need to be suitably equipped to house them. Although these types of NIC cost more, this is usually justified by the extra efficiencies they bring to mission-critical workloads and demanding applications such as AI.

| Feature | NIC | Smart NIC | Super NIC | DPU / IPU |
| --- | --- | --- | --- | --- |
| Network Connectivity | ✔ | ✔ | ✔ | ✔ |
| Network Protocols | Ethernet | Ethernet / InfiniBand | Ethernet / InfiniBand | Ethernet / InfiniBand |
| Network Speeds | 1-40Gb/s | 50-200Gb/s | 200-800Gb/s | 200-800Gb/s |
| CPU Offloading for Network Functions | ✖ | ✔ | ✔ | ✔ |
| Memory Controllers | ✖ | ✔ | ✔ | ✔ |
| Network Accelerators | ✖ | ✔ | ✔ | ✔ |
| Storage Accelerators | ✖ | ✖ | ✖ | ✔ |
| Crypto Accelerators | ✖ | ✖ | ✖ | ✔ |
| Power Consumption | Low | Medium | High | Very High |
| Cost | £ | ££ | £££ | ££££ |
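The comparison above can also be read as a simple selection rule: pick the lowest-cost type of card that meets your speed and offloading needs. The sketch below encodes a few rows of the table in Python. It assumes the four columns are NIC, Smart NIC, Super NIC and DPU / IPU, in the order the card types are introduced in this guide. The names `CardType` and `cheapest_card_type` are illustrative, and a real purchasing decision will involve more factors than this.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class CardType:
    name: str
    max_gbps: int            # top of the "Network Speeds" range
    network_offload: bool    # "CPU Offloading for Network Functions"
    storage_and_crypto: bool  # "Storage Accelerators" and "Crypto Accelerators"


# Ordered from lowest to highest cost and power draw, as in the table.
CARD_TYPES: Tuple[CardType, ...] = (
    CardType("NIC", 40, False, False),
    CardType("Smart NIC", 200, True, False),
    CardType("Super NIC", 800, True, False),
    CardType("DPU / IPU", 800, True, True),
)


def cheapest_card_type(
    required_gbps: float,
    need_network_offload: bool = False,
    need_storage_or_crypto: bool = False,
) -> Optional[str]:
    """Return the first (cheapest) card type meeting the needs, or None."""
    for card in CARD_TYPES:
        if card.max_gbps < required_gbps:
            continue  # too slow
        if need_network_offload and not card.network_offload:
            continue  # no CPU offloading for network functions
        if need_storage_or_crypto and not card.storage_and_crypto:
            continue  # no storage or crypto accelerators
        return card.name
    return None


if __name__ == "__main__":
    print(cheapest_card_type(10))
    print(cheapest_card_type(100, need_network_offload=True))
    print(cheapest_card_type(100, need_storage_or_crypto=True))
```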

It is worth mentioning that your choice of network cards will also impact the network switches that they will connect to. Protocols and speeds need to be matched for optimal performance and some switch management features and software can have an impact on NIC performance and the wider network. You can learn more by reading our NETWORK SWITCH BUYERS GUIDE or explore SuperNICs and DPUs further by reading our NVIDIA NETWORKING BUYERS GUIDE.

Network Card Types & Interfaces

The most common form factor of NICs, Smart NICs, Super NICs and DPUs / IPUs is an add-in PCIe card. The main exception to the PCIe format is the Open Compute Project (OCP) format. The OCP is an organisation that shares designs of datacentre products and best practices among companies in an attempt to promote standardisation and improve interoperability - it is supported by many large networking manufacturers.

PCIe card with dual RJ45 ports

The most common type of network card, featuring two RJ45 ports for standard Ethernet connections.

PCIe card with dual SFP ports

A PCIe card equipped with two SFP ports, commonly used for fibre optic connections.

OCP card with dual SFP ports

An OCP form factor card featuring two SFP ports, designed for use in OCP-compliant servers and datacentre environments.

Most standard Ethernet NICs use RJ45 ports connected by copper cables, whereas Ethernet and InfiniBand Smart NICs, Super NICs and DPUs / IPUs use transceiver modules in SFP (small form-factor pluggable), QSFP (quad small form-factor pluggable) or OSFP (octal small form-factor pluggable) ports, connected by fibre optic cables for the fastest data transmission. The table below shows the speeds and compatibilities of the various types of transceiver.

Not all types of transceiver are backward compatible with NIC ports due to varying sizes, so it is best to check individual network card specifications.

| 1G |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 10G |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 25G |

✖ |

✔ |

✔ |

✖ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 40G |

✖ |

✖ |

✖ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 50G |

✖ |

✖ |

✔ |

✖ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 100G |

✖ |

✖ |

✖ |

✔ |

✔ |

✔ |

✔ |

✔ |

✔ |

| 200G |

✖ |

✖ |

✖ |

✖ |

✖ |

✔ |

✔ |

✔ |

✔ |

| 400G |

✖ |

✖ |

✖ |

✖ |

✖ |

✖ |

✔ |

✔ |

✔ |

| 800G |

✖ |

✖ |

✖ |

✖ |

✖ |

✖ |

✖ |

✖ |

✔ |

Advanced Network Card Features

In addition to the management features mentioned above, there are various additional ways to improve the performance of your NICs. Explore the sections below to find out more.

Redundancy

Redundancy, when referring to network interface cards, usually means using multiple cards to make more than one connection to the network switch. This has two benefits. Firstly, should one NIC malfunction, the connection is not lost, as the other NIC takes over. Secondly, whilst both NICs (and their respective ports) are functioning normally, data traffic can be load-balanced across the ports and NICs to improve throughput.
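The two benefits can be illustrated with a toy model. In practice, failover and load balancing are handled by the operating system's bonding or teaming driver together with the switch, not by application code. The sketch below only shows the idea; the function name `distribute_flows` and the port names `nic0` and `nic1` are hypothetical.

```python
from typing import Dict, List


def distribute_flows(flows: List[str], port_up: Dict[str, bool]) -> Dict[str, List[str]]:
    """Spread flows round-robin across the ports that are currently up."""
    healthy = [port for port, up in port_up.items() if up]
    if not healthy:
        raise RuntimeError("no healthy ports: connection to the switch is lost")
    assignment: Dict[str, List[str]] = {port: [] for port in healthy}
    for index, flow in enumerate(flows):
        # Round-robin: each flow goes to the next healthy port in turn.
        assignment[healthy[index % len(healthy)]].append(flow)
    return assignment


if __name__ == "__main__":
    flows = ["backup", "database", "video", "web"]
    # Both NICs healthy: traffic is shared between them.
    print(distribute_flows(flows, {"nic0": True, "nic1": True}))
    # nic0 has failed: nic1 takes over all traffic.
    print(distribute_flows(flows, {"nic0": False, "nic1": True}))
```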

Cables

In line with selecting the correct NICs for all your connected devices, you also need to ensure that compatible cabling is selected. RJ45 Ethernet cables are classified as Cat 5, 6, 6a, 7 or 8 for differing speeds. SFP, QSFP and OSFP cables can be used for both Ethernet and InfiniBand, and come in two types: DAC (Direct Attached Copper), a cable that combines the transceivers and copper cable in one assembly; or AOC (Active Optical Cable), which is similar to a DAC except that it uses multimode or single-mode fibre instead of copper, providing longer reach and lighter weight.

Software

Software provides an innovative approach to bridge the gap between servers, applications and fabric elements. Working independently or synergistically, it can improve monitoring, reduce latency, and offload CPU cycles to enhance the performance of applications for higher productivity, and improved business results.

Examples include NVIDIA HPC-X, acceleration software that boosts performance by reducing latency and increasing throughput, and NVIDIA Unified Fabric Manager (UFM), an end-to-end network management solution that enables monitoring, management, analytics and visibility - from the edge to the datacentre and cloud. Learn more about NVIDIA software by reading our NVIDIA NETWORKING BUYERS GUIDE.

Need Help Choosing?

If you would like further advice on the best connectivity solution for your system, don't hesitate to get in touch with our friendly team.

Frequently Asked Questions (FAQ)

Here are some common questions and answers to help you find the information you need.