The Ultimate Desktop For Developing Generative AI

The growing size and complexity of generative AI models require systems with large amounts of memory and fast networking. The DGX Station GB300 provides datacentre-level performance in a desktop device. This makes it the ultimate office-based system for AI developers, researchers and data science teams.

The DGX Station GB300 is powered by the GB300 Superchip, which combines 72 powerful Arm CPU cores and a Blackwell Ultra GPU, served by a huge 784GB of coherent memory. 800Gb/s ConnectX-8 networking connects the system to datacentres or the cloud. It runs the NVIDIA Base OS and the NVIDIA AI Enterprise software stack, and is backed by specialist technical advice from NVIDIA DGXperts.

Enquire Now

Accelerated Generative AI with NVIDIA GB300 Superchip

The latest DGX Station features the Grace Blackwell GB300 Superchip, a complete SoC comprising a 72-core Grace Neoverse V2 CPU with 496GB of LPDDR5X memory and a Blackwell Ultra GPU with 288GB of HBM3e memory. The CPU and GPU elements of the SoC communicate with one another over a 900GB/s NVLink C2C link for incredible AI performance.
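The 784GB coherent memory figure quoted for the system is the sum of the CPU and GPU memory pools. A minimal sketch of that arithmetic in Python follows; the constant and function names are illustrative only and are not part of any NVIDIA API.

```python
# Memory capacities as quoted on this page, in GB.
CPU_MEMORY_GB = 496  # LPDDR5X attached to the Grace CPU
GPU_MEMORY_GB = 288  # HBM3e attached to the Blackwell Ultra GPU


def coherent_memory_gb(cpu_gb: int, gpu_gb: int) -> int:
    """Return the combined capacity of the CPU and GPU memory pools."""
    return cpu_gb + gpu_gb


total_gb = coherent_memory_gb(CPU_MEMORY_GB, GPU_MEMORY_GB)
print(f"Total coherent memory: {total_gb}GB")  # prints "Total coherent memory: 784GB"
```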

| SPECIFICATION | GB300 |
|---|---|
| ARCHITECTURE | NVIDIA GB300 Superchip |
| CPU | Grace Neoverse V2 |
| CPU CORES | 72 |
| CPU MEMORY | 496GB LPDDR5X |
| GPU | Blackwell Ultra |
| CUDA CORES | TBC |
| TENSOR CORES | TBC |
| GPU MEMORY | 288GB HBM3e |

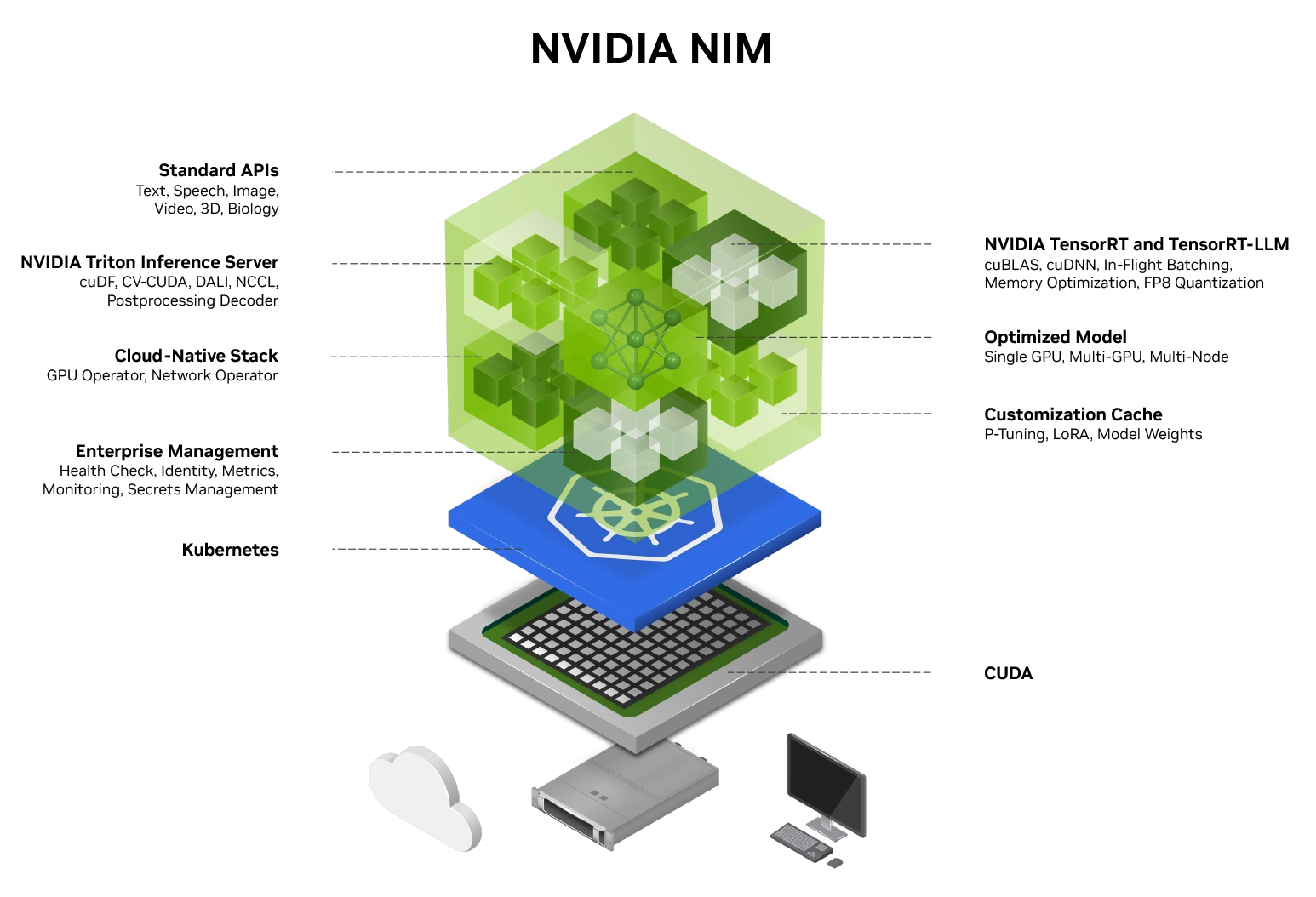

Generative AI-Ready Software Stack

NVIDIA Base OS powers the DGX Station GB300, enabling organisations to leverage the best of NVIDIA software innovation. Developers can unleash the full potential of their DGX Station with a proven platform that provides a complete AI software stack, including access to NVIDIA NIM microservices and NVIDIA Blueprints, further enhanced by NVIDIA AI Enterprise.

NVIDIA AI Enterprise

NVIDIA AI Enterprise unlocks access to a wide range of frameworks that accelerate the development and deployment of AI projects. Leveraging pre-configured frameworks removes many of the manual tasks and complexity associated with software development, enabling you to deploy your AI models faster as each framework is tried, tested and optimised for NVIDIA GPUs. The less time spent developing, the greater the ROI on your AI hardware and data science investments.

Rather than leaving you to assemble thousands of co-dependent libraries and APIs from different authors when building your own AI applications, NVIDIA AI Enterprise removes this pain point by providing the full AI software stack, including frameworks for applications such as healthcare, computer vision, speech and generative AI.

Enterprise-grade support is provided on a 9x5 basis with a 4-hour SLA and direct access to NVIDIA’s AI experts, minimising risk and downtime while maximising system efficiency and productivity.

Datacentre-Level Performance In Your Office

Unlike most AI solutions, the DGX Station GB300 has been purpose-built for office environments. As a desktop PC-style device, it does not require the specialist power, cooling and racking of datacentre solutions, nor does it suffer from the loud noise associated with server systems. This means you don’t have to compromise on the size of your data, the privacy of your proprietary models, or the speed at which you work before scaling out to the datacentre.

Start Your Generative AI Journey

The NVIDIA DGX Station GB300 is available with three- and five-year support contracts, which can be extended at a later date. Comprehensive media retention packages are also available for more data-sensitive projects.

GPU: NVIDIA Blackwell Ultra

GPU RAM: 288GB HBM3e

CPU: NVIDIA Grace-72 Core Neoverse V2

CPU RAM: 496GB LPDDR5X

System Drives: TBC

Storage Drives: TBC

Networking: 800Gb/s NVIDIA ConnectX-8 InfiniBand/Ethernet

Power: TBC

Form Factor: Desktop PC

01204 474210