AI Storage by DDN

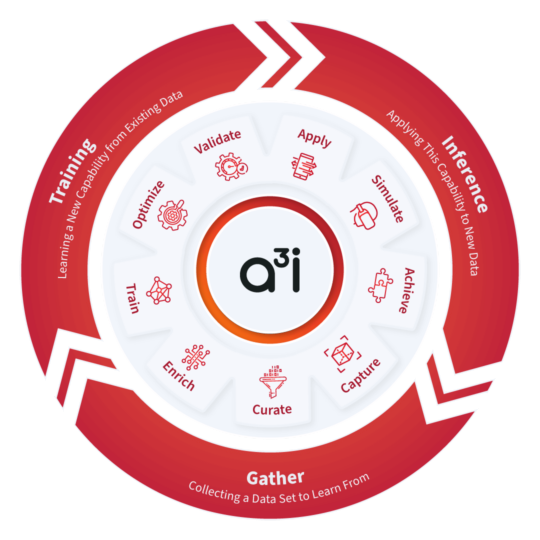

DataDirect Networks (DDN) is a leading big data storage supplier to data-intensive, global organisations. DDN’s A3I (Accelerated, Any-Scale AI) data management solutions are the ideal partner for high performance GPU-accelerated systems such as 3XS EGX, MGX and HGX servers and NVIDIA DGX AI appliances. Engineered from the ground up for the AI-enabled datacentre, A3I solutions are optimised for ingest, training, data transformations, replication, metadata and small data transfers. They are optimised for all types of data structure, so they deliver data rapidly to applications and keep GPU resources fully utilised, even with distributed applications running on multiple servers or clusters.

Performance to Suit your Demands

DDN offers flexibility in platform choice with the AI400X3 and AI400X2 - both configurable as all-flash or hybrid flash and hard drive storage solutions. These leverage parallel access to high performance flash and deeply expandable archive HDD storage.

TLC Flash Systems

TLC-based AI appliances, built on DDN's EXAScaler parallel file system, support the largest and most complex transformer-based AI workloads, such as LLMs and deep learning recommender systems.

QLC Flash Systems

QLC-based systems offer greater storage capacity and density than TLC solutions, for better cost-efficiency. Traditionally this would come at the cost of performance, but QLC combined with DDN's EXAScaler file system delivers 10x the performance of traditional QLC scale-out file solutions.

Archive Disk

Archival data remains essential too, especially in the context of AI applications, and needs to be accessible. DDN leverages unique SSD and HDD hybrid technology to minimise complexity while delivering both performance and cost-effective capacity.

A³I Cluster Configurations

To meet the requirements of a variety of workloads, the DDN A³I architecture leverages a choice of AI400X3 or AI400X2 appliances. These GPU-optimised parallel file systems deliver up to 140GB/s of throughput and over 4M IOPS to applications via 400Gb/s network connections.

Engineered to provide the highest data availability and maximum system uptime, all DDN hardware and software components are integrated as a fully redundant system. Using A³I nodes, the cluster can scale to over 10PB of data and is managed by the DDN Insight platform - a single interface to monitor arrays, filesystems, servers and applications - regardless of the size of system.

The DDN A³I Range

The DDN A³I AI400X2 - powered by DDN Insight - can drive workloads at the edge, in the datacentre or in the cloud. These nodes are available in a cost-effective all-flash form factor that delivers blazing performance to meet the needs of today's and tomorrow's workloads.

| System | AI400X3 | AI400X2T | AI400X2 |
|---|---|---|---|
| Drive Bays (per 2U) | 24x 2.5in dual port | 24x 2.5in dual port | 24x 2.5in dual port |
| Drives Supported | 30TB, 60TB, 120TB, 250TB, 500TB NVMe | 30TB, 60TB, 120TB, 250TB, 500TB NVMe | 30TB, 60TB, 120TB, 250TB, 500TB NVMe |
| Sequential Read | 140GB/s | 115GB/s | 90GB/s |
| Sequential Write | 110GB/s | 75GB/s | 65GB/s |
| IOPS | 4M | 3M | 3M |
| Networking | 4x InfiniBand NDR / 400GbE or 8x InfiniBand HDR / 200GbE | 8x InfiniBand HDR / 200GbE | 8x InfiniBand HDR / 200GbE |
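
As a rough guide to reading these figures, the short Python sketch below estimates how many appliances a target aggregate read throughput might call for, and converts the listed network links into an approximate line-rate ceiling in GB/s. It is a back-of-the-envelope illustration only: the per-appliance numbers are the peak sequential read values from the table, the 500GB/s target is a hypothetical example, and the function names and the assumption that throughput scales linearly with appliance count are ours, not DDN's. Real-world sizing depends on workload, network design and capacity needs.

```python
import math

# Peak sequential read per appliance in GB/s, copied from the table above.
SEQ_READ_GBS = {"AI400X3": 140, "AI400X2T": 115, "AI400X2": 90}


def appliances_needed(target_read_gbs: float, model: str) -> int:
    """Estimate the appliance count for a target aggregate read rate.

    Assumption (ours): throughput scales linearly with appliance count.
    """
    return math.ceil(target_read_gbs / SEQ_READ_GBS[model])


def network_ceiling_gbs(ports: int, gbits_per_port: int) -> float:
    """Convert port count and per-port Gb/s into a line-rate ceiling in GB/s."""
    return ports * gbits_per_port / 8  # 8 bits per byte, protocol overhead ignored


if __name__ == "__main__":
    target = 500  # hypothetical aggregate read target in GB/s
    for model in SEQ_READ_GBS:
        print(f"{model}: {appliances_needed(target, model)} appliances")
    print("4x 400Gb/s links:", network_ceiling_gbs(4, 400), "GB/s")
    print("8x 200Gb/s links:", network_ceiling_gbs(8, 200), "GB/s")
```

Both networking options work out to the same nominal ceiling, which sits above the quoted peak sequential read of every model in the table.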

Ready to Deploy Your AI Infrastructure?

As a leading NVIDIA Elite Partner and the UK's only NVIDIA-certified DGX Managed Services Partner, Scan is ideally suited to design and deploy your GPU-accelerated compute & DDN-based storage cluster. Contact our AI experts for further information on 01204 474210 or on [email protected]