CPU Performance for Audio

The CPU determines how many plugins and effects you can run and, ultimately, how much performance you have overall.

Which CPU is right for you?

A key component in any computer system, the CPU (Central Processing Unit) is where it all comes together. The CPU is responsible for getting the work done, and in audio applications its performance decides how many channels of VSTis and plug-in effects are available to you inside your projects.

A common question is which metric matters more: single-core or multi-core performance? Historically, the best advice for the smooth running of effect chains has been to pay attention to single-core performance, but that advice is often given without the context as to why.

Most DAW software works by processing an entire channel on the same core or processing thread. Not only are the audio or VSTi and all of the effects within a channel processed together, it often proves most efficient to process associated channels together too. Grouped instances and side-chained tracks may therefore all end up being processed on the same core, so as to minimize the latency caused by splitting and recombining the signal.
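As a rough illustration of that behaviour, the sketch below keeps linked channels together and places each bundle on the least busy core. It is a simplified model, not how any particular DAW schedules its work: the `Channel` class, the `cost_ms` figures and the greedy placement are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Channel:
    name: str
    cost_ms: float               # assumed time to render one buffer of the full chain
    group: Optional[str] = None  # linked channels (bus, side-chain) share a group name


def assign_to_cores(channels, n_cores):
    """Greedy placement: linked channels stay together, heaviest bundle first."""
    # Bundle channels that must be processed on the same core.
    units = {}
    for ch in channels:
        key = ("group", ch.group) if ch.group is not None else ("solo", ch.name)
        units.setdefault(key, []).append(ch)

    cores = [{"load_ms": 0.0, "channels": []} for _ in range(n_cores)]
    ordered = sorted(units.values(),
                     key=lambda unit: sum(c.cost_ms for c in unit),
                     reverse=True)
    for unit in ordered:
        target = min(cores, key=lambda core: core["load_ms"])  # least busy core
        target["load_ms"] += sum(c.cost_ms for c in unit)
        target["channels"] += [c.name for c in unit]
    return cores


# Hypothetical project: two drum channels share a bus, the rest stand alone.
project = [
    Channel("kick", 1.0, group="drums"),
    Channel("snare", 1.0, group="drums"),
    Channel("bass", 0.5),
    Channel("synth", 1.5),
]
layout = assign_to_cores(project, n_cores=2)
for index, core in enumerate(layout):
    print(index, core["channels"], core["load_ms"])
```

The busiest core sets the limit in this model: a bundle cannot be split, so the heaviest linked chain has to fit on a single core, which is why single-core speed matters before core count does.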

In any system, the CPU’s base speed should be sufficient to handle the most complex chain that you might require. Once you have that baseline established, the scope for further performance comes from adding more cores, allowing you to process more channels at a time.

CPU performance tested

Over recent years, we have seen rapid growth in performance as both AMD and Intel have continued to develop and refine their latest chip designs: AMD through their highly scalable, large core count CPUs, and Intel through their hybrid big.LITTLE-style chip design. Both approaches offer a great deal of performance for an audio workstation and a sizable upgrade path over earlier models.

Crucially, the majority of DAW developers appear to have kept up, with 24-core, 48-thread support being common and a number of packages going even beyond that. This brings us to our key question: which CPU offers the best performance value for your studio?

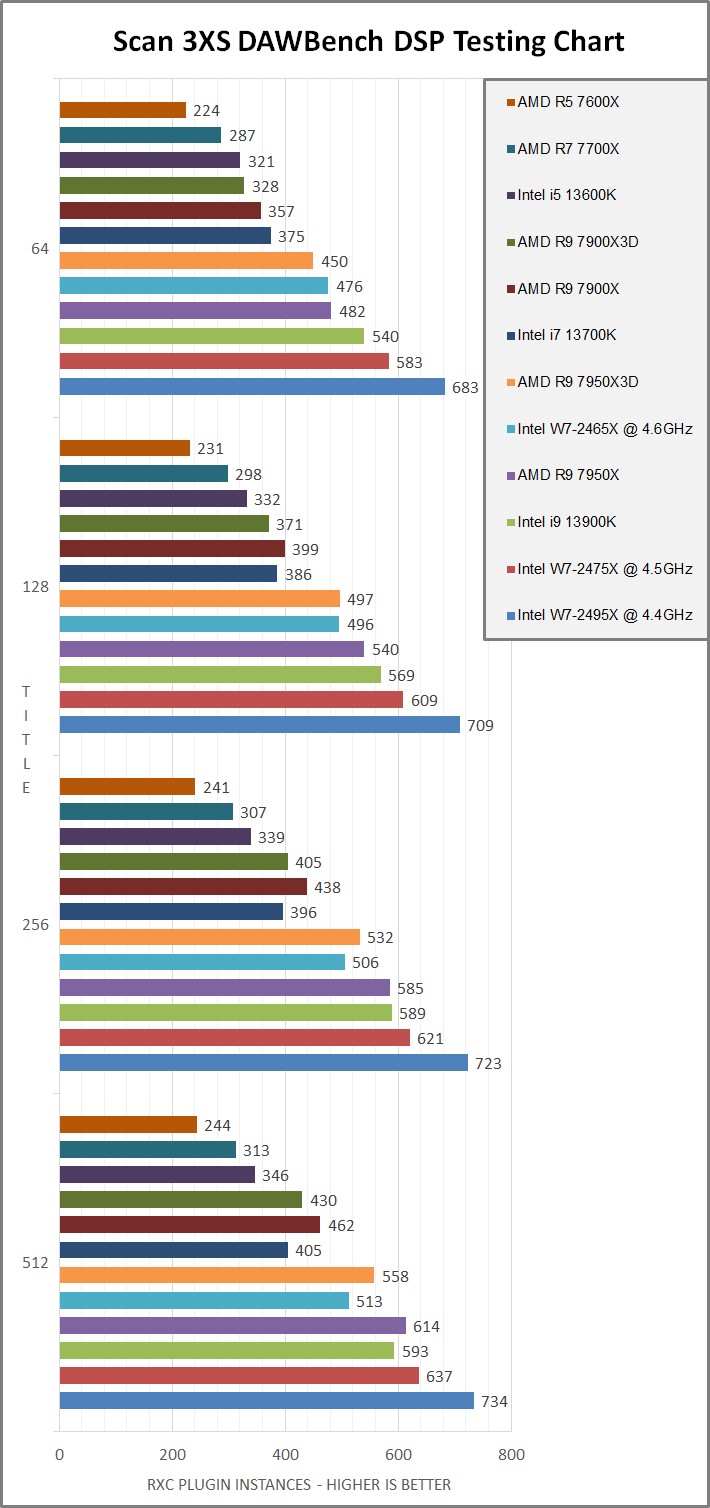

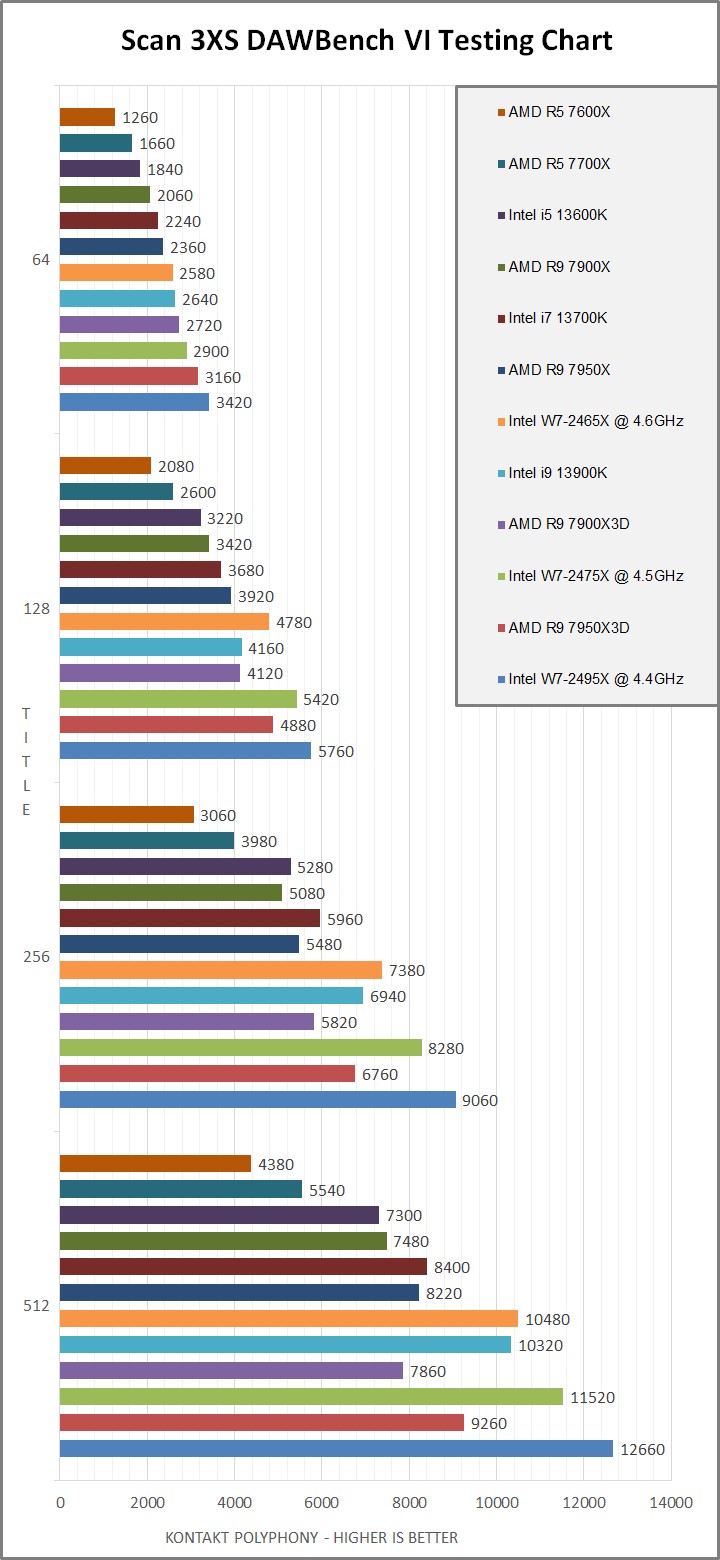

Our key test here at Scan uses the DAWBench suite, with Reaper and an RME Babyface as the basis for our testing platform. There are two versions of the test. The first is the classic DSP test, where we add instances of the ReaXComp compressor plugin until the audio starts to overload and corrupt. The second is built around Kontakt, with a large memory footprint, and loads up additional polyphony, once again until it overloads.
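The "add instances until it overloads" idea can be sketched as a simple loop. This is only a toy model under stated assumptions, not the DAWBench procedure itself, which is run by hand inside Reaper: `cost_per_instance_ms` and `deadline_ms` are hypothetical figures, and instances are assumed to spread evenly across cores.

```python
def instances_until_overload(cost_per_instance_ms, deadline_ms, n_cores=1):
    """Count how many identical plugin instances fit before a buffer is missed.

    cost_per_instance_ms: assumed time one instance needs per audio buffer.
    deadline_ms: time available to render one buffer before audio breaks up.
    """
    if cost_per_instance_ms <= 0:
        raise ValueError("cost_per_instance_ms must be positive")

    instances = 0
    while True:
        candidate = instances + 1
        # With an even spread, the busiest core carries ceil(candidate / n_cores).
        busiest_core = -(-candidate // n_cores)
        if busiest_core * cost_per_instance_ms > deadline_ms:
            return instances  # the next instance would overload the busiest core
        instances = candidate


print(instances_until_overload(0.25, 1.0, n_cores=1))
print(instances_until_overload(0.25, 1.0, n_cores=8))
```

In this model the result scales directly with core count, which real projects only approximate, since routing, memory access and driver overhead all take their share.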

DAWBench DSP Testing Results

DAWBench VI Testing Results

We have three distinct platforms shown here in the testing results: Intel’s 13th generation and AMD’s 7000 series, both found in their respective mid-ranges, along with a number of chips taken from Intel’s 2400 series HEDT (High-End Desktop) platform.

The midrange chips are built into relatively small packages and, cooling-wise, it’s advisable to keep them within their stock ratings to maintain a low noise profile. The 2400 series models are physically larger CPUs by comparison, which opens up some flexibility with the chip. Our testing results here include the use of the ASUS overclocking profile for each 2400 series model, offering on average around 20% more performance over each chip’s stock rating, whilst still remaining extremely easy to cool.

The Intel 13th gen and AMD 7000 series are directly competing ranges, often ending up at similar price points when configuring competing models such as the Intel 13900K and AMD 7950X. The Intel 2400 series, by contrast, sits above these cost-wise, both because the chips themselves are more expensive and because they rely upon supporting server-grade hardware, which carries its own premium.

Taking a look over the DAWBench DSP chart first, we find that on this more CPU-focused benchmark the 2495X and 2475X CPUs come out ahead, thanks to the larger core counts on offer and the boosted performance profile. They are followed by the Intel 13900K and AMD 7950X, vying for the lead depending on the buffer setting used, with the Intel having the advantage on tighter buffer settings. AMD has historically trailed a little behind the curve on the lowest buffer settings, although their move to DDR5 memory has helped to narrow the gap in this generation.
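To put "tighter buffer settings" into numbers, the time the CPU has to render each buffer is simply the buffer size divided by the sample rate. The short sketch below works this through for some common buffer sizes; the 44.1kHz sample rate and the list of sizes are assumptions for illustration, not the settings used in the charts.

```python
def buffer_period_ms(buffer_samples, sample_rate_hz=44100):
    """Time available to process one audio buffer, in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate_hz


# Assumed common ASIO buffer sizes at an assumed 44.1kHz sample rate.
for size in (64, 128, 256, 512):
    print(f"{size:>4} samples -> {buffer_period_ms(size):.2f} ms per buffer")
```

Halving the buffer halves the time available for every processing chain, so any fixed overhead in the CPU or memory subsystem takes up a larger share of each buffer at the tightest settings.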

The Intel 2495X sits slightly behind the 13900K whilst running its stock profile, due to the relatively low turbo performance in its specification. With the ASUS boosted profile taking the 2495X from 3.3GHz to 4.4GHz, it takes a notable lead, showing the benefit of applying this high-performance profile. The DAWBench DSP test helps to outline how performance is managed when dealing with generative soft-synths and processing effects, and it is an important indicator of performance when recording at low latency.

The VI test, by comparison, has the Intel W7-2495X and AMD 7950X3D taking the top results. The VI test is based around Kontakt and measures usable polyphony within a test project. This can be affected by a number of factors: CPU performance, RAM handling and, in this instance, the AMD 3D cache can all influence the results.

With Intel’s 13th generation, the 13900K possibly offers the most rounded high-performance option here. It puts in a strong showing in the DSP test and falls back only a few places in the VI results, so overall it offers good value and great performance for DSP-heavy projects and reasonably mixed workloads. This is helped further by the platform gaining support for 48GB memory DIMMs, which allows for up to 192GB of physical RAM, an amount previously unseen at this level of chipset.

The regular non-“3D” cache AMD 7000 series chips offer similar value to Intel’s 13th generation, although they trail their opposing chips in testing. That slightly more reserved performance is balanced out by a notably lower power usage profile. For a quieter setup in a small recording space, the AMD 7000 series chips still offer plenty of performance overall, with the benefit of requiring less intensive cooling to run well.

AMD’s “3D” cache chips fall slightly behind their regular counterparts in the DSP testing, which is to be expected given that they have lower base clock speeds and a lowered thermal maximum, both changes made to help with cooling the 3D cache. The other side of the coin is that the cache itself can really help audio library handling, as demonstrated in the VI test, where the “3D” cache revision pulls ahead each time, particularly on tighter ASIO buffer settings. The trade-off may prove of interest to anyone considering AMD and dealing with a lot of audio libraries specifically, but for most other users the regular non-“3D” versions of each chip may prove a better choice, depending on current pricing.

This leaves us with the Intel 2400 series workstation chips. Unlike the 13th generation options, this platform uses a more traditional core arrangement, applying the same level of performance across the whole of the chip. The uppermost 2495X, like the 13900K, has a 24-core arrangement, but all of its cores perform equally and support hyper-threading, as opposed to the 13900K’s mixture of 8 performance cores with hyper-threading and 16 efficient cores without. Whilst the stock configuration for the 2400 series chips came out as underwhelming due to their lower base clocks and relatively low turbo ratings, when run with the ASUS overclock profiles applied, as in the results above, they take a more notable lead, with the uppermost 2495X sitting around 20-25% ahead of the pack.
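The difference between the two 24-core layouts shows up in the number of hardware threads each chip presents to the DAW. A quick worked example, assuming hyper-threading exposes two threads per core and using a hypothetical `hardware_threads` helper:

```python
def hardware_threads(cores_with_ht, cores_without_ht=0):
    """Hardware threads exposed, assuming two threads per hyper-threaded core."""
    return 2 * cores_with_ht + cores_without_ht


# 13900K: 8 performance cores with hyper-threading, 16 efficient cores without.
i9_13900k = hardware_threads(cores_with_ht=8, cores_without_ht=16)
# W7-2495X: 24 identical cores, all with hyper-threading.
w7_2495x = hardware_threads(cores_with_ht=24)

print("13900K threads:", i9_13900k)
print("W7-2495X threads:", w7_2495x)
```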

Overall, it becomes a question of value and which setup can meet your needs. If money is no object, the Intel W7-2495X, with its extended memory and PCIe lane support, may make sense for certain performance-demanding workloads, but it comes at a cost of more than four times the retail price of an Intel 13900K or AMD 7950X for the chip alone. In contrast, the result tables help to illustrate the degree of performance and relative value on offer with the current generation of midrange CPUs. For the price of just a W7-2495X workstation CPU, you could build yourself a whole system based around a superbly performing midrange chip from either Intel or AMD that is likely to meet your studio’s requirements for many years to come.

Across the rest of the range, even the lowest entries on our chart far exceed the performance available at the high end just a few years ago. Amongst CPUs found in the same range, single-core performance is often similar, with each model simply adding more cores as we move up the range, which puts the focus on deciding the overall performance required to support your projects. For someone recording demos and small band arrangements, even an entry-level system can achieve the required low-latency performance, whilst being able to work in real time with 50+ instrument channels or heavily processed audio tracks. For those working more in the box, expanding to one of the larger chips gives you the potential for hundreds of channels, complete with complex processing chains, within your projects.

Without a doubt, all of the platforms here offer superb performance for the studio in their current incarnations, reaching a level unheard of just a few years ago. If you find your current system is running out of steam, speak to us about the best way to get the power you want from your next studio machine.